A primer on anvi'o

Table of Contents

- Downloading the pre-packaged Infant Gut Dataset

- Chapter I: Genome-resolved Metagenomics

- Importing taxonomy for genes

- Inferring taxonomy for metagenomes

- Manual identification of genomes in the Infant Gut Dataset

- Summarizing the binning results

- Renaming bins in your collection (from chaos to order)

- Refining individual MAGs: the curation step

- Chapter II: Automatic Binning

- Importing an external binning result

- Comparing multiple binning approaches

- Manually curating automatic binning outputs

- Chapter III: Phylogenomics

- Chapter IV: Pangenomics

- Generating a genome storage

- Computing and visualizing the pangenome

- Adding average nucleotide identity

- Organizing gene clusters based on a forced synteny

- Functional enrichment analyses

- Binning gene clusters

- Summarizing a pangenome

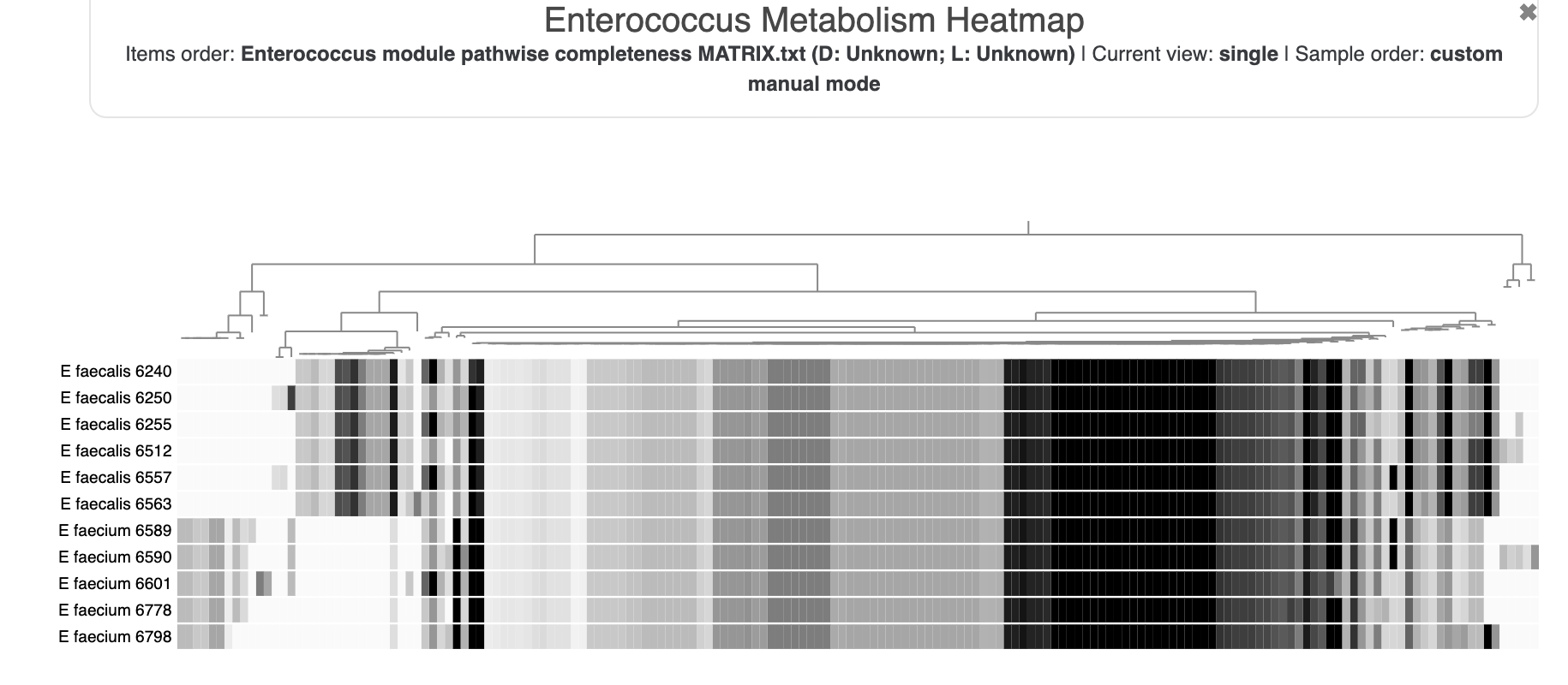

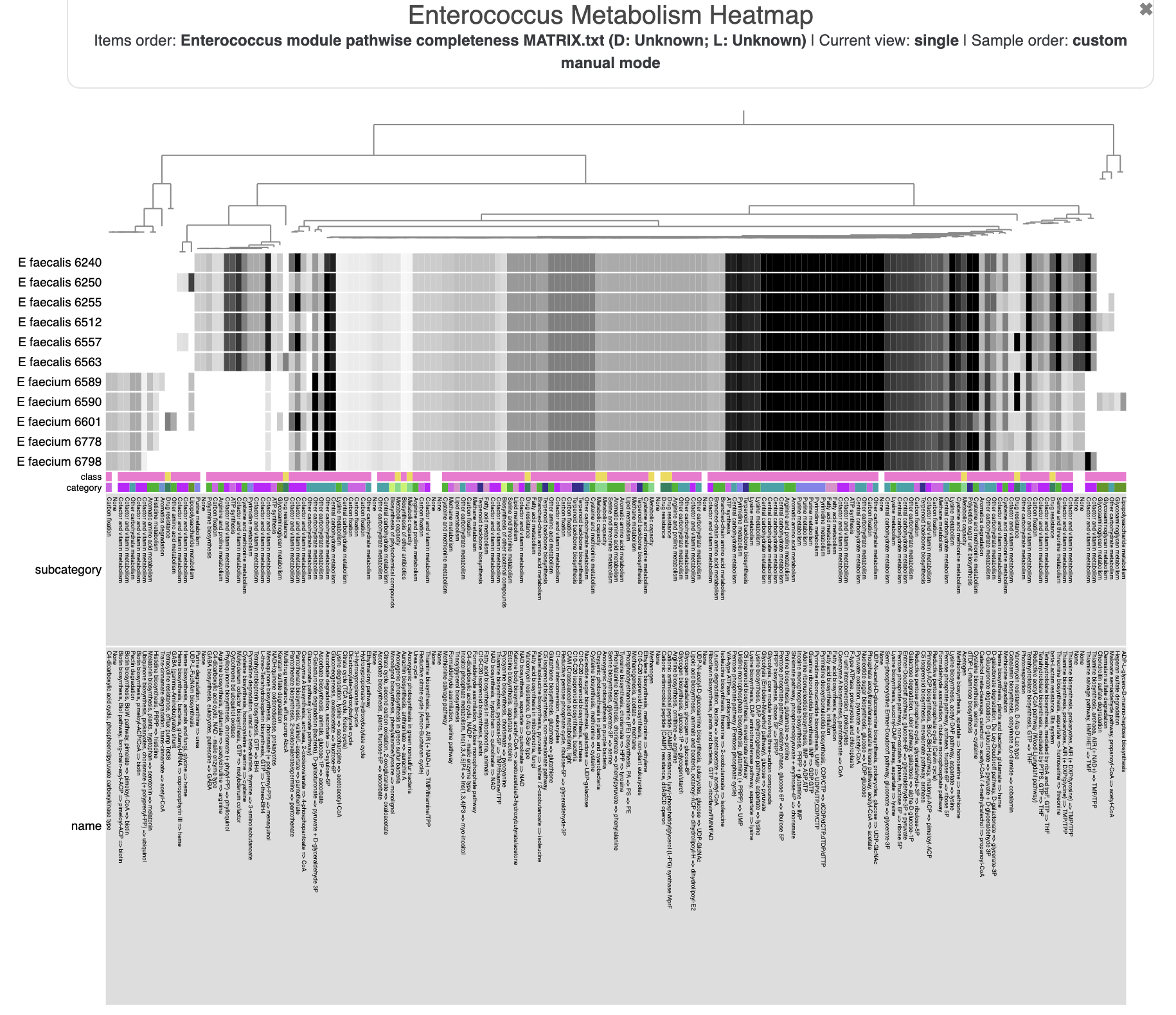

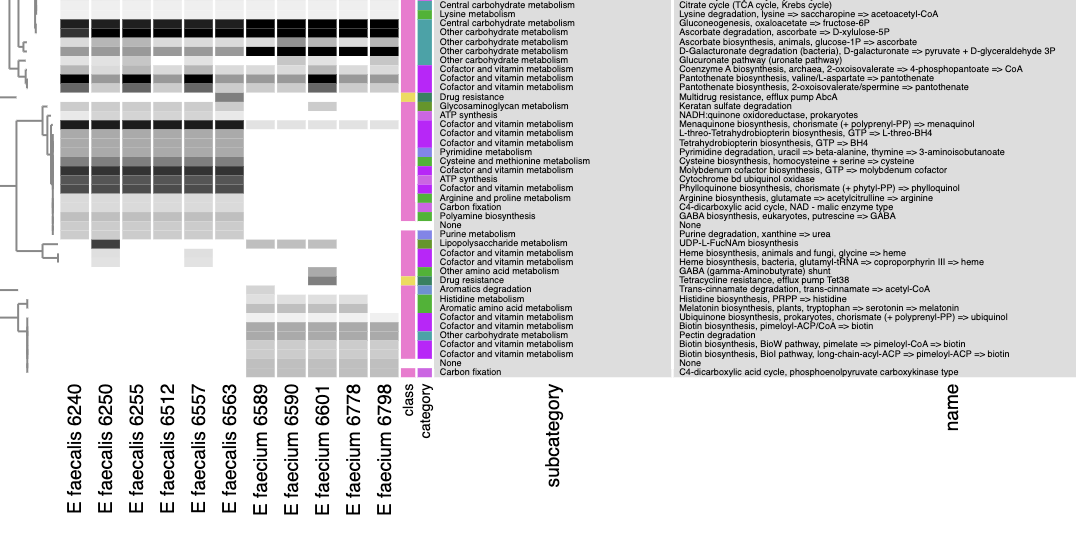

- Chapter V: Metabolism Prediction

- Some obligatory background on metabolism prediction

- Estimating metabolism in the Enterococcus genomes

- Metabolism Enrichment

- Chapter VI: Microbial Population Genetics

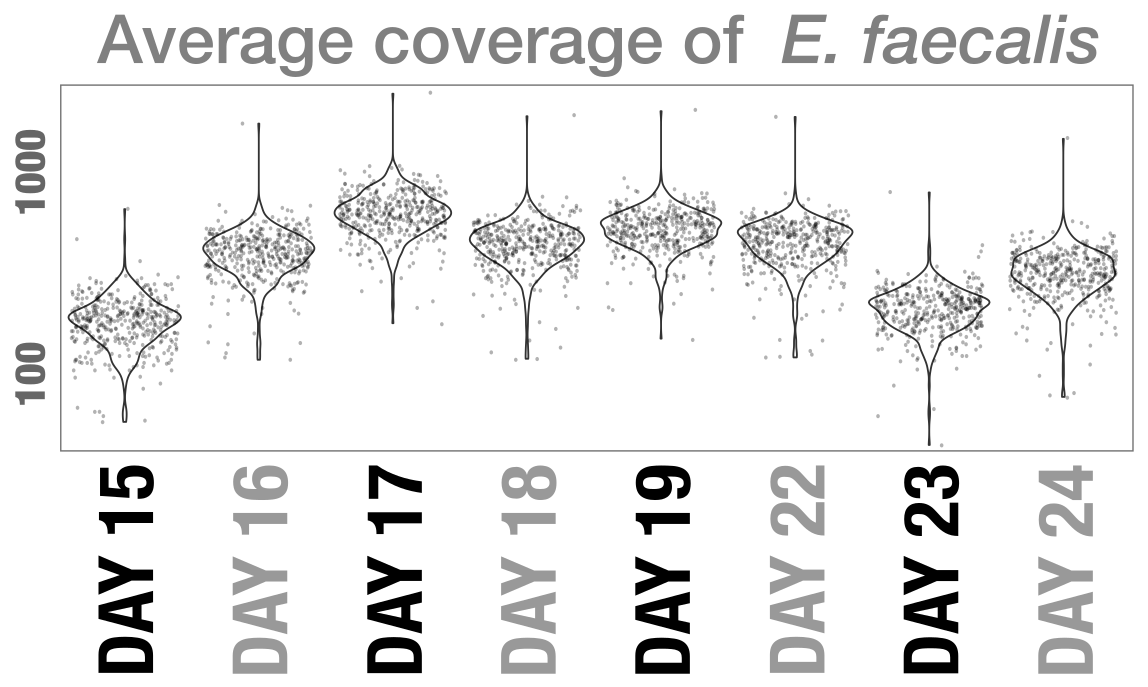

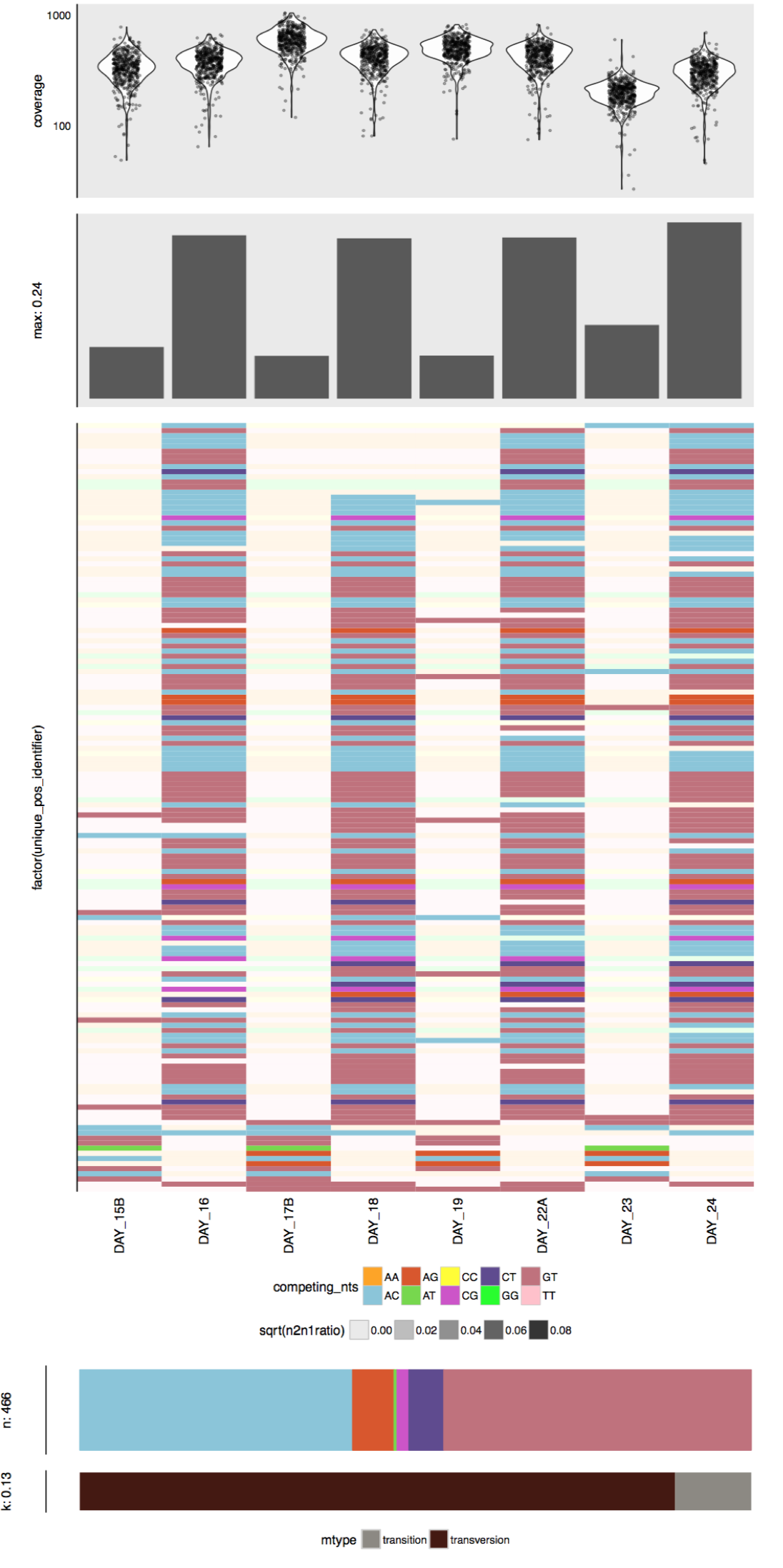

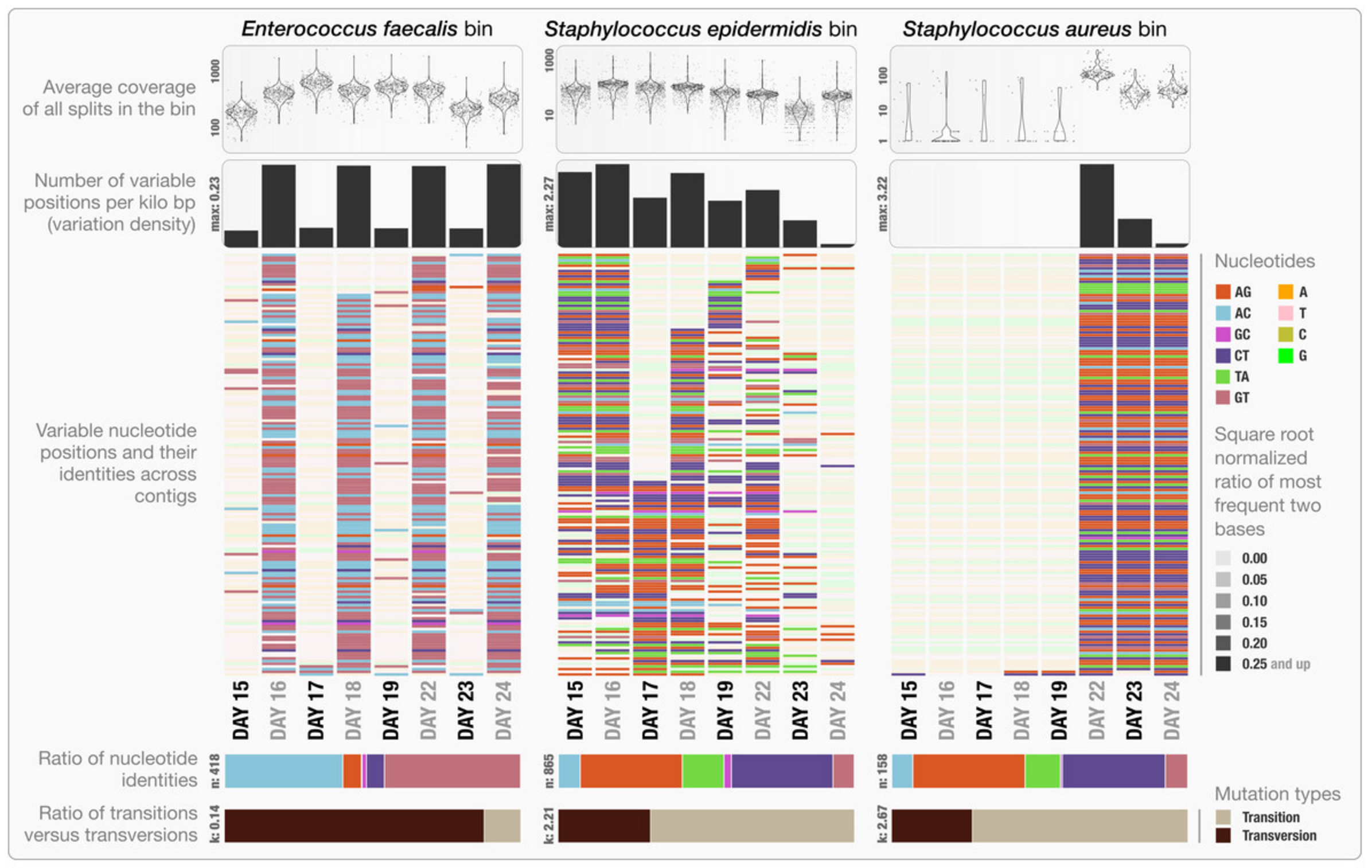

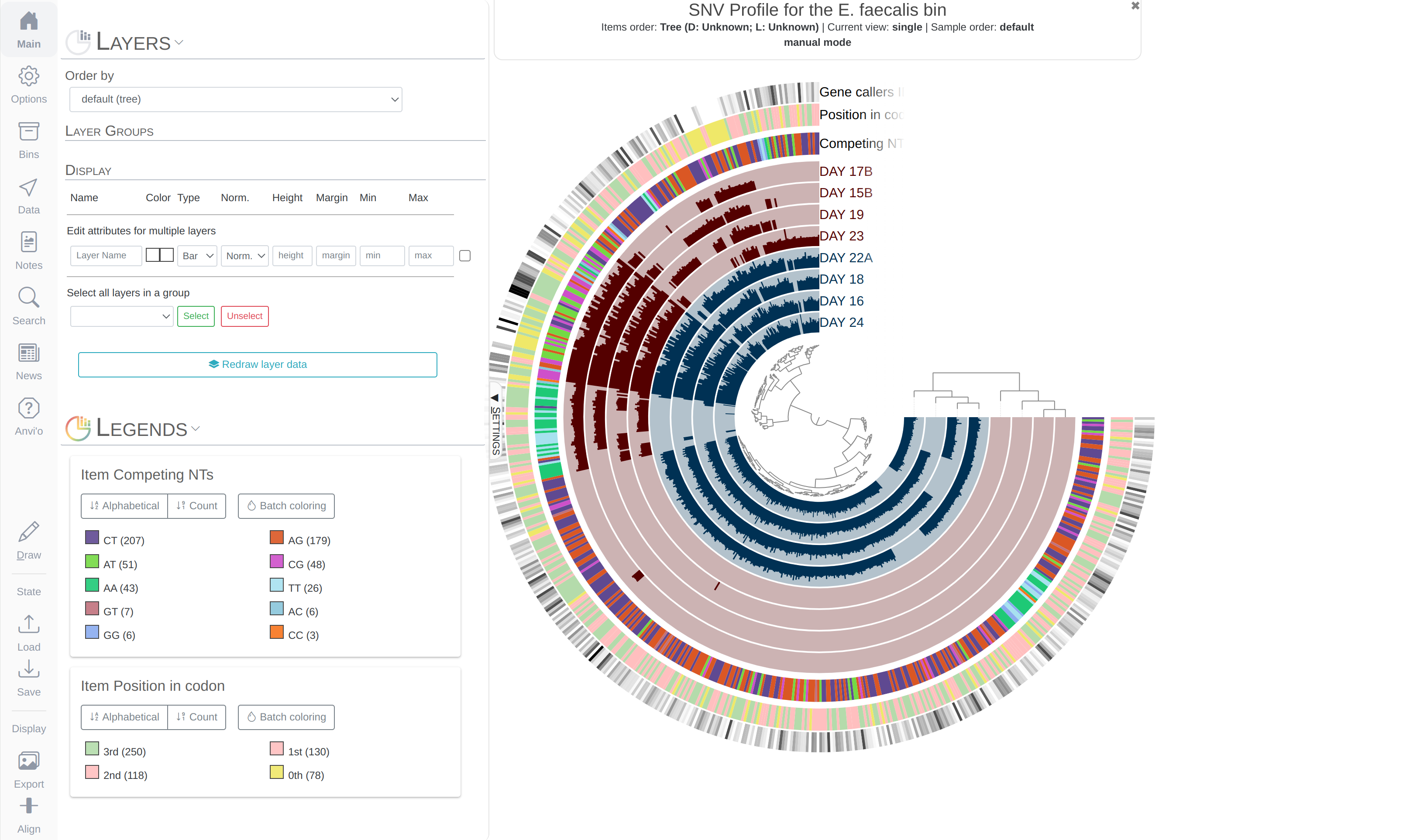

- Visualizing SNV profiles using R

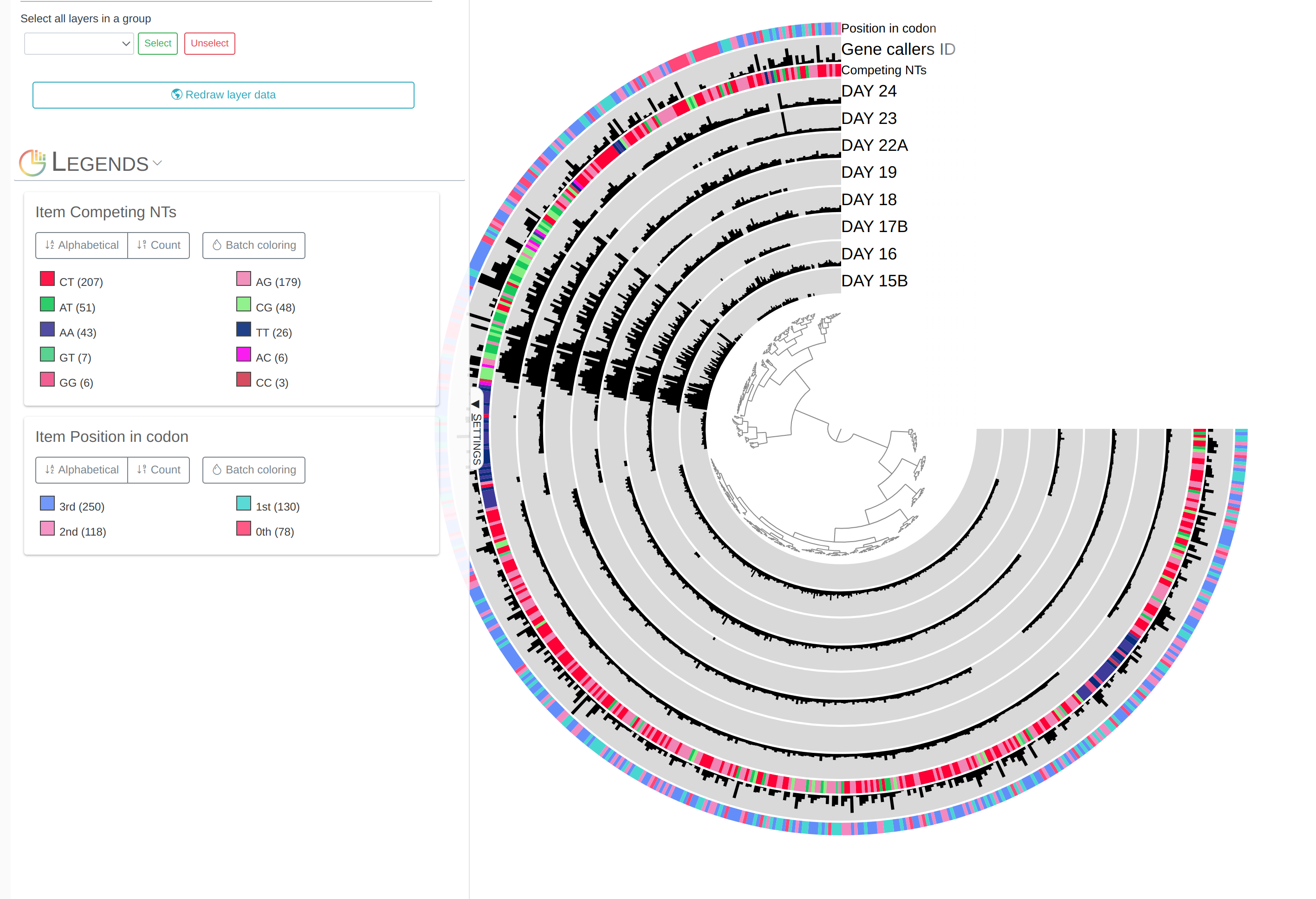

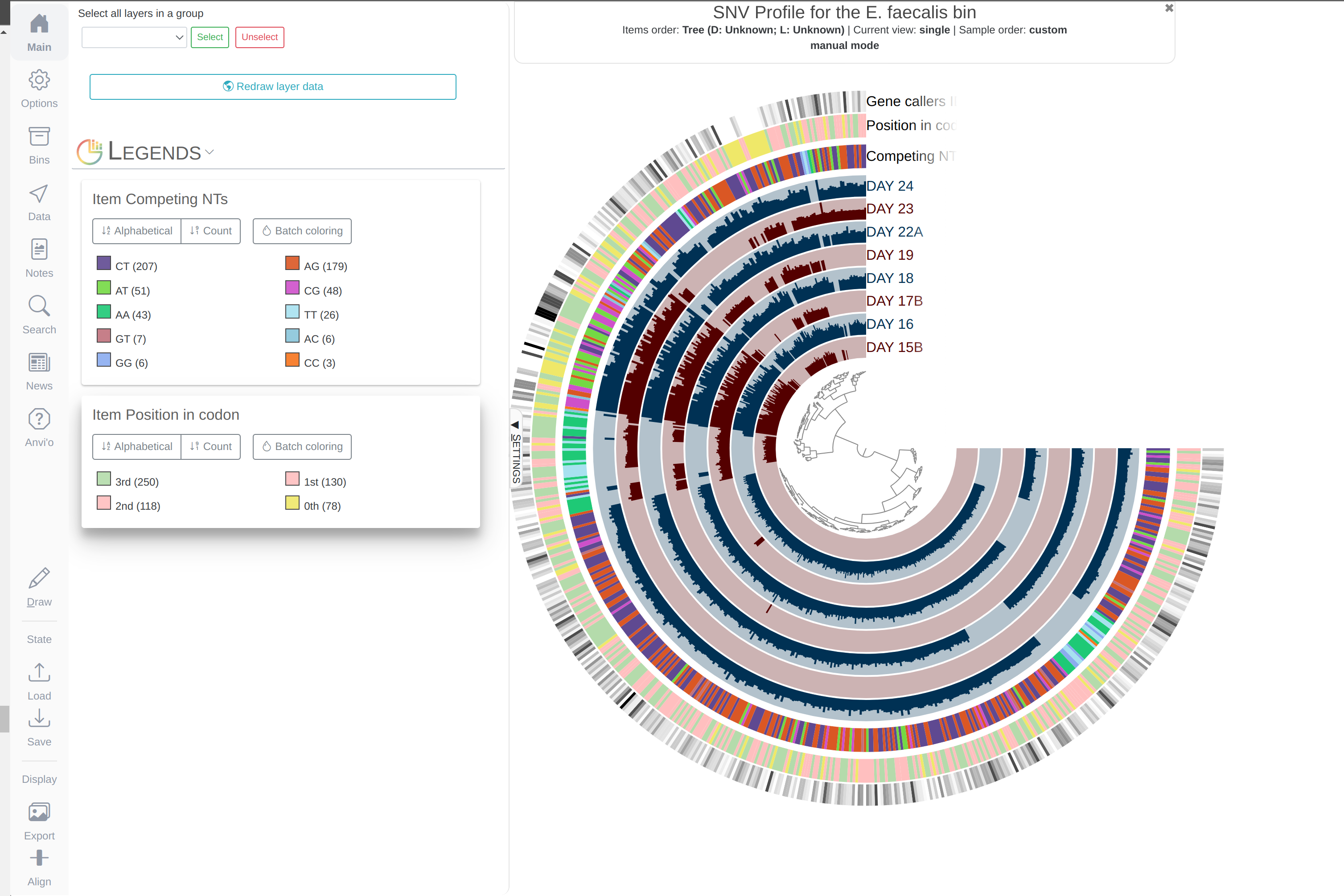

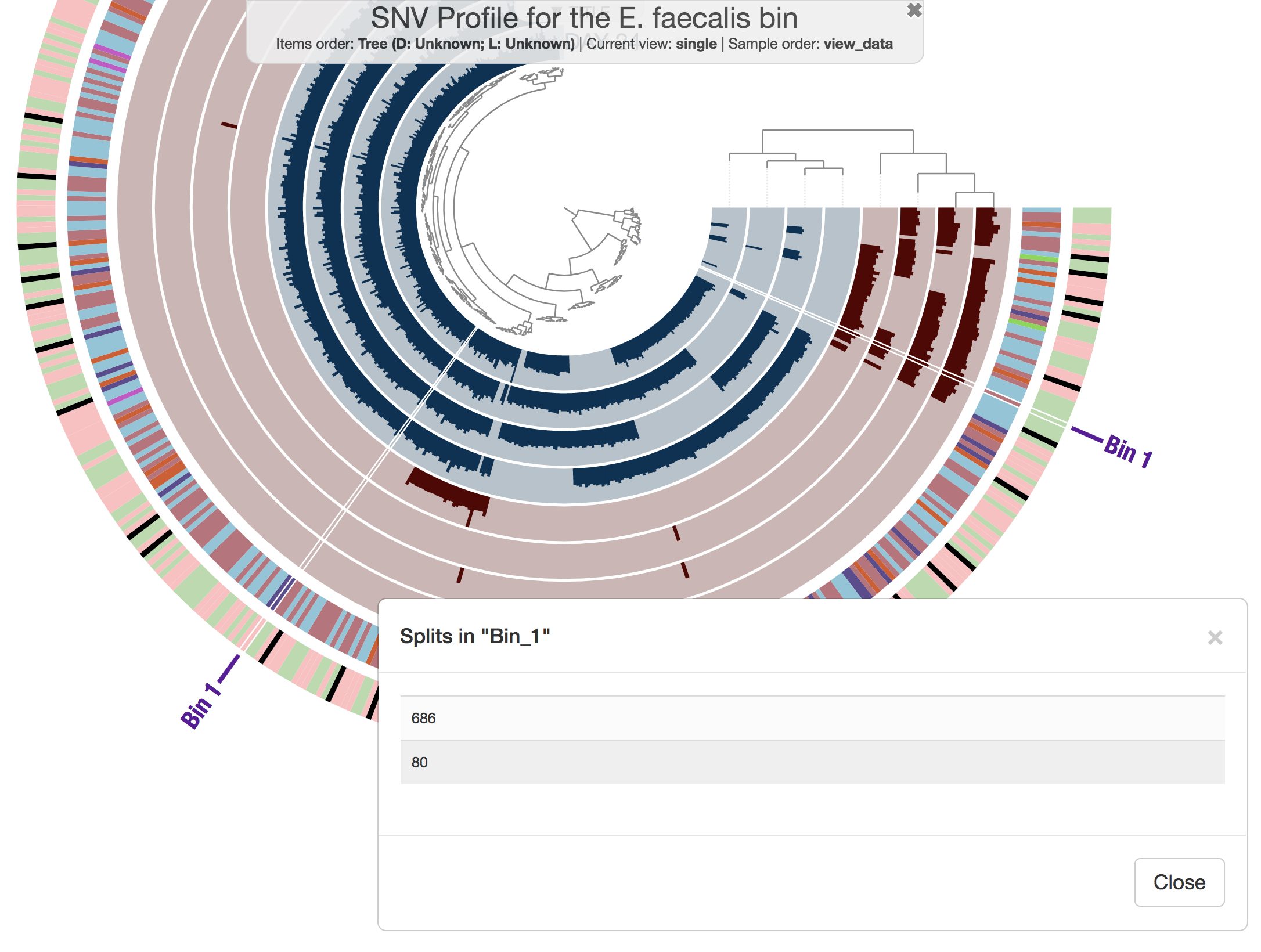

- Visualizing SNV profiles using anvi’o

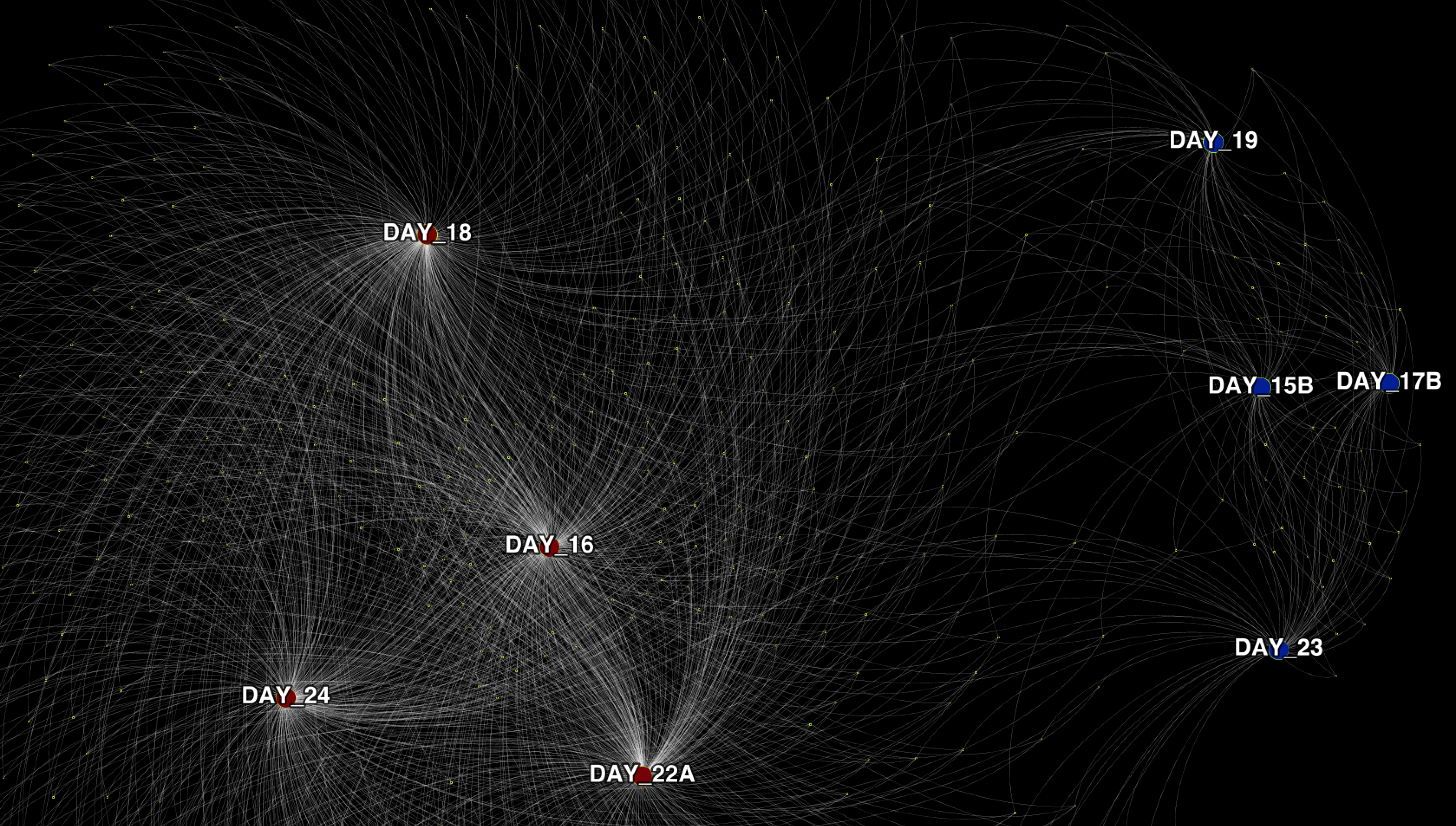

- Visualizing SNV profiles as a network

- Measuring distances between metagenomes with FST

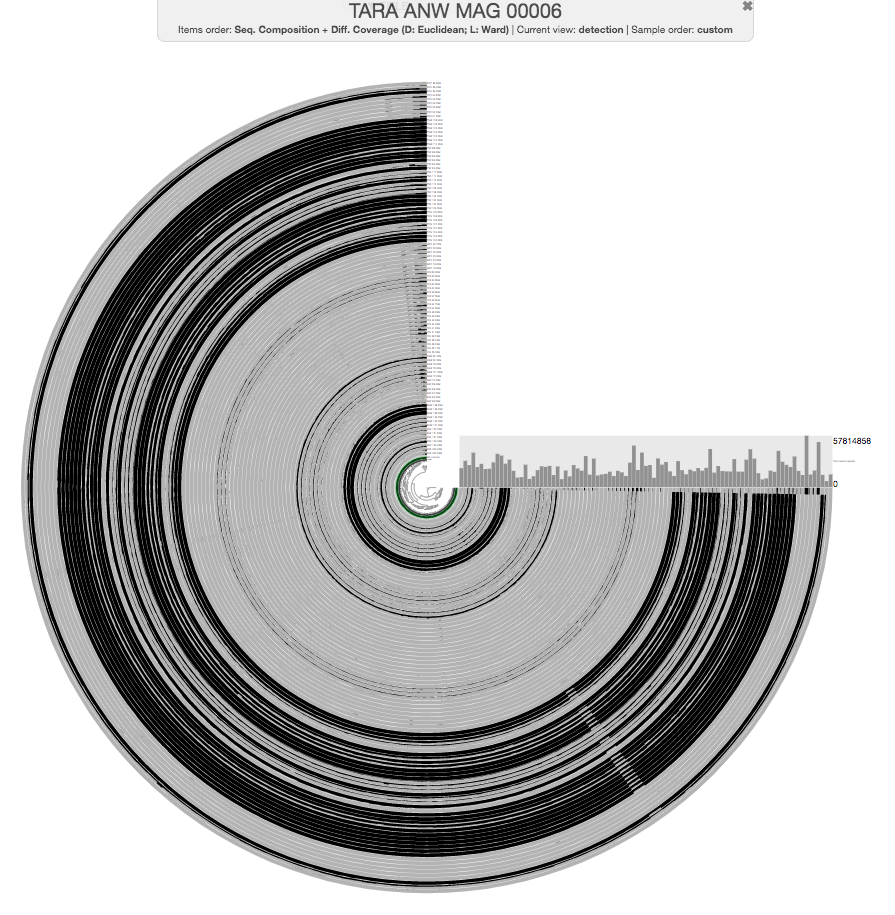

- Chapter VII: Genes and genomes across metagenomes

- Chapter VIII: From single-amino acid variants to protein structures

- Predicting binding sites with InteracDome

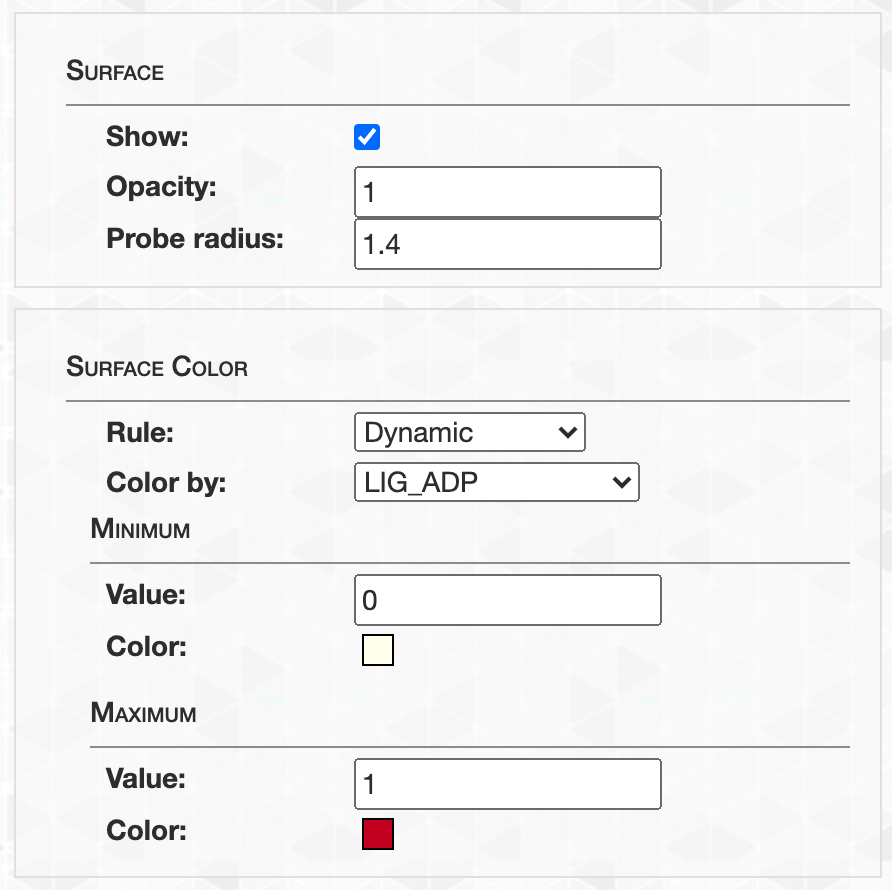

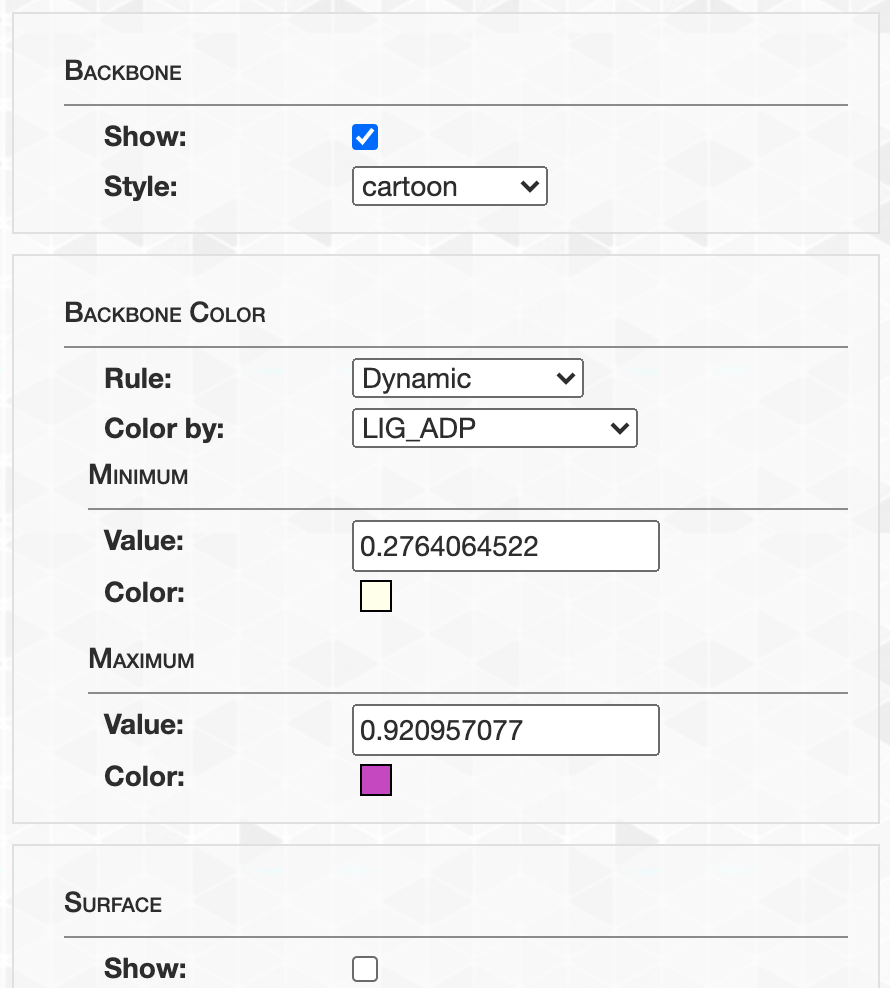

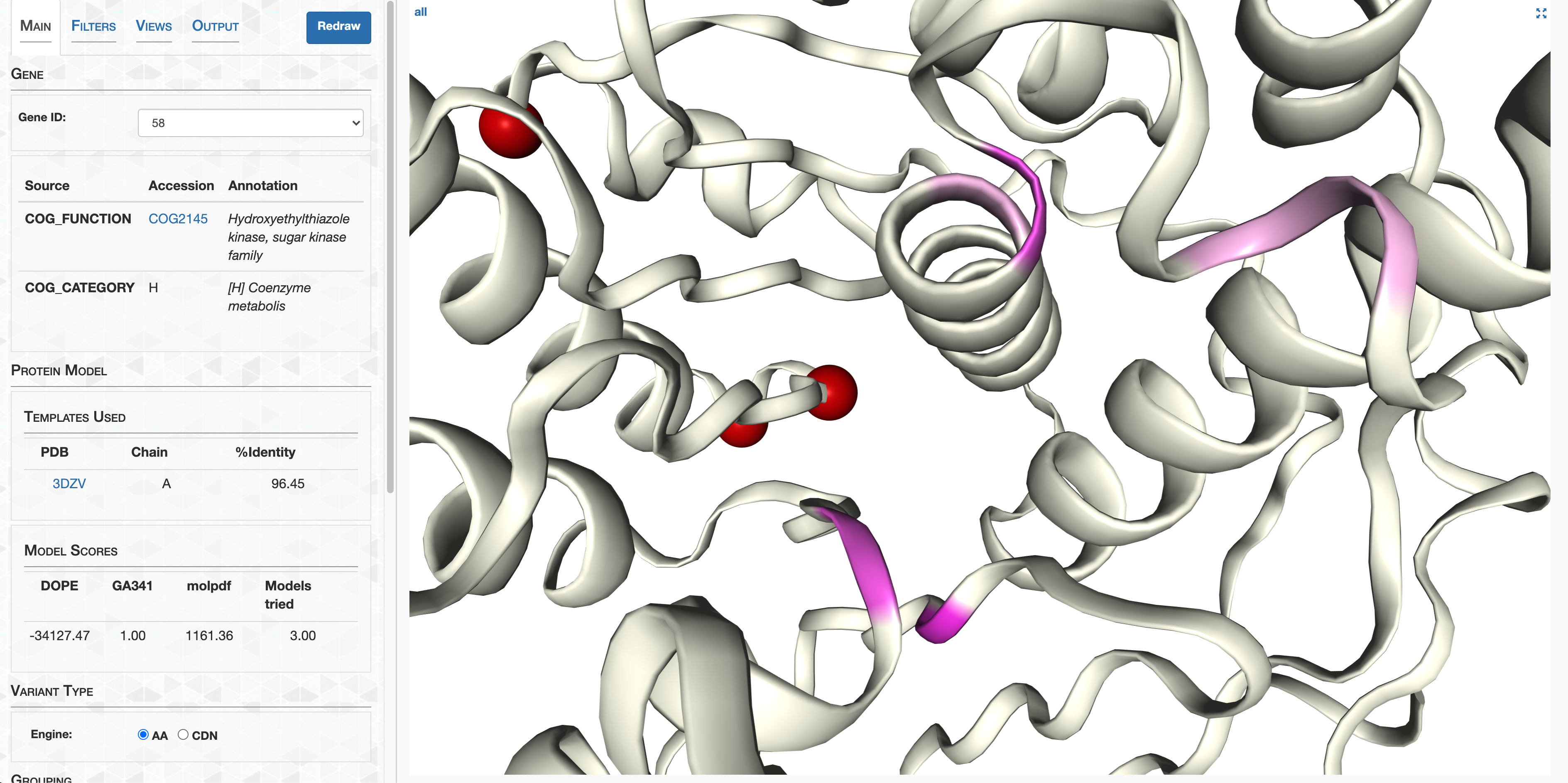

- Visualizing protein structures, SAAVs, SCVs, and binding sites with anvi’o structure

- The structure database

- Visualizing proteins with anvi-display-structure

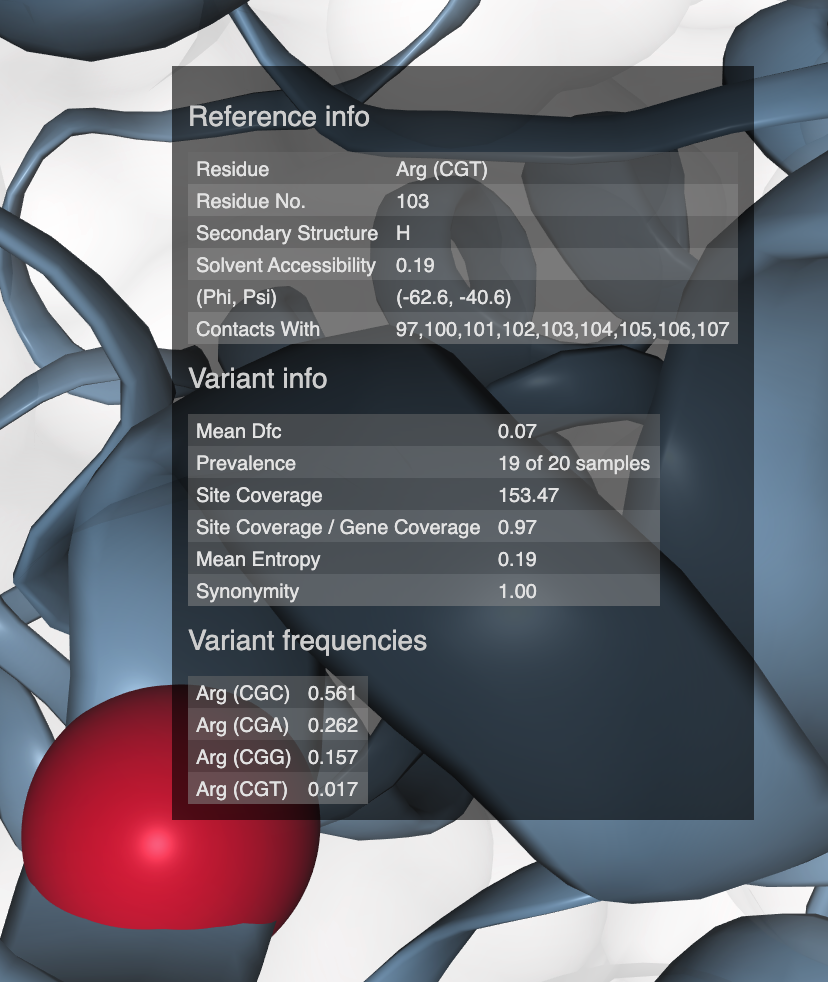

- SAAVs and SCVs

- Case study: sequence variation within a kinase binding pocket

- Grouping metagenomes

- Final words

This tutorial is tailored for anvi’o v6 or later. You can learn the version of your installation by typing anvi-interactive -v. If you have an older version, some things will not work the way they should.

The goal of this tutorial is to explore some of the most fundamental aspects of anvi’o and its application to a real-world dataset. We organized it in multiple interconnected chapters, which all use the same dataset:

- Chapter I: Genome-resolved Metagenomics

- Chapter II: Automatic Binning

- Chapter III: Phylogenomics

- Chapter IV: Pangenomics

- Chapter V: Metabolism Prediction

- Chapter VI: Microbial Population Genetics

- Chapter VII: Genes and genomes across metagenomes

- Chapter VIII: From single-amino acid variants to protein structures

At the end of this tutorial, you should be able to

- Characterize high-quality genomes from metagenomes and manually curate them,

- Incorporate automatic binning results into your metagenomes,

- Perform phylogenomic analyses to learn about their approximate location in the tree of life,

- Determine core and accessory genes they contain compared to closely related genomes through pangenomics,

- Explore within population genomic diversity through single-nuleotide variants,

- Put a given metagenome-assembled, single-cell or isolate genome into the context of public metagenomes to investigate their core and accessory genes, and

- Investigate the genomic heterogeneity in the context of predicted protein structures.

All of these sections use the same publicly available metagenomic dataset generated by Sharon et al. (2013), which contains 11 feacal samples from a premature infant collected between Day 15 and Day 24 of her life in a time-series manner. After downloading raw sequencing reads, we co-assembled all samples and processed the resulting contigs longer than 1K using anvi’o following the anvi’o metagenomic workflow. The main reason we chose this dataset was its simplicity: more than 95% of metagenomic reads map back to the contigs, a great indication of the assembled contigs describe a very large fraction of this infant’s gut microbiome.

We hope you find the tutorial useful, and generously share your opinions or criticism should you have any.

Downloading the pre-packaged Infant Gut Dataset

To download and unpack the infant gut data-pack, copy-paste the following commands into your terminal:

curl -L https://figshare.com/ndownloader/files/45076909 -o INFANT-GUT-TUTORIAL.tar.gz

tar -zxvf INFANT-GUT-TUTORIAL.tar.gz && cd INFANT-GUT-TUTORIAL

This will download a 224 Mb compressed file, and unpack it, which will take an additional 502 Mb.

If you are using a newer version of anvi’o than was the one that was used to generate these databases (perhaps you are following the development branch), you may need to run anvi-migrate to get them up to date. If you are not sure whether you need this, do not worry - you could safely skip it and anvi’o would later remind you what exactly needs to be done.

anvi-migrate --migrate-safely *.db

If you were sent here somewhere from down below, now you can go back. If you have no idea what this means, ignore this notice, and continue reading. You’re OK :)

Some details on the contents of the data-pack for the curious

If you type ls in the dataset directory you will see that the data-pack contains an anvi’o contigs database, an anvi’o merged profile database (that describes 11 metagenomes), and other additional data that are required by various sections in this tutorial. Here are some simple descriptions for some of these files, and how we generated them.

The contigs and profile databases. We generated an anvi’o contigs database using the program anvi-gen-contigs-database. This special anvi’o database keeps all the information related to your contigs: positions of open reading frames, k-mer frequencies for each contig, functional and taxonomic annotation of genes, etc. The contigs database is an essential component of everything related to anvi’o metagenomic workflow. We also generated a merged anvi’o profile database using the program anvi-profile. In contrast to the contigs database, anvi’o profile databases store sample-specific information about contigs. Profiling a BAM file with anvi’o creates a single profile that reports properties for each contig in a single sample based on mapping results. Each profile database automatically links to a contigs database, and anvi’o can merge single profiles that link to the same contigs database into an anvi’o merged profile (which is what you will work with during this tutorial), using the program anvi-merge. Here are some direct links describing these steps:

- Creating an anvi’o contigs database

- Creating an anvi’o profile database

- Merging anvi’o profile databases

Identifying single-copy core genes among contigs. We used the program anvi-run-hmms to identify single-copy core genes for Bacteria, Archaea, Eukarya, as well as sequences for ribosomal RNAs among the IGD contigs. All of these results are also stored in the contigs database. This information allows us to learn the completion and redundancy estimates of newly identified bin in the interactive interface, on the fly. Note that if all single copy-core genes for a given domain are detected once in the selected bin, then the completion will be 100% and the redundancy 0%. If a few genes are detected multiple times, the redundancy value will increase. If a few genes are missing, then it is the completion value that will drop.

Assigning functions to genes. We also ran anvi-run-ncbi-cogs and anvi-run-kegg-kofams on the contigs database before we packaged it for you, which stored functions for genes results in the contigs database. At the end of the binning process, functions occurring in each bin will be made available for downstream analyses.

Chapter I: Genome-resolved Metagenomics

The purpose of this tutorial is to have a conversation about genome-resolved metagenomics (with a focus on manual binning) using the Infant Gut Dataset (IGD), which was generated, analyzed, and published by Sharon et al. (2013), and was re-analyzed in the anvi’o methods paper.

By the end of the tutorial, you will be able to:

- Familiarize with the interactive interface for binning (~25 minutes)

- Inspect contigs in the context of their metagenomic signal (~5 minutes)

- Characterize bins by manual binning (~20 minutes)

- Summarize manual binning results for downstream analyses (~20 minutes)

- Manually curate individual bins for quality control (~10 minutes)

- Import and visualize external binning results (~20 minutes)

- Combine manual and automatic binning (~10 minutes)

By the end of this chapter, you should (1) have a comprehensive understanding of genome-resolved metagenomics, and (2) be able to characterize and manually curate genomes from your own assembly outputs moving forwards.

A typical anvi’o genome-resolved metagenomic workflow starts with one or more BAM files and a FASTA file of your contigs. There are many ways to get your contigs and BAM files for your metagenomes and we have started implementing a tutorial that describes the workflows we often use. But in this tutorial we will start from a point in the workflow where you have used your BAM and FASTA files to generate anvi’o contigs and profile databases.

While this tutorial will take you through a simple analysis of a real dataset, there also is available a more comprehensive (but more abstract) tutorial on anvi’o metagenomic workflow.

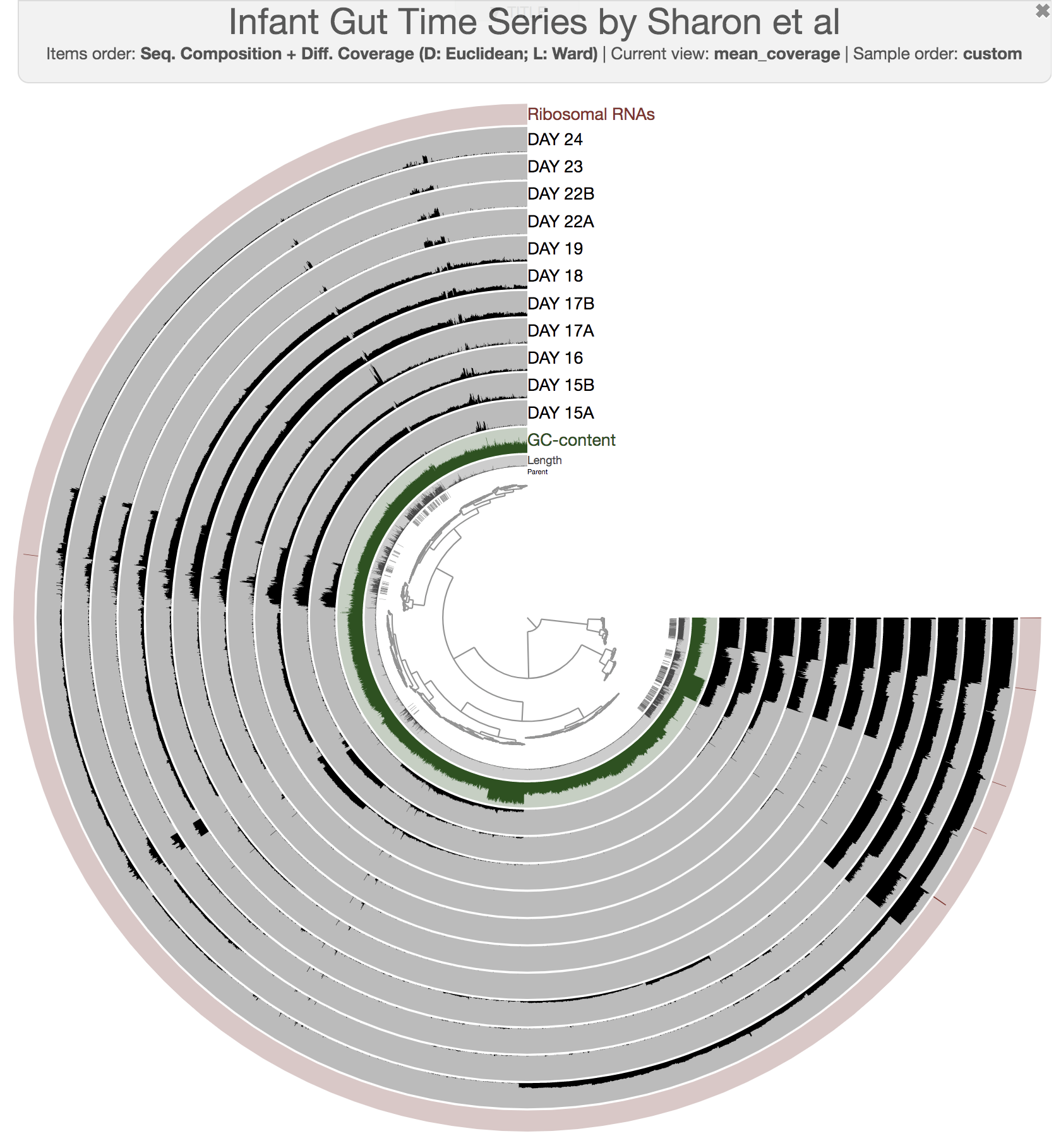

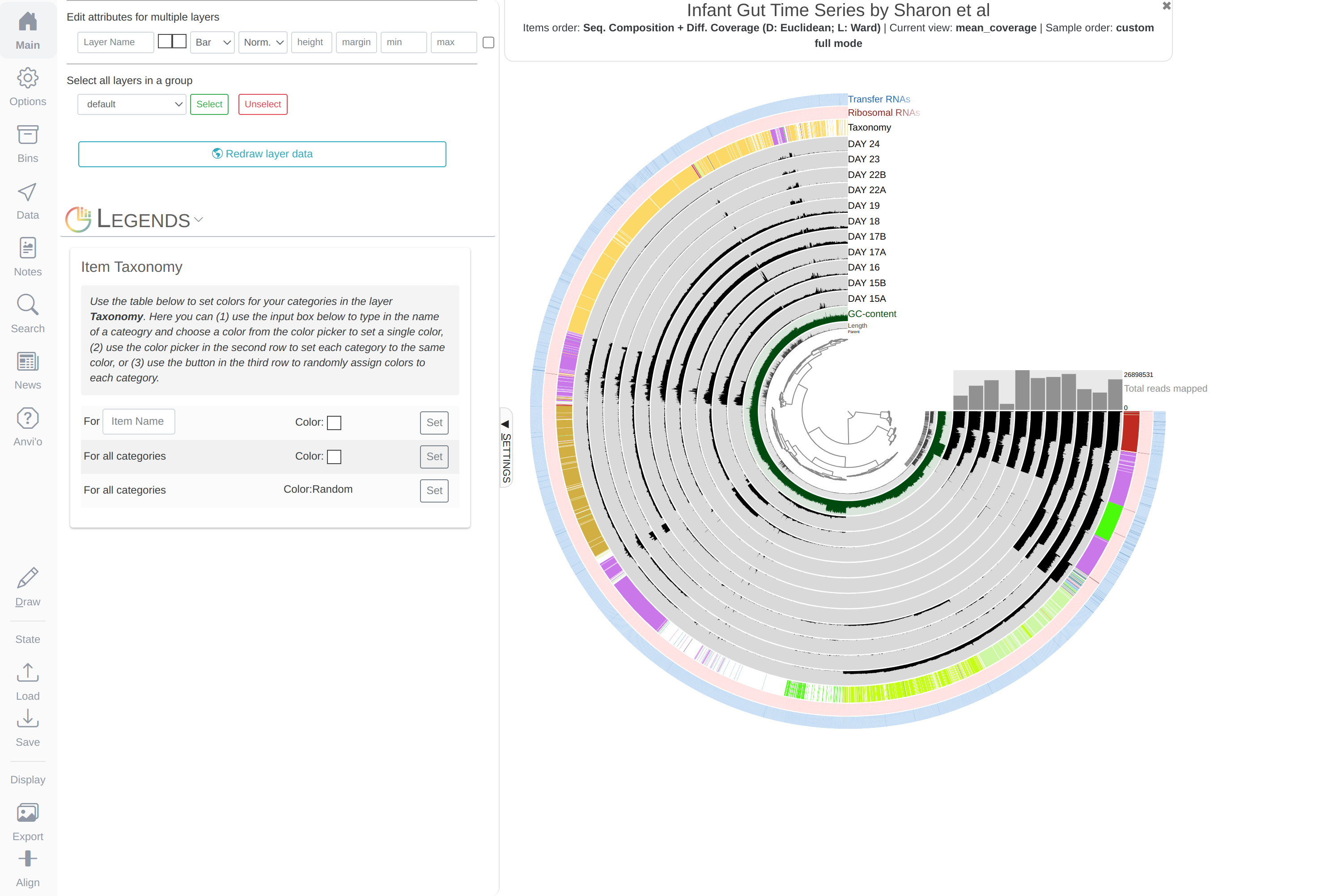

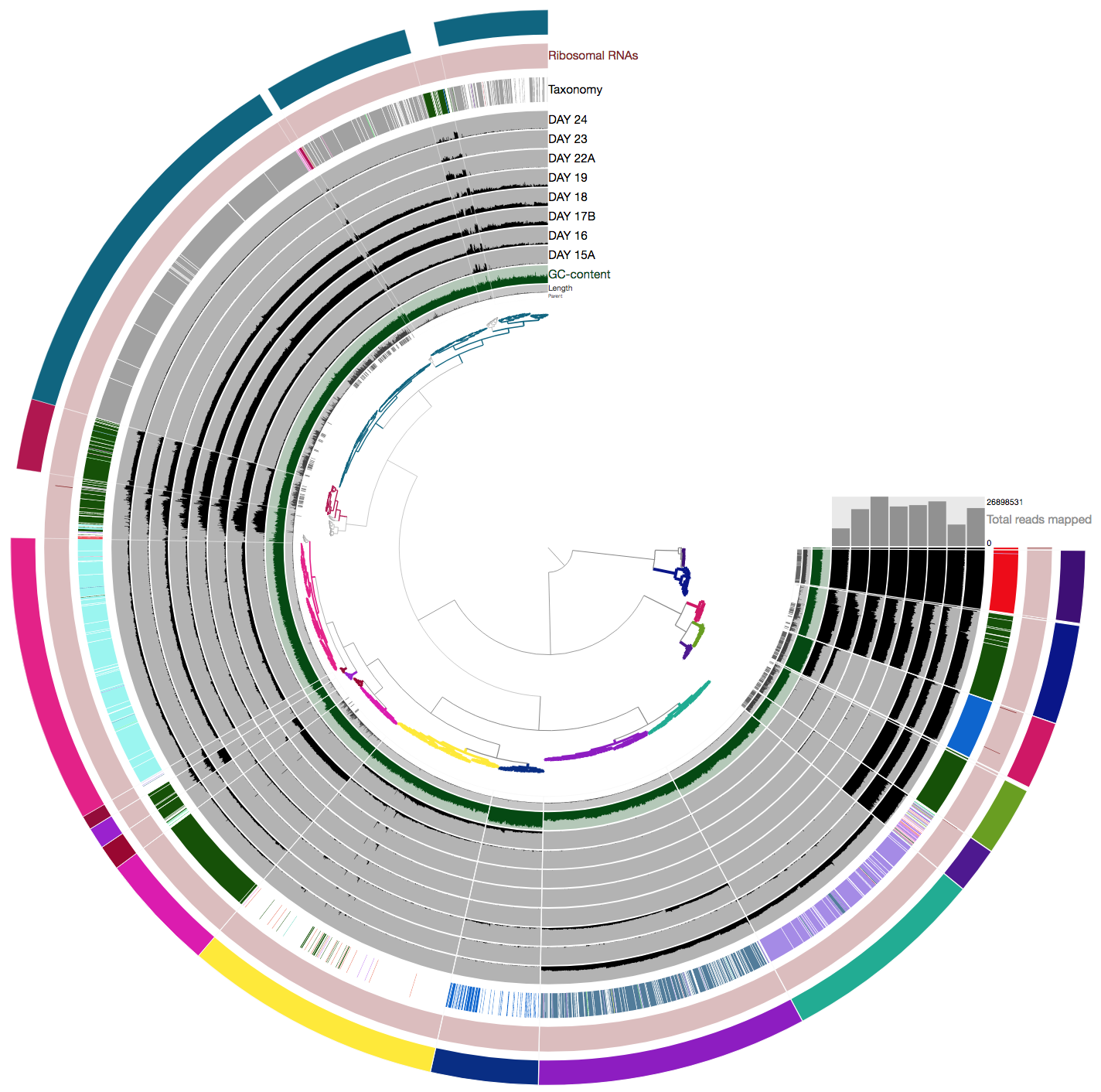

Using the files in the data-pack directory, let’s take a first look at the merged profile database for the infant gut dataset metagenome. If you copy-paste this to your terminal:

anvi-interactive -p PROFILE.db -c CONTIGS.db

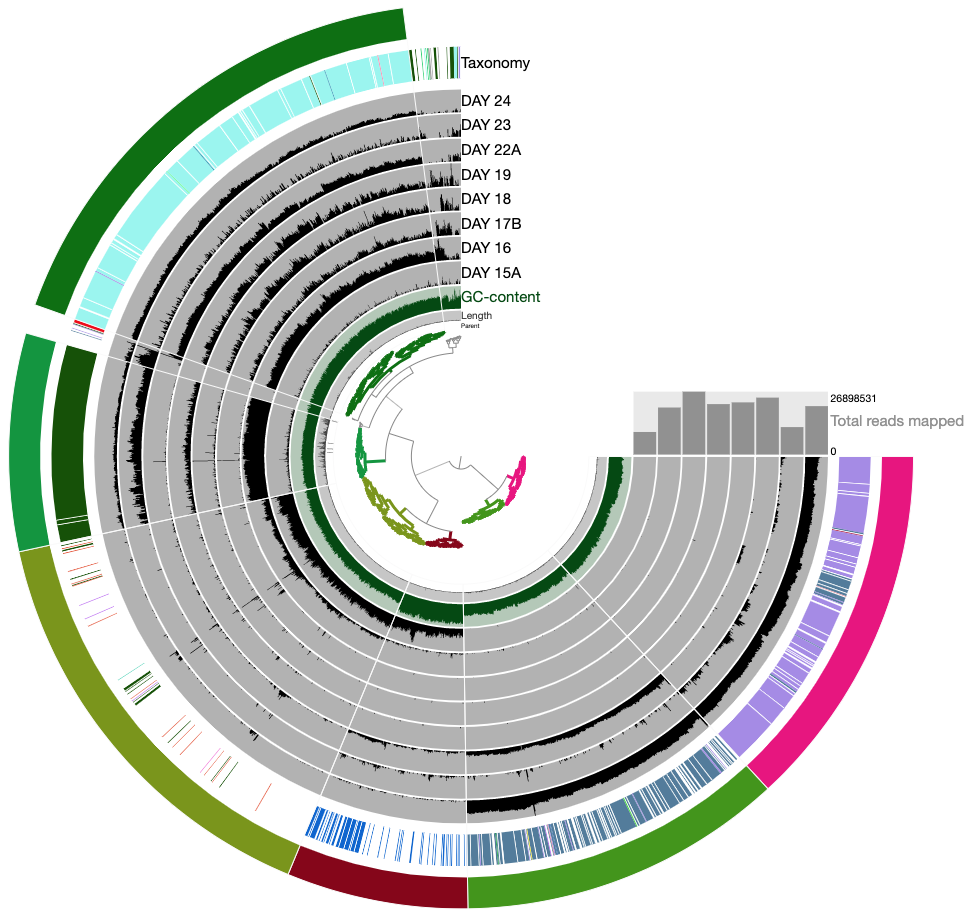

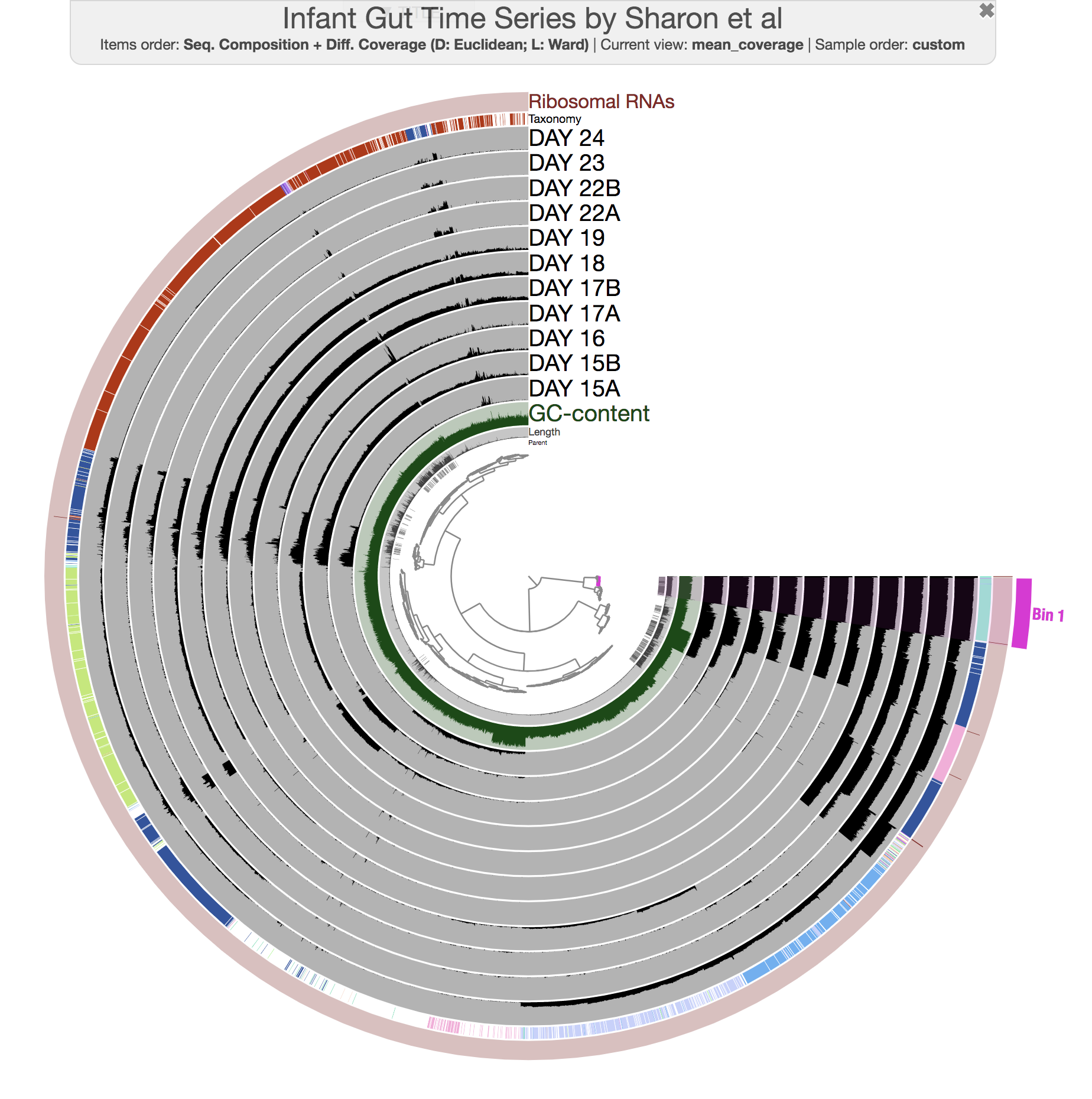

The anvi’o interactive interface should welcome you with this display (after you click “draw”):

When it is time to type other commands, you can close the window, go back to the terminal and press CTRL + C to kill the server.

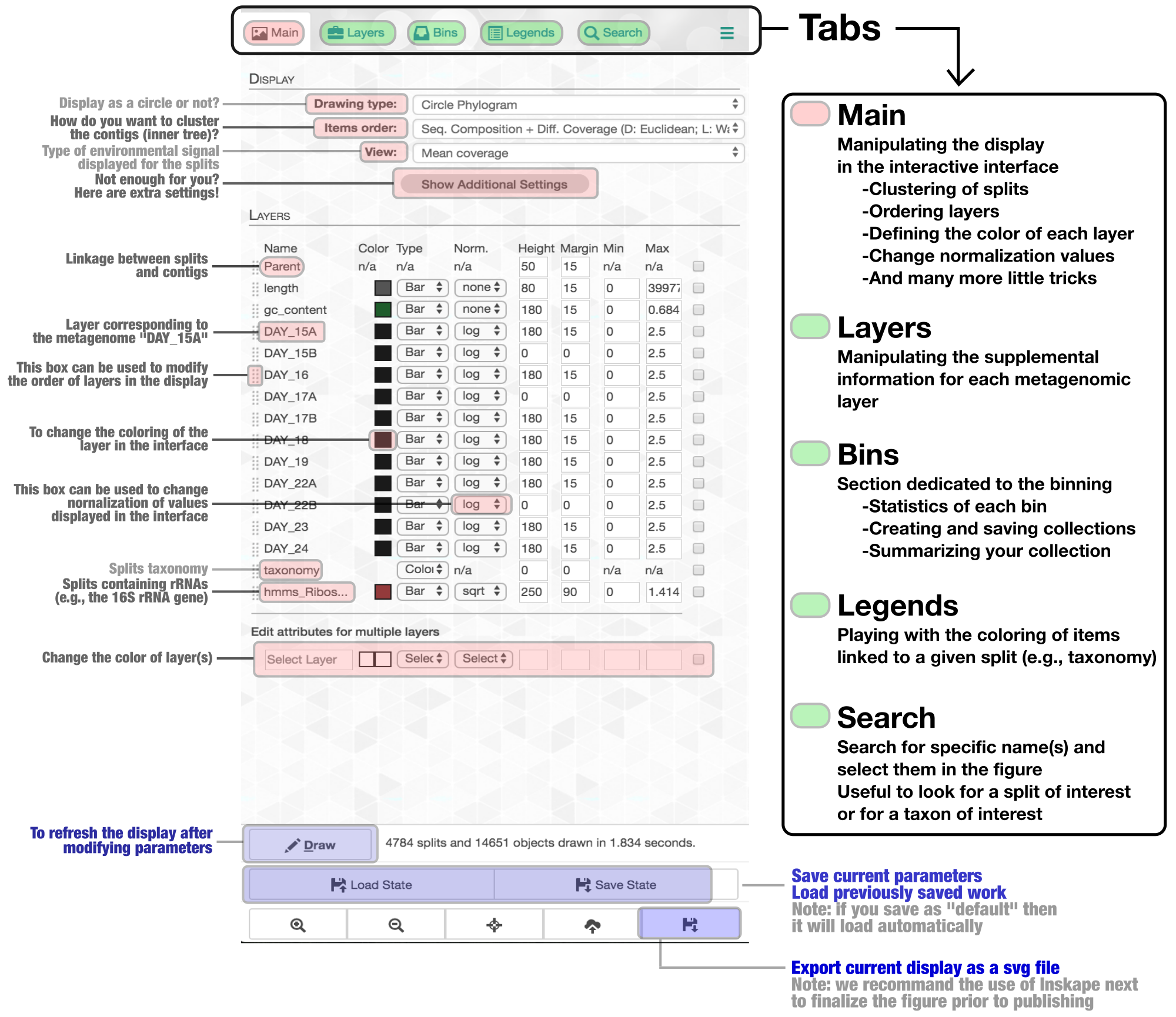

Show/hide Tom's description of the metagenomic binning-related features of the anvi'o interactive interface

The interactive interface of anvi’o can be quite overwhelming. This particular box, in addition to the interactive interface tutorial, attempts to give you insights into the features of the interactive interface relevant to metagenomic binning.

First of all, each leaf in the cerntral dendrogram describes an individual contig. Contigs that were fragmented into multiple splits due to their extensive length can be identified in the Parent layer. The grey layers after the GC-Content display mean coverage values (i.e., the environmental signal) of each contig/split across the 11 metagenomes. Finally, you can click on the MOUSE box on the right side of the interface to explore numerical and alphabetic values in more details across the display.

Once the interactive interface is up and running, you can start binning:

Make contig selections by hovering your mouse over the tree in the center of the anvi’o figure. To add the highlighted selection to your current bin, left click. To remove the highlighted selection from your current bin, right click. To create a new bin, click “New bin” under the Bins tab in Settings. To change to a different bin, click the blue dot next to the bin name you’re interested in.

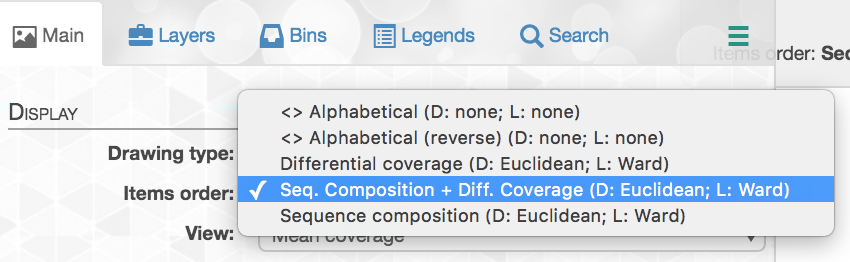

Manipulating the inner dendrogram. By default, anvi’o uses three different clutering approaches to organize contigs in the center dendrogram. Your ability to perform manual binning will be in part determined on your understanding of these clustering strategies. Here is a brief description of each:

-

Differential coverage: clustering based on the differential coverage of contigs across metagenomes. The logic behind this metric is that fragments originating from the same genome should have the same distribution patterns, often different from other genomes.

-

Sequence composition: clustering based on the sequence composition of contigs (by default their tetra-nucleotide frequency). This might seem strange (and in fact, it is to many of us), but fragments originating from the same genome have the tendency to exhibit a similar sequence composition, often different from genomes corresponding to distant lineages.

-

Differential coverage and sequence composition: clustering using the two metrics for optimal binning resolution.

And here is where to change the settings in the Main tab:

Anvi’o by default trusts the assembly; therefore splits from the same contig will remain together (but you can breakup contigs through the interface).

Your selections will not be lost when switching from one organization to another. This particular ability has been very useful to visualize differences between clustering strategies and access the biological relevance of identified bins.

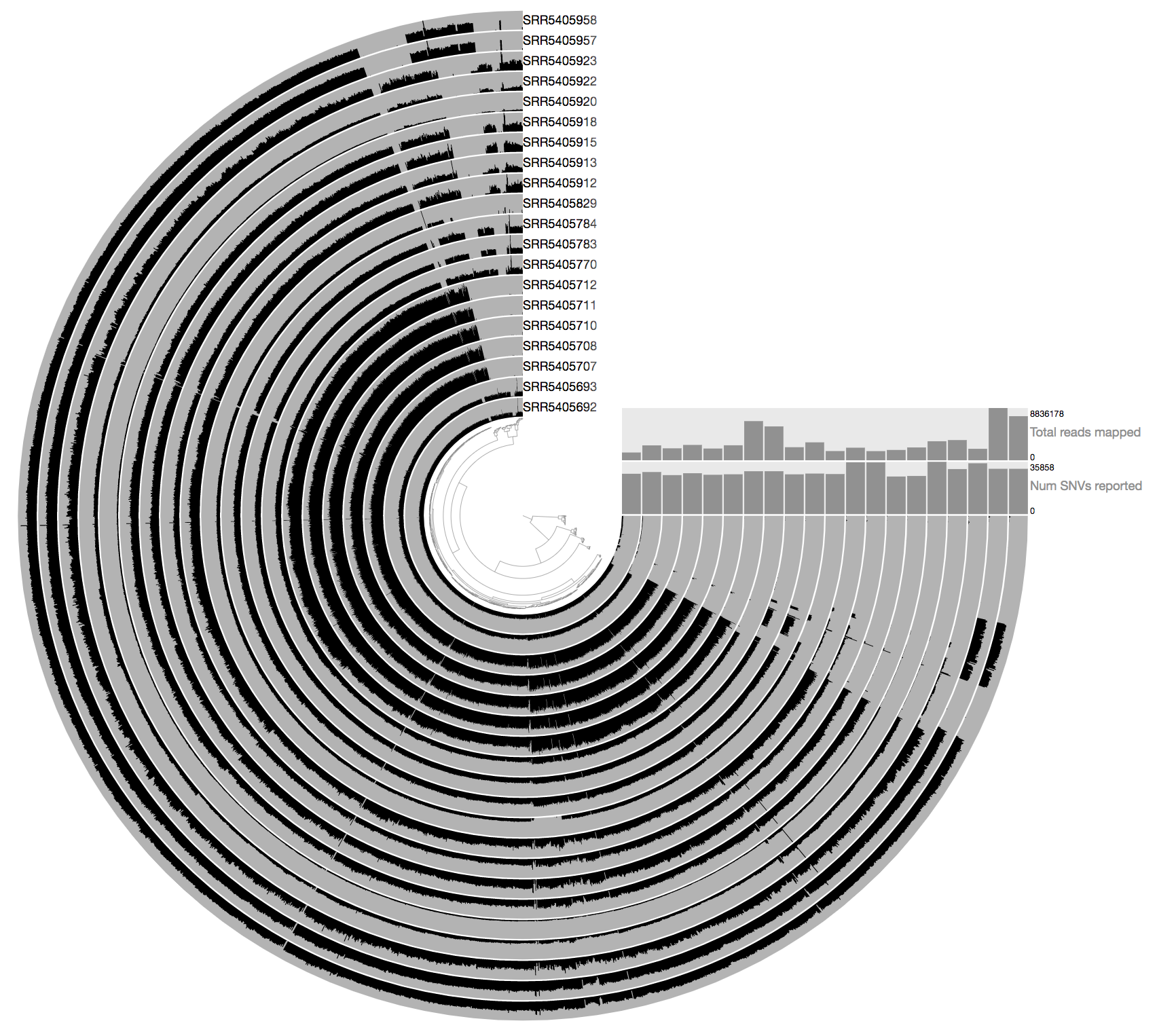

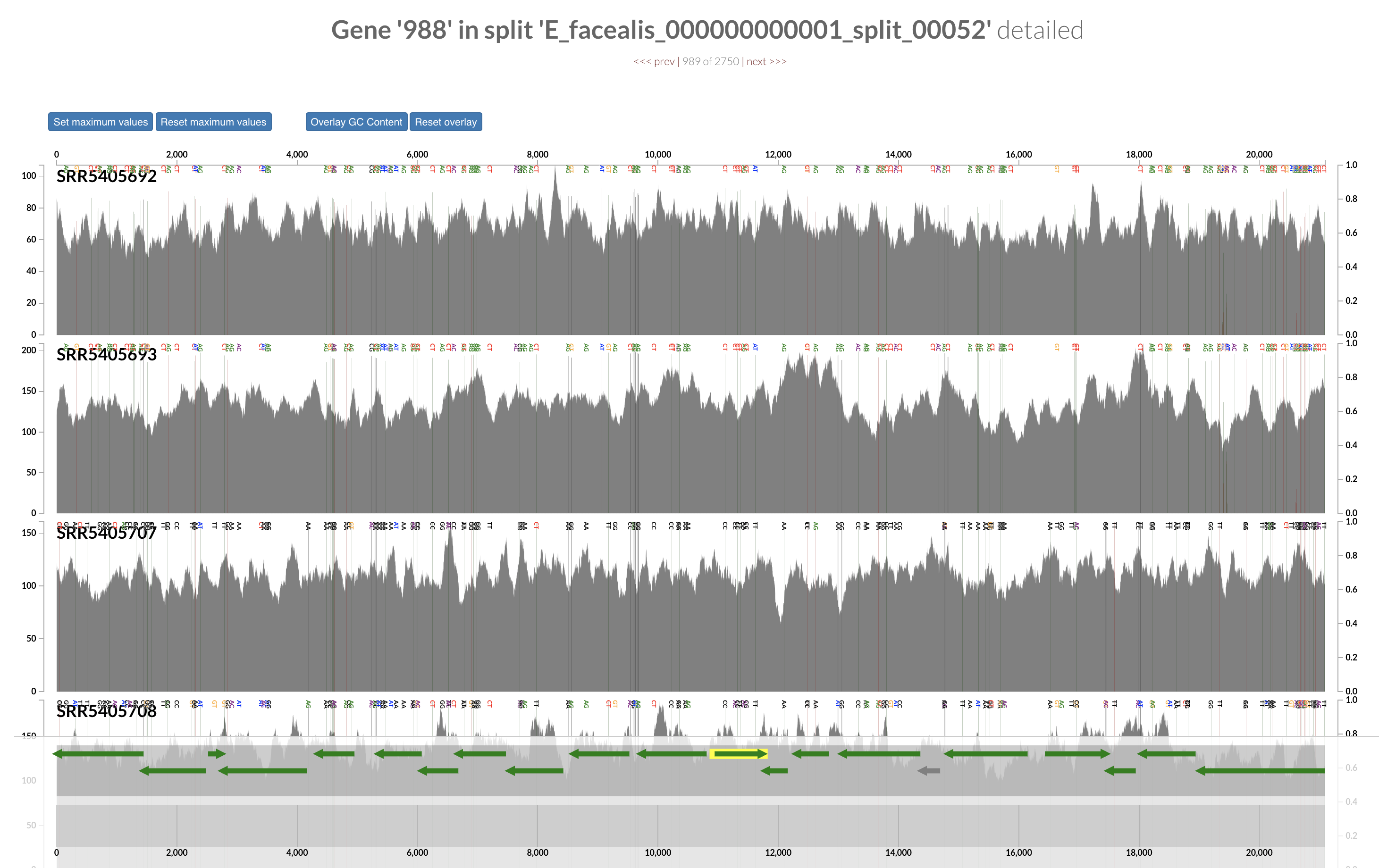

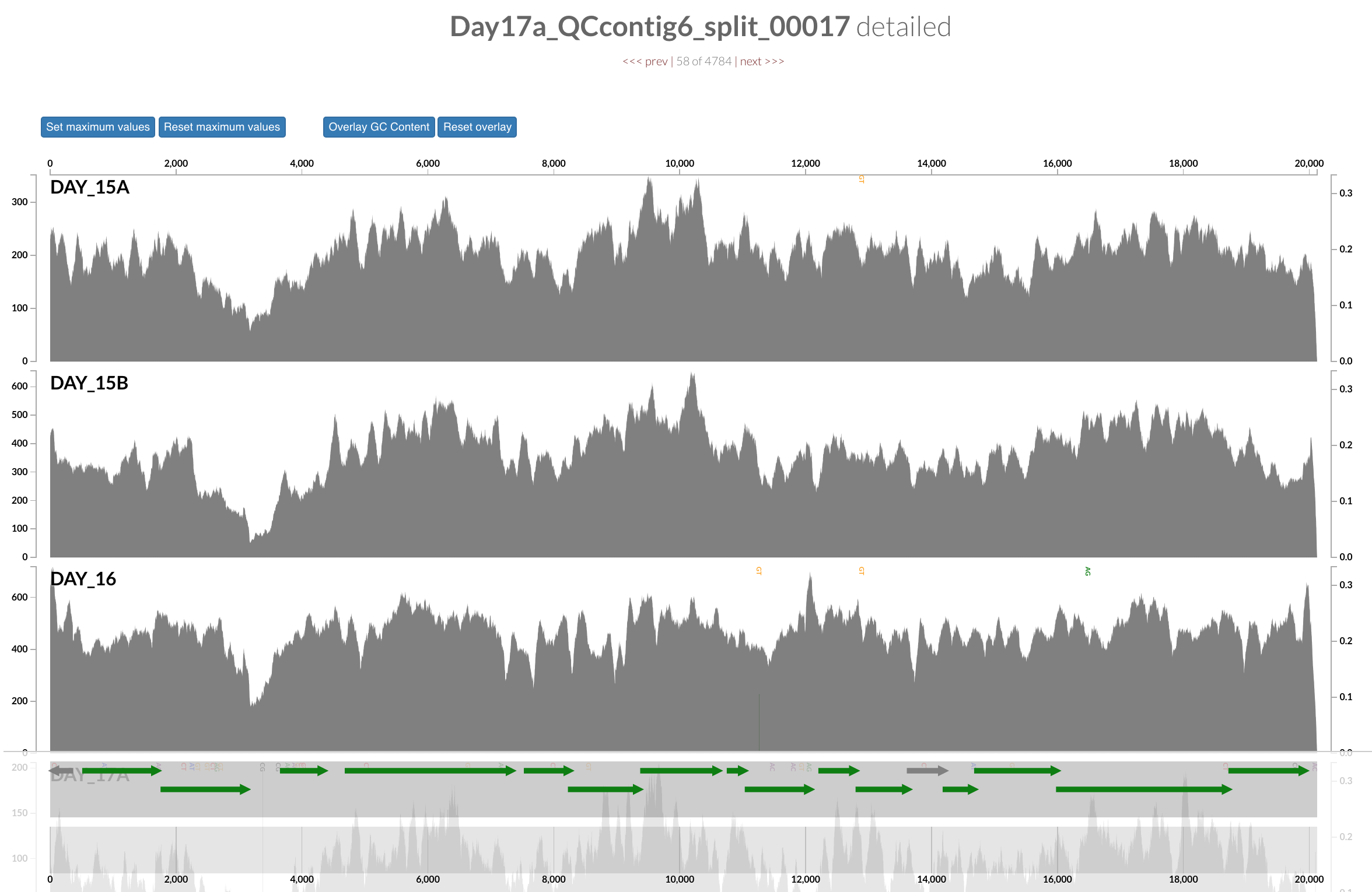

Inspecting individual contigs in the context of their metagenomic signal. In addition to selecting bins based on different clustering strategies, you can explore in more details the coverage of individual contigs across metagenomes: this is the inspection mode. You simply need to right click on any metagenomic layer at the coordinates of a contig of interest, and select “Inspect split”. A new window should pop-up, like in this example:

Note that gene coordinates are displayed at the bottom and their inferred function can be accessed in a simple click. When detected, single nucleotide variants are also described in this display (vertical bars). You can close the inspection mode window when your curiosity has been satisfied.

Importing taxonomy for genes

Anvi’o can work with gene-level taxonomic annotations, but gene-level taxonomy is not useful for anything beyond occasional help with manual binning. Once gene-level taxonomy is added into the contigs database, anvi’o will determine the taxonomy of each contig based on the taxonomic affiliation of genes they describe, and display them in the interface whenever possible.

Centrifuge (code, paper) is one of the options to import taxonomic annotations into an anvi’o contigs database. Centrifuge files for the IGD are already in the directory additional-files/centrifuge-files.

If you import these files into the contigs database the following way,

anvi-import-taxonomy-for-genes -c CONTIGS.db \

-p centrifuge \

-i additional-files/centrifuge-files/centrifuge_report.tsv \

additional-files/centrifuge-files/centrifuge_hits.tsv

And run the interactive interface again,

anvi-interactive -p PROFILE.db -c CONTIGS.db

You will see an additional layer with taxonomy:

In the Layers tab find the Taxonomy layer, set its height to 200, then drag the layer in between DAY24 and hmms_Ribosomal_RNAs, and click Draw again. Then click Save State button, and overwrite the default state. This will make sure anvi’o remembers to make the height of that layer 200px the next time you run the interactive interface!

Inferring taxonomy for metagenomes

So at this point we don’t have any idea about what genomes do we have in this dataset, but anvi’o can make sense of the taxonomic make up of a given metagenome by characterizing taxonomic affiliations of single-copy core genes. The details of this anvi-run-scg-taxonomy is described here in greater detail.

You can take a very quick look at the taxonomic composition of the metagenome through the command line first:

anvi-estimate-scg-taxonomy -c CONTIGS.db \

--metagenome-mode

which should give us this output for the IGD:

╒════════════════════╤════════════════════╤══════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════╕

│ │ percent_identity │ taxonomy │

╞════════════════════╪════════════════════╪══════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════╡

│ Ribosomal_S6_11655 │ 98.9 │ Bacteria / Firmicutes / Bacilli / Staphylococcales / Staphylococcaceae / Staphylococcus / Staphylococcus epidermidis │

├────────────────────┼────────────────────┼──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────┤

│ Ribosomal_S6_12163 │ 98.9 │ Bacteria / Firmicutes / Bacilli / Staphylococcales / Staphylococcaceae / Staphylococcus / Staphylococcus hominis │

├────────────────────┼────────────────────┼──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────┤

│ Ribosomal_S6_15200 │ 98.9 │ Bacteria / Firmicutes / Bacilli / Lactobacillales / Lactobacillaceae / Leuconostoc / Leuconostoc citreum │

├────────────────────┼────────────────────┼──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────┤

│ Ribosomal_S6_25880 │ 98.9 │ Bacteria / Firmicutes / Clostridia / Tissierellales / Helcococcaceae / Finegoldia / Finegoldia magna │

├────────────────────┼────────────────────┼──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────┤

│ Ribosomal_S6_2915 │ 97.9 │ Bacteria / Firmicutes / Bacilli / Lactobacillales / Enterococcaceae / Enterococcus / Enterococcus faecalis │

├────────────────────┼────────────────────┼──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────┤

│ Ribosomal_S6_29818 │ 98.9 │ Bacteria / Firmicutes / Bacilli / Lactobacillales / Streptococcaceae / Streptococcus / Streptococcus oralis │

├────────────────────┼────────────────────┼──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────┤

│ Ribosomal_S6_30904 │ 92.5 │ Bacteria / Firmicutes / Clostridia / Tissierellales / Helcococcaceae / Anaerococcus / Anaerococcus sp002359915 │

├────────────────────┼────────────────────┼──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────┤

│ Ribosomal_S6_4484 │ 98.9 │ Bacteria / Firmicutes / Clostridia / Tissierellales / Peptoniphilaceae / Peptoniphilus / Peptoniphilus rhinitidis │

├────────────────────┼────────────────────┼──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────┤

│ Ribosomal_S6_6421 │ 100 │ Bacteria / Actinobacteriota / Actinobacteria / Propionibacteriales / Propionibacteriaceae / Cutibacterium / Cutibacterium avidum │

├────────────────────┼────────────────────┼──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────┤

│ Ribosomal_S6_7660 │ 98.9 │ Bacteria / Firmicutes / Bacilli / Staphylococcales / Staphylococcaceae / Staphylococcus / │

╘════════════════════╧════════════════════╧══════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════════╛

Good, but could have been better. Why?

Pro tip: we have a profile database, what does it mean and how it could improve the information we see here?

Making use of our profile database the following way, will give us a little more information about our dataset:

anvi-estimate-scg-taxonomy -c CONTIGS.db \

-p PROFILE.db \

--metagenome-mode \

--compute-scg-coverages

which should give us the following output:

Taxa in metagenome "Infant Gut Contigs from Sharon et al."

===============================================

╒════════════════════╤════════════════════╤════════════════════════════════╤═══════════╤═══════════╤══════════╤═══════════╤═══════════╤══════════════╕

│ │ percent_identity │ taxonomy │ DAY_15A │ DAY_15B │ DAY_16 │ DAY_17A │ DAY_17B │ ... 6 more │

╞════════════════════╪════════════════════╪════════════════════════════════╪═══════════╪═══════════╪══════════╪═══════════╪═══════════╪══════════════╡

│ Ribosomal_S6_2915 │ 97.9 │ (s) Enterococcus faecalis │ 372.512 │ 699.853 │ 663.241 │ 186.34 │ 1149.6 │ ... 6 more │

├────────────────────┼────────────────────┼────────────────────────────────┼───────────┼───────────┼──────────┼───────────┼───────────┼──────────────┤

│ Ribosomal_S6_11655 │ 98.9 │ (s) Staphylococcus epidermidis │ 112.694 │ 172.478 │ 147.304 │ 23.3901 │ 140.769 │ ... 6 more │

├────────────────────┼────────────────────┼────────────────────────────────┼───────────┼───────────┼──────────┼───────────┼───────────┼──────────────┤

│ Ribosomal_S6_4484 │ 98.9 │ (s) Peptoniphilus lacydonensis │ 0 │ 0 │ 0 │ 0 │ 0 │ ... 6 more │

├────────────────────┼────────────────────┼────────────────────────────────┼───────────┼───────────┼──────────┼───────────┼───────────┼──────────────┤

│ Ribosomal_S6_7660 │ 98.9 │ (g) Staphylococcus │ 0 │ 0 │ 0 │ 0 │ 0 │ ... 6 more │

├────────────────────┼────────────────────┼────────────────────────────────┼───────────┼───────────┼──────────┼───────────┼───────────┼──────────────┤

│ Ribosomal_S6_6421 │ 100 │ (s) Cutibacterium avidum │ 17.8935 │ 5.87368 │ 4.79909 │ 0 │ 0 │ ... 6 more │

├────────────────────┼────────────────────┼────────────────────────────────┼───────────┼───────────┼──────────┼───────────┼───────────┼──────────────┤

│ Ribosomal_S6_12163 │ 98.9 │ (s) Staphylococcus hominis │ 2.39322 │ 22.5447 │ 13.2806 │ 0 │ 9.94853 │ ... 6 more │

├────────────────────┼────────────────────┼────────────────────────────────┼───────────┼───────────┼──────────┼───────────┼───────────┼──────────────┤

│ Ribosomal_S6_15200 │ 98.9 │ (s) Leuconostoc citreum │ 0 │ 0 │ 1.86532 │ 0 │ 1.6936 │ ... 6 more │

├────────────────────┼────────────────────┼────────────────────────────────┼───────────┼───────────┼──────────┼───────────┼───────────┼──────────────┤

│ Ribosomal_S6_29818 │ 98.9 │ (s) Streptococcus oralis │ 0 │ 0 │ 0 │ 0 │ 0 │ ... 6 more │

├────────────────────┼────────────────────┼────────────────────────────────┼───────────┼───────────┼──────────┼───────────┼───────────┼──────────────┤

│ Ribosomal_S6_25880 │ 98.9 │ (s) Finegoldia magna │ 0 │ 0 │ 0 │ 0 │ 0 │ ... 6 more │

├────────────────────┼────────────────────┼────────────────────────────────┼───────────┼───────────┼──────────┼───────────┼───────────┼──────────────┤

│ Ribosomal_S6_30904 │ 98.1 │ (s) Anaerococcus nagyae │ 0.528958 │ 0 │ 0.14157 │ 0 │ 0 │ ... 6 more │

╘════════════════════╧════════════════════╧════════════════════════════════╧═══════════╧═══════════╧══════════╧═══════════╧═══════════╧══════════════╛

These look like information that would have been useful to have in front of us in our interactive interface. Luckily, anvi’o can add these taxonomic insights into a given profile database, if you change the previous command just a bit:

anvi-estimate-scg-taxonomy -c CONTIGS.db \

-p PROFILE.db \

--metagenome-mode \

--compute-scg-coverages \

--update-profile-db-with-taxonomy

Tadaa. Now let’s take another look at our interactive interface and find the additional data for our layers:

anvi-interactive -c CONTIGS.db \

-p PROFILE.db

At this point we have an overall idea about the make up of this metagenome, but we don’t have any genomes from it. The following sections will cover some of multiple ways to do this.

Manual identification of genomes in the Infant Gut Dataset

Let’s first talk about the signal that leads to this specific organization of contigs we see in our display, and its biological implications.

Next, we can perform a round of manual binning. This should take about 10 minutes.

A few tips for binning:

- You do not have to bin all contigs. Instead, try to identify bins corresponding to an actual genome. Those will have relatively high completion values. The low-completion or no-completion bins in metagenomes might represent viruses, plasmids, or other interesting genetic elements, but this tutorial will ignore them.

- Please try to avoid bins with redundancy >10%. Those likely contain contaminants.

- You can increase the inner tree radius (e.g., 5,000) for a better binning experience in the

Maintab (additional settings) - You can select the option

show gridin theMaintab (additional settings) for a better demarcation of identified bins

Here is an example of 16 bins we identified for comparison AFTER you performed your own binning:

Please save your bins as a collection. You can give your collection any name, but if you call it default, anvi’o will treat it differently.

In the anvi’o lingo, a collection is something that describes one or more bins, each of which describe one or more contigs.

If you identified near-complete genomes, then congratulations, you have characterized genomic contents of microbial populations de novo.

Summarizing the binning results

Why do we do binning? Because we are interested in making sense of our metagenomes in the context of genomes we have recovered through binning. Understanding the distribution patterns of the genomes we have in a collection in a quantitative fashion, or getting back a table of function names found in each one of them, or even summarizing our bins as distinct FASTA files are critical for next steps of every binning analysis. After all, binning is a boring detail before you start doing your science.

To ensure that you have everything you need to continue working with the outcomes of your binning effort outside of anvi’o, we have a program called anvi-summarize. It is possible to summarize any collection stored in an anvi’o profile database through this program. The result is a static HTML page that can be viewed on any computer.

For the lazy

If you don’t want to do your own binning and still be able to continue with the commands below, you can import some binning results this way:

anvi-import-collection additional-files/collections/merens.txt \

--bins-info additional-files/collections/merens-info.txt \

-p PROFILE.db \

-c CONTIGS.db \

-C default

Let’s summarize the collection you have just created:

anvi-summarize -p PROFILE.db \

-c CONTIGS.db \

-C default \

-o SUMMARY

Once the summary is finished, take a minute to look at its contents.

Renaming bins in your collection (from chaos to order)

As you can see from the summary file, at this point bin names are random, and we often find it useful to put some order on this front. This becomes an extremely useful strategy especially when the intention is to merge multiple binning efforts later. For this task we use the program anvi-rename-bins:

anvi-rename-bins -p PROFILE.db \

-c CONTIGS.db \

--collection-to-read default \

--collection-to-write MAGs \

--call-MAGs \

--prefix IGD \

--report-file rename-bins-report.txt

With those settings, a new collection MAG will be created in which (1) bins with a completion >70% are identified as MAGs (stands for Metagenome-Assembled Genome), and (2) bins and MAGs are attached the prefix IGD and renamed based on the difference between completion and redundancy.

Now we can use the program anvi-summarize to summarize the new collection:

anvi-summarize -p PROFILE.db \

-c CONTIGS.db \

-C MAGs \

-o SUMMARY_AFTER_RENAME

You can now visualize the results by double-clicking on the index.html file present in the newly created folder SUMMARY_MAGs.

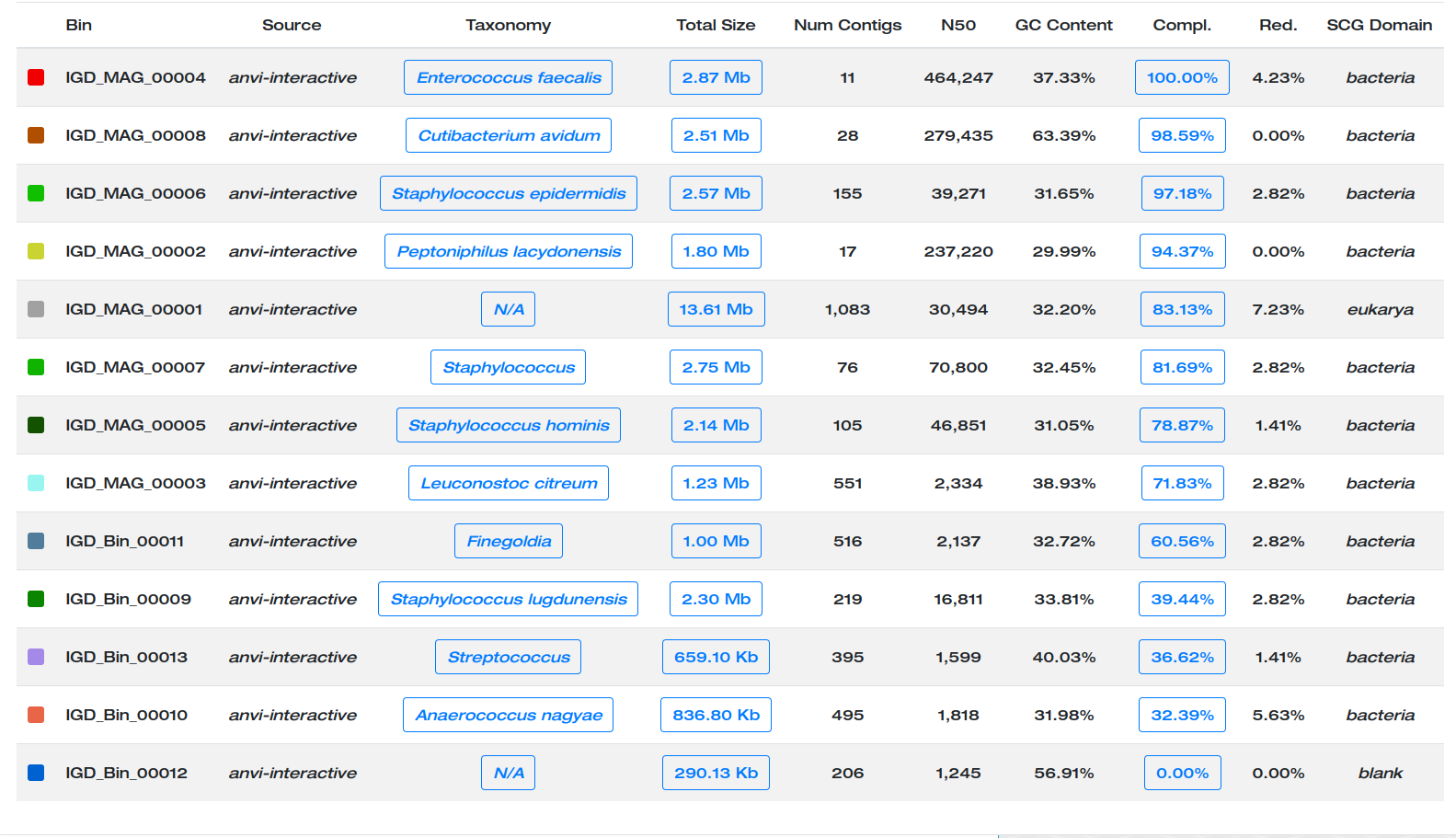

Here are the MAGs we got:

Better.

Refining individual MAGs: the curation step

Why do we need to curate bins? Please see this paper to see how poorly refined bins can influence ecological and evolutionary insights we gain from MAGs. There is also a step-by-step workflow to describe steps of manual curation here.

Why not relying only on single-copy core genes to estimate purity of a genome bin? Let’s think about this altogether. But once we are done with this, please see the relevant section in this study.

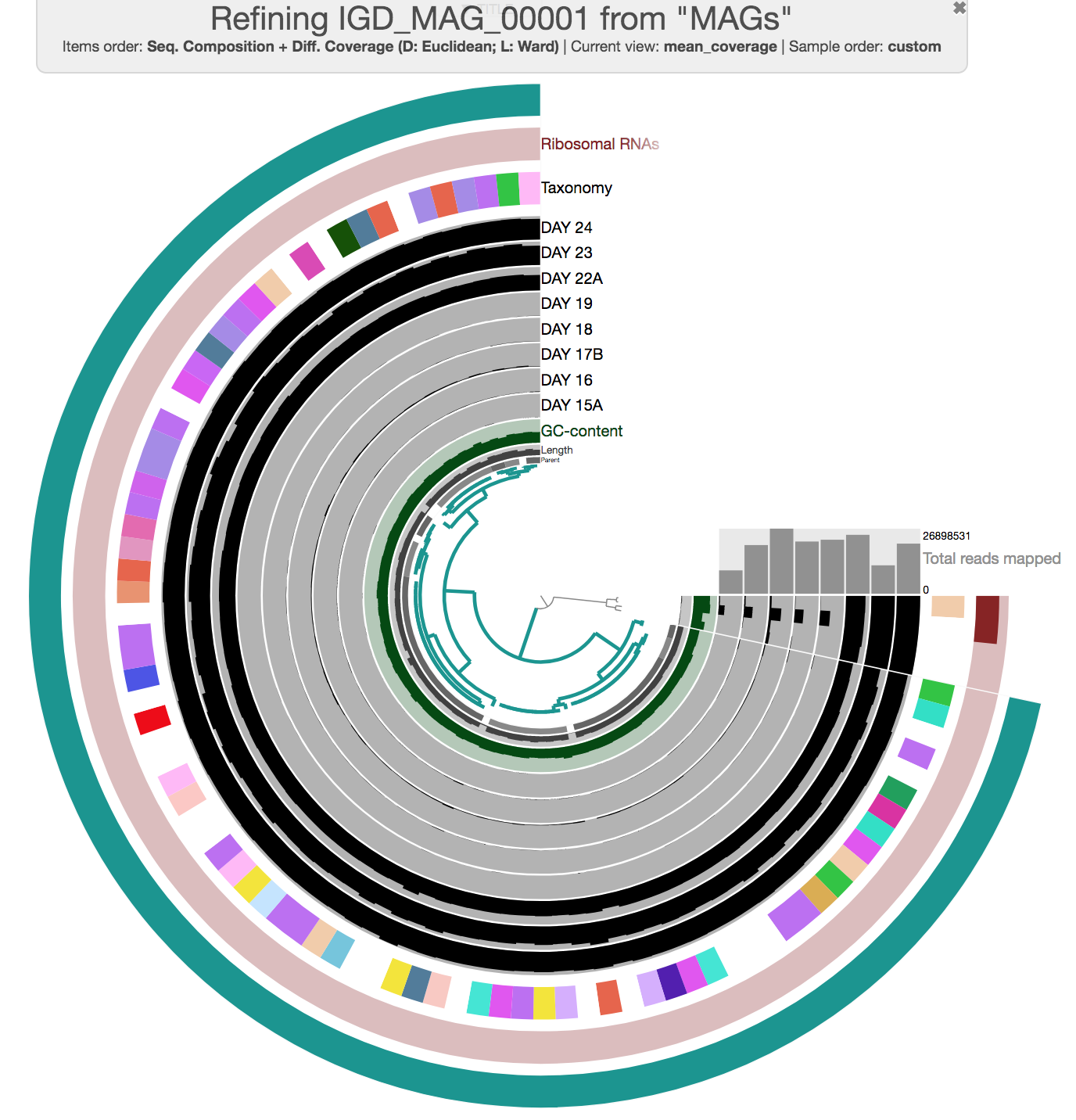

To straighten the quality of the MAGs collection, it is possible to visualize individual bins and if needed, refine them. For this we use the program anvi-refine. For instance, if you were to be interested in refining one of the bins in our current collection, you could run this command:

anvi-refine -p PROFILE.db \

-c CONTIGS.db \

-C MAGs \

-b IGD_MAG_00001

Now the interactive interface only displays contigs from a single bin. During this curation step, one can try different clustering strategies (i.e. by only relying on coverage, or only relying on sequence composition) to identify outliers and investigate carefully whether they may be contaminants. You can select everything, and remove those contigs you don’t want to keep in the bin before using the Bins panel to store your updated set of contigs in the database.

Here is an example of MAG we had to curate (we removed three contigs):

Storing the refined new bin in the database will modify the collection, but you will need to run anvi-summarize if you want the summary output to also be updated.

This is indeed a simple example. But in some cases refining a given MAG can take hours. But this is an extremely critical step of genome-resolved metagenomics studies, especially when important claims are made based on MAGs.

Chapter II: Automatic Binning

Even if you prefer manual binning over automatic binning for the sake of accuracy and control over your data, automatic binning is an unavoidable need due to performance limitations associated with manual binning. The actual purpose of this chapter is to talk about advantages and disadvantages of automatic binning, by comparing multiple binning approaches on the simplest real-world gut metagenome there is: the infant gut dataset. However, while we are going through this, you will also learn about how to incorporate automatic binning results into anvi’o.

The directory additional-files/external-binning-results contains a number of files that describe the binning of contigs in the IGD based on various automatic and manual approaches. These files include (1) outputs from some of the well-known binning algorithms (i.e., GROOPM.txt, MAXBIN.txt, METABAT.txt, BINSANITY_REFINE.txt, MYCC.txt, and CONCOCT.txt), (2) the original binning of this dataset (SHARON_et_al.txt), and the manual binning we performed in the anvi’o paper (MEREN_et_al.txt).

External binning results [FROM AGES AGO]:

The first five files are courtesy of Elaina Graham, who used GroopM (v0.3.5), MetaBat (v0.26.3), MaxBin (v2.1.1), MyCC, and BinSanity (v0.2.1) to bin the IGD. For future references, here are the parameters Elaina used for each approach:

# GroopM v0.3.5 (followed the general workflow on their manual)

groopm parse groopm.db contigs.fa [list of bam files]

groopm core groopm.db -c 1000 -s 10 -b 1000000

groopm recruit groopm.db -c 500 -s 200

groopm extract groopm.db contigs.fa

# MetaBat v0.26.3 (used jgi_summarize_bam_contig_depths to get a depth file from BAM files).

metabat -i contigs.fa -a depth.txt -o bin

# MaxBin v2.1.1

run_MaxBin.pl -contig contigs.fa -out maxbin_IGM -abund_list [list of all coverage files in associated format]

# MyCC (ran via the docker image available here: https://sourceforge.net/projects/sb2nhri/files/MyCC/)

(used jgi_summarize_bam_contig_depths to get depth file from BAM files per the authors suggestion)

MyCC.py contigs.fa 4mer -a depth.txt

# BinSanity + refinement v0.2.1

Binsanity -f . -l contigs.fa -p -10 -c igm.coverage.lognorm

## After bin inspection using CheckM & Anvi'o, INFANT-GUT-ASSEMBLY-bin_18

## was refined using the following parameters

Binsanity-refine -f . -l -p -150 -c igm.coverage.lognorm

CONCOCT results come from the CONCOCT module that was embedded within anvi’o until v6.

Eren et al. results come directly from the collection generated during the study.

Finally, a file corresponding to Sharon et al. results was created by BLAST-searching sequences in bins identified by the authors of the study (see http://ggkbase.berkeley.edu/carrol) to our contigs to have matching names for our assembly.

Now you have the background information about where these files are coming from. Moving on. But just before we continue to move on, let’s remove the default collection from our profile database, if there is one, to avoid any confusion.

You can use the program anvi-show-collections-and-bins to see all collections in your anvi’o profile database:

anvi-show-collections-and-bins -p PROFILE.db

And use this one to remove one that’s called default:

anvi-delete-collection -p PROFILE.db \

-C default

Importing an external binning result

You can create a collection by using the interactive interface (e.g., the default and MAGs collections you just created), or you can import external binning results into your profile database as a collection and see how that collection groups contigs. For instance, let’s import the CONCOCT collection:

anvi-import-collection additional-files/external-binning-results/CONCOCT.txt \

-c CONTIGS.db \

-p PROFILE.db \

-C CONCOCT \

--contigs-mode

you can immediately see what collections are available in a given profile database using the program anvi-show-collections-and-bins (which in this case should show us the collections CONCOCT, and MAGs):

anvi-show-collections-and-bins -p PROFILE.db

You can get a quick idea regarding the estimated completion of bins in a given collection:

anvi-estimate-genome-completeness -p PROFILE.db \

-c CONTIGS.db \

-C CONCOCT

You can also get an idea about their taxonomy:

anvi-estimate-scg-taxonomy -p PROFILE.db \

-c CONTIGS.db \

-C CONCOCT

OK. Let’s run the interactive interface again with the CONCOCT collection:

anvi-interactive -p PROFILE.db \

-c CONTIGS.db \

--collection-autoload CONCOCT

Alternatively you could load the interface without the --collection-autoload flag, and click Bins > Load bin collection > CONCOCT > Load to load the CONCOCT collection.

To turn off text annotation, go to Settings > Options > Bins Selection and then uncheck Show names. You will then see something like this:

So this is how you load and display an external collection. So far so good.

Comparing multiple binning approaches

Since we have all these results from different binning approaches, it clearly would have been interesting to compare them to each other (because benchmarking stuff is often very insightful). But how to do it? The simplest way to do it is to assume a ‘true organization of contigs’, and then investigate every other approach with respect to that.

Here we have multiple independent sources of information we could use. Including (1) the organization of contigs based on hierarchical clustering analysis, (2) per-contig taxonomy estimated from the gene-level taxonomic annotations by Centrifuge, and (3) results from the original publication from Sharon et al., in which authors did a very careful job to identify every genome in the dataset (even resolving the Staphylococcus pangenome, which is extremely hard for automatic binning approaches that work with a single co-assembly). So these are the things we can build upon for a modest comparison.

To include binning results in this framework, we could import each collection into the profile database the way we imported CONCOCT. But unfortunately at any given time there could only be one collection that can be displayed in the interface. Luckily there are other things we can do. For instance, as a workaround, we can merge all binning results into a single file, and use that file as an ‘additional data file’ to visualize them in the interactive interface.

Anvi’o has a script called anvi-script-merge-collections to merge multiple files from external binning results into a single merged file (don’t ask why):

anvi-script-merge-collections -c CONTIGS.db \

-i additional-files/external-binning-results/*.txt \

-o collections.tsv

If you take a look at this file, you will realize that it has a very simple format:

head collections.tsv | column -t

contig BINSANITY_REFINE CONCOCT GROOPM MAXBIN METABAT MYCC SHARON_et_al

Day17a_QCcontig1000_split_00001 INFANT-GUT-ASSEMBLY-bin_19.fna Bin_4 db_bin_11 maxbin.008 metabat_igm.unbinned Cluster.5.fasta Finegoldia_magna

Day17a_QCcontig1001_split_00001 INFANT-GUT-ASSEMBLY-bin_6.fna Bin_7 db_bin_46 maxbin.006 metabat_igm.unbinned Cluster.3.fasta Staphylococcus_epidermidis_virus_014

Day17a_QCcontig1002_split_00001 INFANT-GUT-ASSEMBLY-bin_19.fna Bin_4 db_bin_11 maxbin.007 metabat_igm.unbinned Cluster.5.fasta Finegoldia_magna

Day17a_QCcontig1003_split_00001 INFANT-GUT-ASSEMBLY-bin_14.fna Bin_2 db_bin_1 maxbin.009 metabat_igm.7 Cluster.12.fasta

Day17a_QCcontig1004_split_00001 INFANT-GUT-ASSEMBLY-bin_16.fna Bin_3 db_bin_8 maxbin.008 metabat_igm.10 Cluster.14.fasta

Day17a_QCcontig1005_split_00001 INFANT-GUT-ASSEMBLY-bin_13.fna Bin_5 db_bin_47 maxbin.007 metabat_igm.unbinned Cluster.3.fasta Staphylococcus_epidermidis_viruses

Day17a_QCcontig1006_split_00001 INFANT-GUT-ASSEMBLY-bin_16.fna Bin_3 db_bin_8 maxbin.008 metabat_igm.10 Cluster.14.fasta Leuconostoc_citreum

Day17a_QCcontig1007_split_00001 INFANT-GUT-ASSEMBLY-bin_16.fna Bin_3 db_bin_8 maxbin.008 metabat_igm.10 Cluster.14.fasta

Day17a_QCcontig1008_split_00001 INFANT-GUT-ASSEMBLY-bin_14.fna Bin_2 db_bin_1 maxbin.009 metabat_igm.7 Cluster.8.fasta Candida_albcans

Good. Now you can run the interactive interface to display all bins in all collections stored in collections.tsv as additional layers:

anvi-interactive -p PROFILE.db \

-c CONTIGS.db \

-A collections.tsv

Dealing with additional data tables like a pro

As you can see, -A parameter allows us to add anything to the interface as additional layers as far as the first column of that data matches to our item names. We could alternatively import this additional information into our profile database, and the way to do it is through the use of additional data tables subsystem of anvi’o. There is much more information on how to deal with additional data of all sorts here, but basically we can use the program anvi-import-misc-data to import this data into our database:

anvi-import-misc-data collections.tsv \

-p PROFILE.db \

-t items

Now you can run the interactive interface without the collections.tsv, and you would get the exact same display, since the additional data now would be read from the additional data tables:

anvi-interactive -p PROFILE.db -c CONTIGS.db

But for now, we will continue without these tables, so let’s delete them from the profile database:

anvi-delete-misc-data -p PROFILE.db \

-t items \

--just-do-it

Fine.

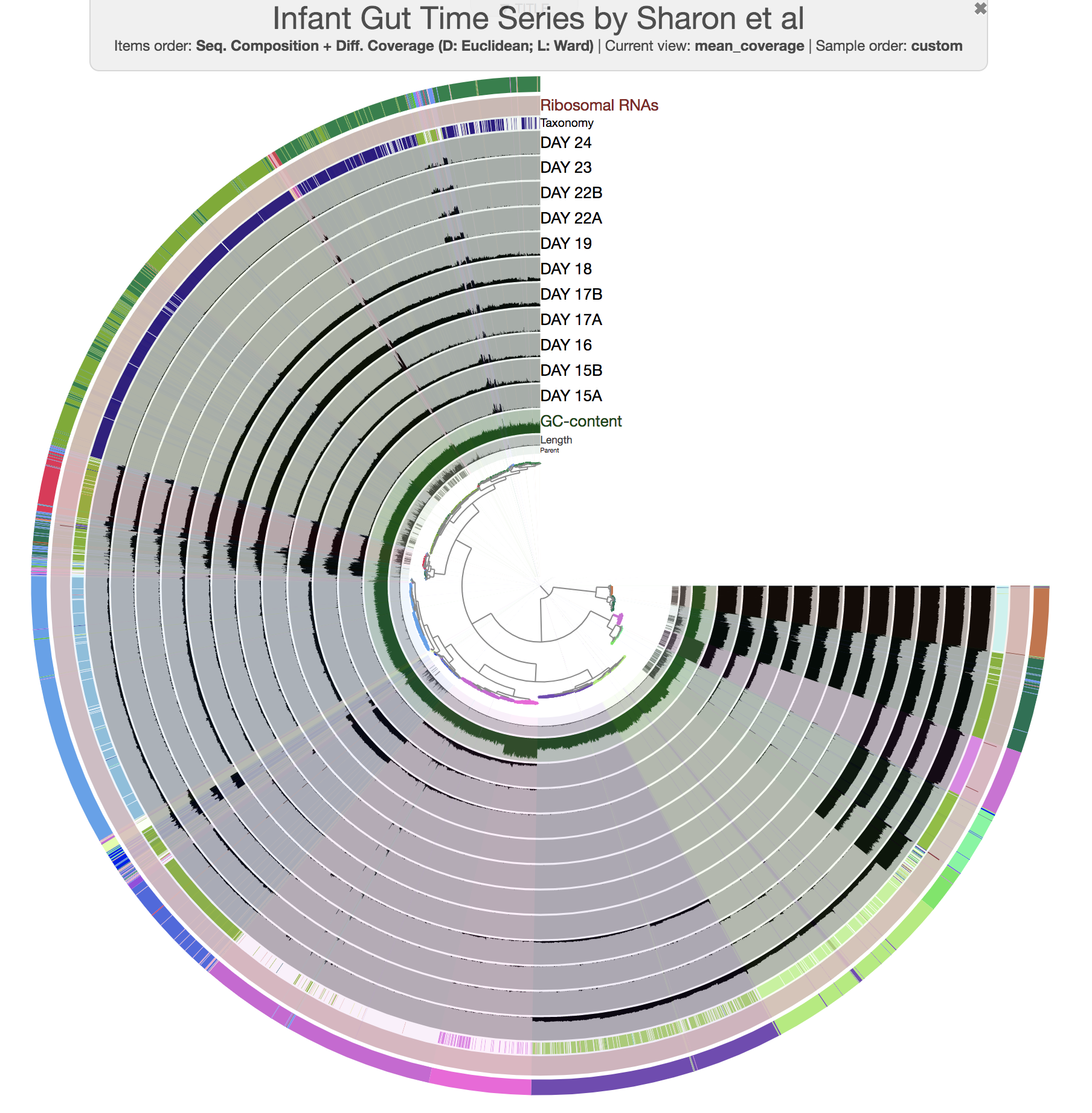

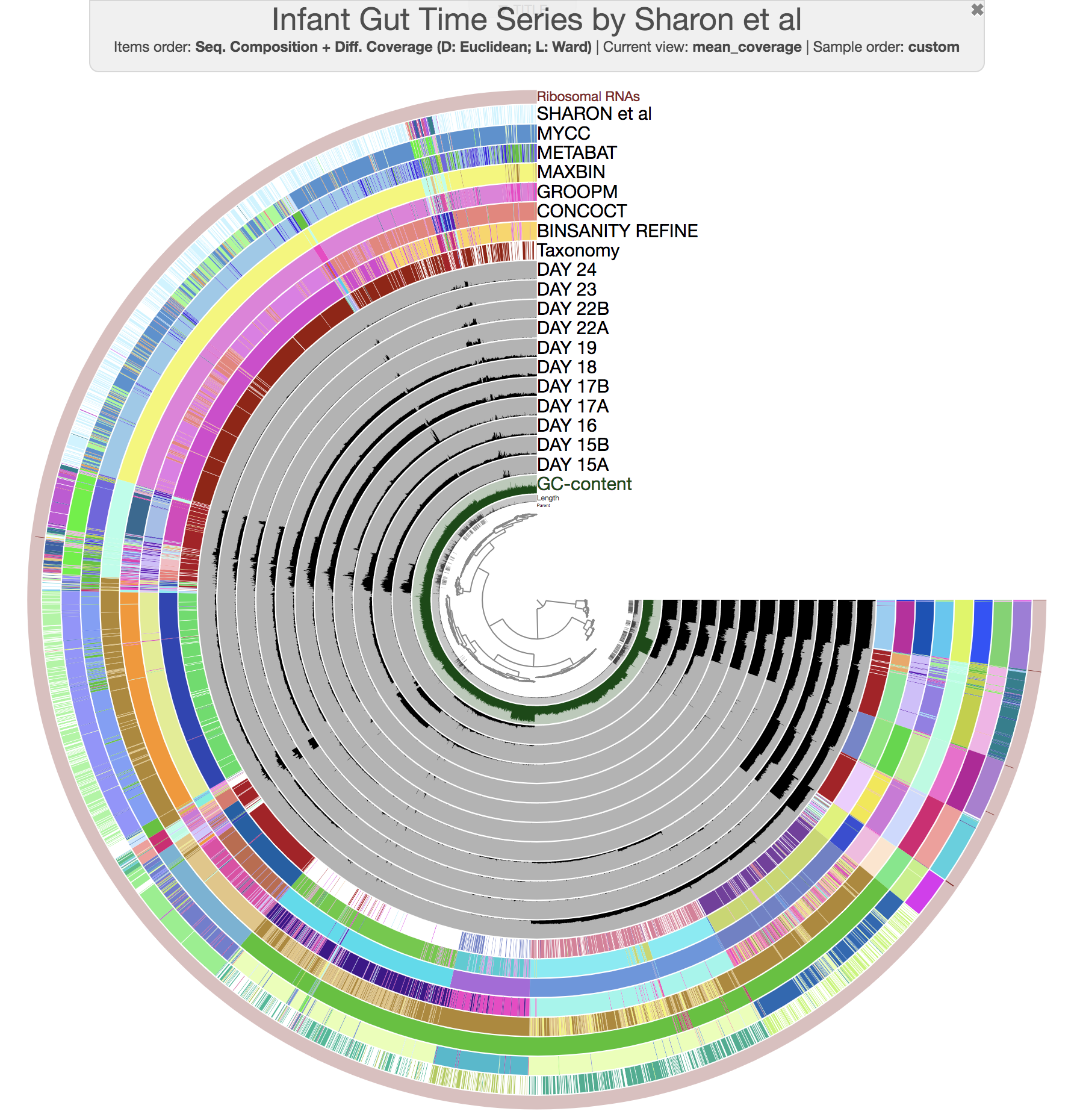

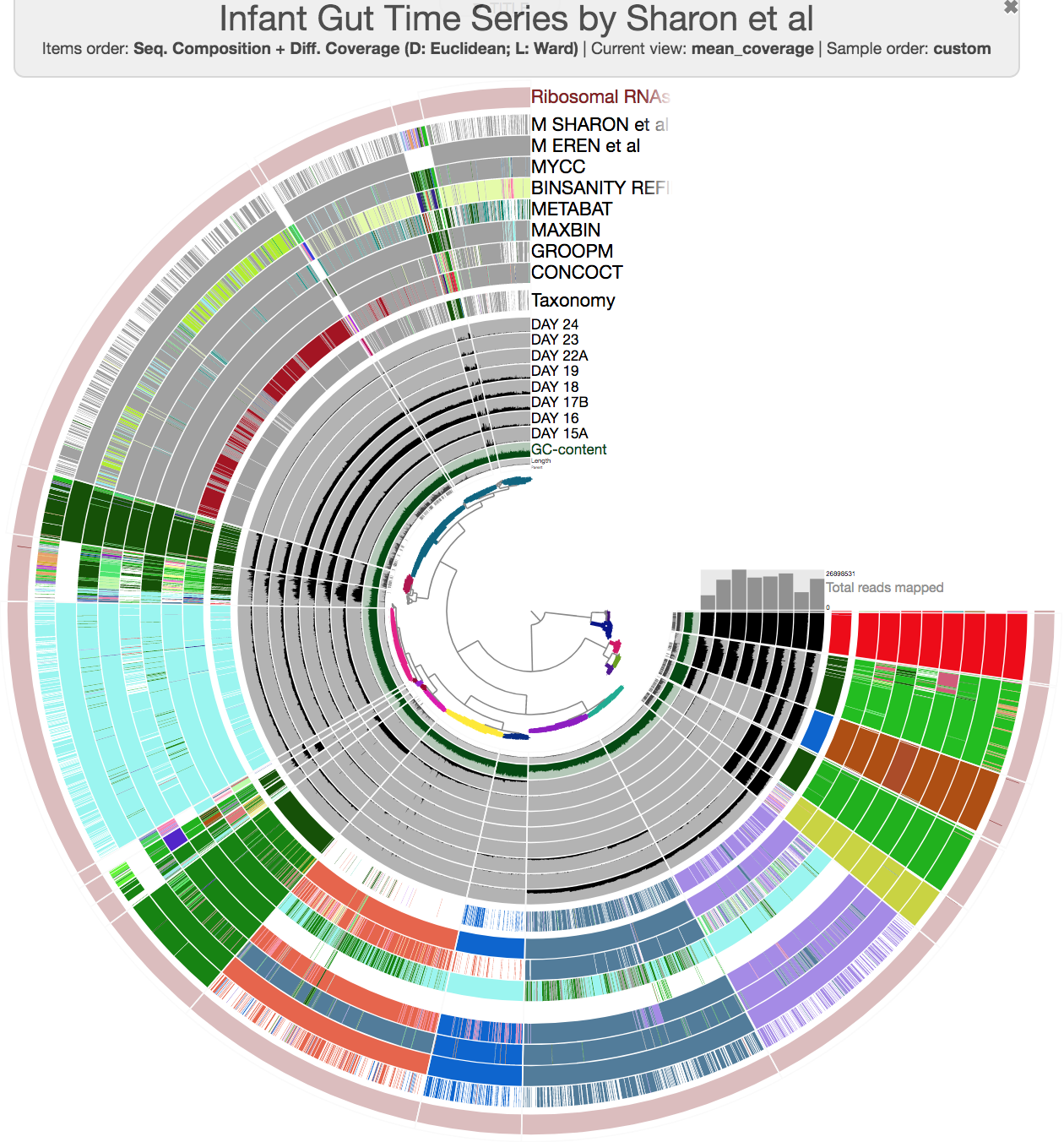

At this point you should be seeing a display similar to this (after setting the height of each additional layer to 200px):

The legends for each of the bin collections are available in the Legends tab of Settings. To visually emphasize relationships between bins, you can change the color of each bin manually by clicking on the colored boxes in the legends. Or, if you’re not a masochist, you can use the program anvi-import-state to import an anvi’o state we have created for you:

anvi-import-state --state additional-files/state-files/state-merged.json \

--name default \

-p PROFILE.db

and run the interactive interface again with the same command line,

anvi-interactive -p PROFILE.db \

-c CONTIGS.db \

-A collections.tsv

this time you should get this display:

So far so good?

Now we can discuss about different approaches of automatic binning.

Please note that the algorithms we have used here may have been improved since the time we did these analyses, therefore please don’t make any decisions about their performance or efficacy based on what you are seeing here.

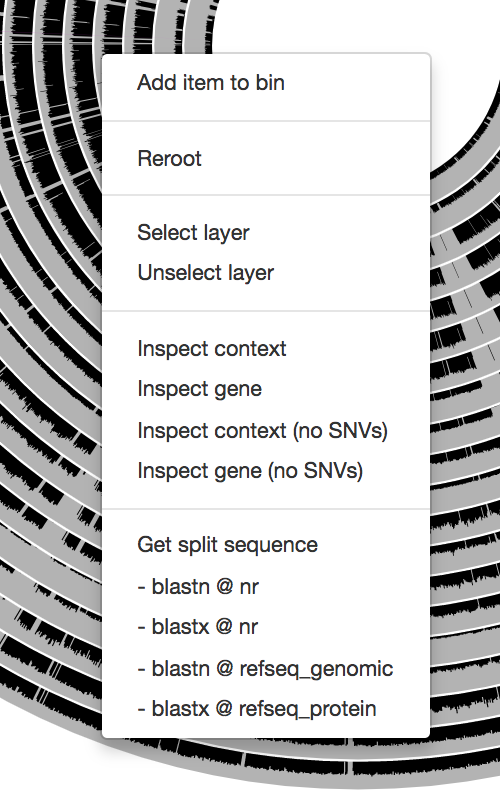

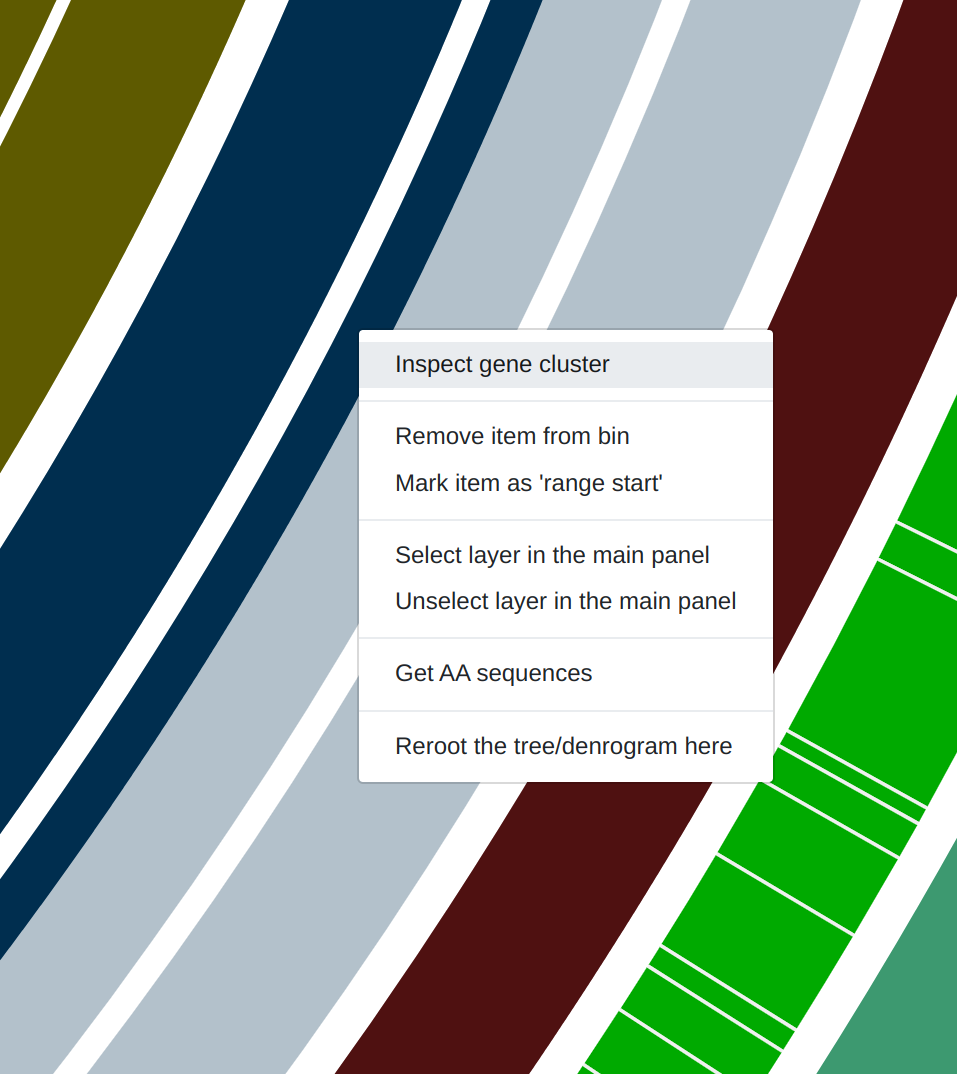

Just a reminder, once you have the interactive interface in front of you, you can in fact investigate the taxonomy of contigs by BLASTing them against various NCBI collections using the right-click menu to have a second opinion about what do public databases think they are:

Mouse section moved under Settings > Data, after version 7.0.0.

We recently have added an option to quickly run them on BIGSI. Sometimes it takes a split second, sometimes (especially when you are in France) it takes minutes. So, no promises, but try it for sure! It is an excellent algorithm.

Manually curating automatic binning outputs

OK. Let’s assume, we didn’t see the interactive interface, and we have no idea about the dataset. We didn’t do any of the things we did up to this point. We just had profiled and merged the IGD, and we did binning of this dataset using MaxBin. Let’s start by importing MaxBin results into the profile database as a collection:

anvi-import-collection additional-files/external-binning-results/MAXBIN.txt \

-c CONTIGS.db \

-p PROFILE.db \

-C MAXBIN \

--contigs-mode

From here, there are two things we can do very quickly. First, we can create a summary of our new collection or we can take a quick look at the completion / redundancy estimates of bins described by this collection from the command line, using anvi-estimate-genome-completeness:

anvi-estimate-genome-completeness -p PROFILE.db \

-c CONTIGS.db \

-C MAXBIN

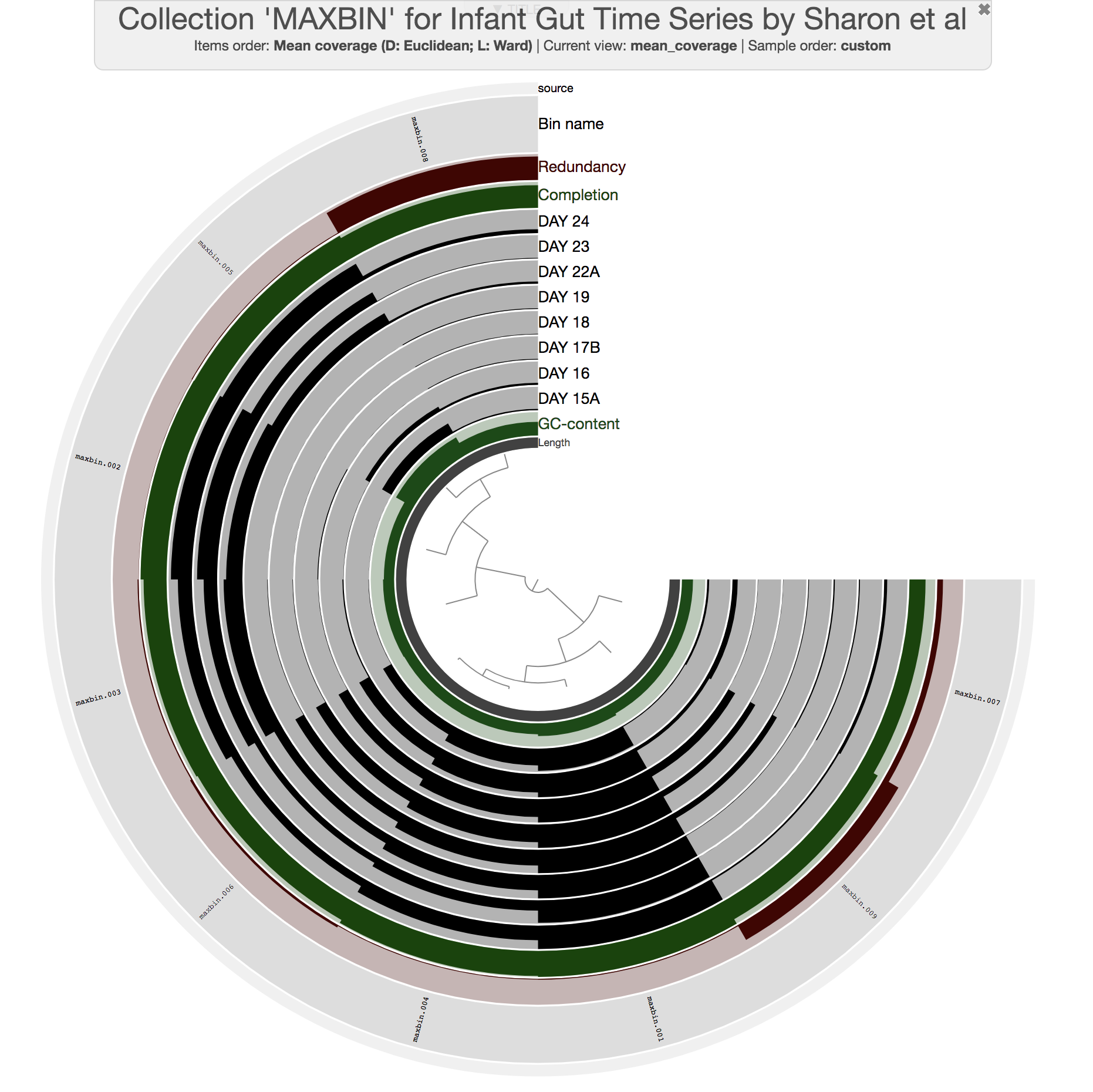

Alternatively, we can take a quick look at the binning results by initiating the interactive interface in collection mode:

anvi-interactive -p PROFILE.db \

-c CONTIGS.db \

-C MAXBIN

This command should give you a display similar to this:

All previous interactive displays were at the contig-level (each leaf in the center tree was a contig). However, this display is at the bin-level. Instead of contigs, this display shows us the distribution of bins MaxBin identified. We also have completion and redundancy estimates for each bin, which helps us make some early sense of what is going on.

Please read this post to learn more about completion and redundancy estimates: Assessing completion and contamination of metagenome-assembled genomes

It is clear that some bins are not as well-resolved as others. For instance, bins maxbin_007 and maxbin_008 have redundancy estimates of 22% and 91%, respectively, which suggests each of them describe multiple distinct populations. Well, clearly we would have preferred those bins to behave.

If you order bins based on their detection across metagenomes (by changing the ‘Items order’ to ‘detection’ from the menu in the Main tab), you can also see that bins maxbin_007 and maxbin_008 are right next to each other. This suggests that it may be a good idea to simply merge these bins first, and then use the program anvi-refine to avoid issues of over-splitting populations of interest. Let’s use the program anvi-merge-bins to merge them into a single bin first:

anvi-merge-bins -p PROFILE.db \

--collection-name MAXBIN \

--bin-names-list "maxbin_007, maxbin_008" \

--new-bin-name maxbin_007_and_008

If you take a quick look at the collection again, you can see that the new bin maxbin_007_and_008 is the bin that needs immediate action based on single-copy core genes:

anvi-estimate-genome-completeness -p PROFILE.db \

-c CONTIGS.db \

-C MAXBIN

Bins in collection "MAXBIN"

===============================================

╒════════════════════╤══════════╤══════════════╤════════════════╤════════════════╤══════════════╤════════════════╕

│ bin name │ domain │ confidence │ % completion │ % redundancy │ num_splits │ total length │

╞════════════════════╪══════════╪══════════════╪════════════════╪════════════════╪══════════════╪════════════════╡

│ maxbin_001 │ BACTERIA │ 1 │ 100 │ 4.23 │ 148 │ 2969341 │

├────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_002 │ BACTERIA │ 0.9 │ 94.37 │ 0 │ 88 │ 1801068 │

├────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_003 │ BACTERIA │ 0.7 │ 81.69 │ 2.82 │ 144 │ 2764617 │

├────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_004 │ BACTERIA │ 0.9 │ 97.18 │ 1.41 │ 188 │ 2571878 │

├────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_005 │ BACTERIA │ 1 │ 98.59 │ 0 │ 151 │ 2555414 │

├────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_006 │ BACTERIA │ 0.6 │ 78.87 │ 5.63 │ 305 │ 2901149 │

├────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_009 │ EUKARYA │ 0.7 │ 83.13 │ 7.23 │ 1379 │ 13915165 │

├────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_007_and_008 │ BACTERIA │ 1 │ 95.77 │ 154.93 │ 2381 │ 6287535 │

╘════════════════════╧══════════╧══════════════╧════════════════╧════════════════╧══════════════╧════════════════╛

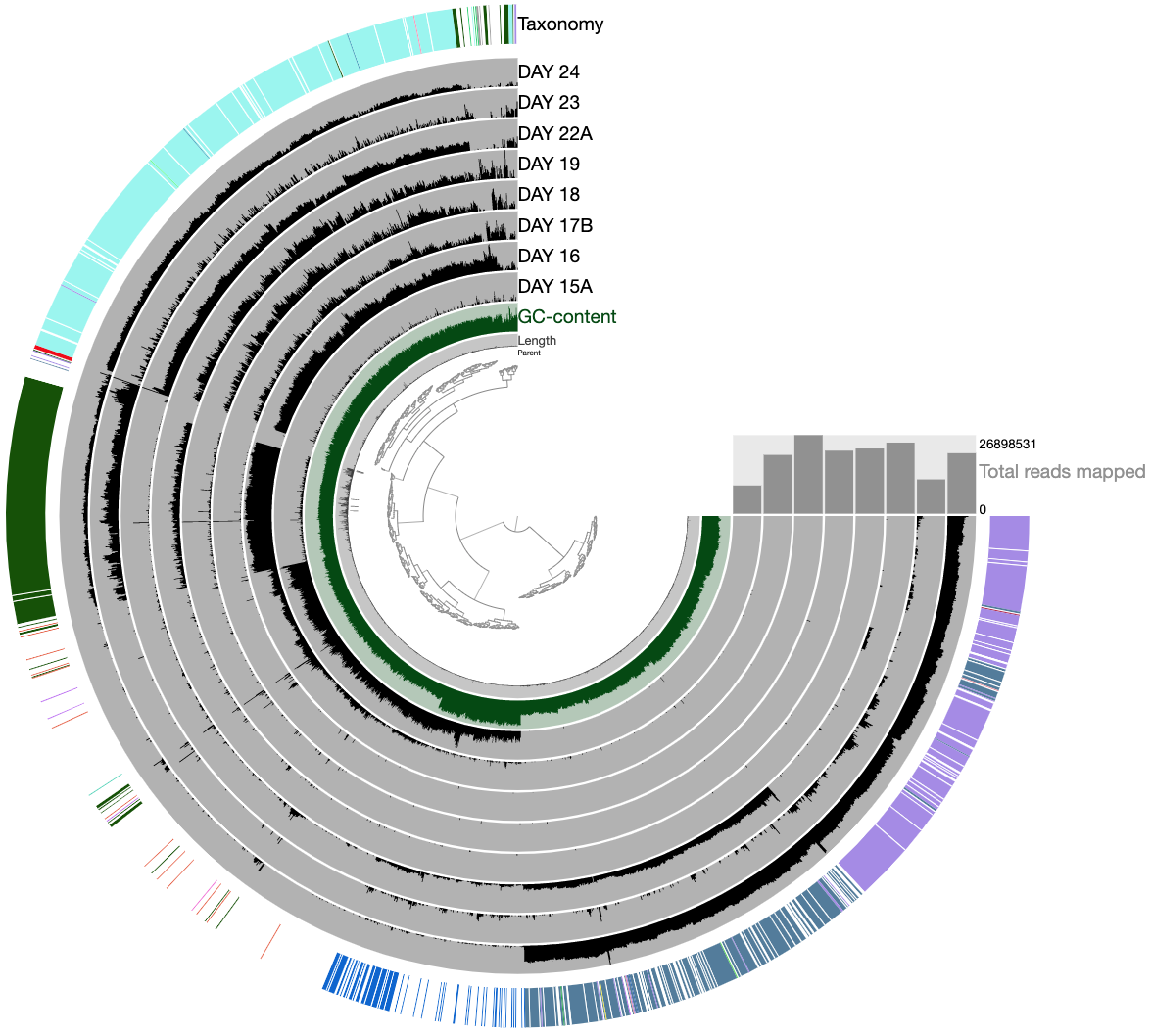

Fine. Let’s refine that bin:

anvi-refine -p PROFILE.db \

-c CONTIGS.db \

-C MAXBIN \

-b maxbin_007_and_008

Which should give us this display, on which we see the distribution of contigs that were originally binned into maxbin_007 and maxbin_008 across samples. The hierarchical clustering picked up some trends, and you can see clusters one can identify quickly:

We can now make the following selections to split these two bins into six, and update our database by storing these refined bins from the Bins panel in the interface:

If you take another look after this, you can see that maxbin_007_and_008 is replaced with smaller bins, and the total redundancy in the collection is much lower:

anvi-estimate-genome-completeness -p PROFILE.db \

-c CONTIGS.db \

-C MAXBIN

Bins in collection "MAXBIN"

===============================================

╒══════════════════════╤══════════╤══════════════╤════════════════╤════════════════╤══════════════╤════════════════╕

│ bin name │ domain │ confidence │ % completion │ % redundancy │ num_splits │ total length │

╞══════════════════════╪══════════╪══════════════╪════════════════╪════════════════╪══════════════╪════════════════╡

│ maxbin_001 │ BACTERIA │ 1 │ 100 │ 4.23 │ 148 │ 2969341 │

├──────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_002 │ BACTERIA │ 0.9 │ 94.37 │ 0 │ 88 │ 1801068 │

├──────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_003 │ BACTERIA │ 0.7 │ 81.69 │ 2.82 │ 144 │ 2764617 │

├──────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_004 │ BACTERIA │ 0.9 │ 97.18 │ 1.41 │ 188 │ 2571878 │

├──────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_005 │ BACTERIA │ 1 │ 98.59 │ 0 │ 151 │ 2555414 │

├──────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_006 │ BACTERIA │ 0.6 │ 78.87 │ 5.63 │ 305 │ 2901149 │

├──────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_009 │ EUKARYA │ 0.7 │ 83.13 │ 7.23 │ 1379 │ 13915165 │

├──────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_007_and_008_1 │ BACTERIA │ 0.4 │ 38.03 │ 2.82 │ 417 │ 696607 │

├──────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_007_and_008_2 │ BACTERIA │ 0.6 │ 47.89 │ 0 │ 369 │ 753160 │

├──────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_007_and_008_3 │ BLANK │ 1 │ 0 │ 0 │ 206 │ 290126 │

├──────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_007_and_008_4 │ BACTERIA │ 0.3 │ 32.39 │ 5.63 │ 488 │ 810476 │

├──────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_007_and_008_5 │ BACTERIA │ 0.3 │ 38.03 │ 2.82 │ 247 │ 2333489 │

├──────────────────────┼──────────┼──────────────┼────────────────┼────────────────┼──────────────┼────────────────┤

│ maxbin_007_and_008_6 │ BACTERIA │ 0.8 │ 71.83 │ 2.82 │ 556 │ 1237568 │

╘══════════════════════╧══════════╧══════════════╧════════════════╧════════════════╧══════════════╧════════════════╛

The take home message here is that even when automatic binning approaches yield poorly identified bins, it is possible to improve the final results through a manual refinement step. Clearly these extra steps require a lot of expertise, intuition, attention, and decision making. And fortunately you are all familiar with each one of them because science.

Thank you for following the tutorial this far!

Show/hide More on refinement

You can read more about anvi-refine here. Also you may want to look at Tom’s refining of the Loki archaea: Inspecting the genomic link between Archaea and Eukaryota.

If you are feeling lazy, you can just take a quick look at this videos from the post above.

First a closer look at Lokiarchaeum sp. GC14_75

And then curating it:

You should always double-check your metagenome-assembled genomes.

Show/hide Meren's two cents on binning

Binning is inherently a very challenging task.

In most cases it is absolutely doable, especially when there is a decent assembly, but it is very challenging.

The IGD is one of the most friendly metagenomic datasets available to play with (since an astonishing fraction of nucleotides map back to the assembly), and it comes from a well-implemented experimental design (because that’s what Banfield group does). Yet, you now have seen the extent of disagreement between multiple binning approaches even for this dataset.

You should reming yourself that each of these approaches are implemented by people who are well-trained scientists working with groups of people who are experts in their fields. These tools are benchmarked against others and showed improvements. So each one of them provides the best result compared to all others in at least one metagenomic dataset. I think understanding what this means is important. There is no silver bullet in the common bioinformatics toolkit that will take care of every dataset when you fire it. In fact, depending on the dataset, even the best tools we have may be as efficient as sticks and stones against the Death Star. Computational people are working very hard to improve things, but they would be the first ones to suggest that their tools should never make users feel free from the fact that it is their own responsibility to make sure the results are meaningful and appropriate.

So which one to choose? How to get out of this situation easily and move on? I know how much desire there is to outsource everything we do to fully automated computational solutions. I also acknowledge that the ability to do that is important to perform large-scale and reproducible analyses without going through too much pain. But we are not at a stage yet with metagenomics where you can rely on any of the available automated binning tools, and expect your MAGs to be safe and sound.

For instance, I think CONCOCT is doing a pretty awesome job identifying MAGs in the IGD, even with the low-abundance organisms. However, it is not perfect, either. In fact if you look carefully, you can see that it creates two bins for one Candida albicans genome. Hierarchical clustering will always get you closest to the best organization of contigs with simple distance metrics and linkage algorithms. But there are major challenges associated with that approach, including the fact that it is simply an exploratory method and can’t give you “bins” out-of-the-box. Even more importantly, it has tremendous limitations come from its computational complexity (~O(m2 log m), where m is the number of data points). So in most cases it is not even a remote possibility to organize contigs using a hierarchical clustering approach in an assembly in reasonable amount of time (and there is no way to visualize that even if you were to get a dendrogram for 200,000 contigs (you can create simple 2D ordinations with that number of items, but you really shouldn’t, but that’s another discussion)). Except assemblies with rather smaller number of contigs like the IGD, we are always going to use automated ways to identify bins, at least initially, knowing that resulting bins may be, and in most cases will be, crappy. That’s why in anvi’o we implemented ways to quickly look into automatically identified bins (i.e., the collection mode of anvi-interactive), and even refine those with poor redundancy scores to improve final results (i.e., anvi-refine).

So we can fix crappy bins to an extent since we know more or less how things should look like, and we have tools to do that. That being said, there is one more consideration that is very easy to miss. Although it is somewhat possible to recover from conflation error (i.e., more than one genome ends up in one bin), it is much harder to recover from the fragmentation error (i.e., one genome is split into multiple bins). You can see an example for fragmentation error if you take a careful look from this figure (i.e., CONCOCT bins between 9:30 and 12:00 o’clock, or MaxBin bins between 5:00 and 7:00 o’clock):

This is a problem that likely happens quite often, and very hard to deal with once the bins are identified. But we can recover from that.

From fragmentation to conflation error: A Meren Lab Heuristic to fight back

One of the heuristics we recently started using in our lab to avoid fragmentation error is to confine CONCOCT’s clustering space to a much smaller number of clusters than the expected number of bacterial genomes in a given dataset, and then curate resulting contaminated bins manually. Let’s say we expect to find n bacterial genomes, so we run CONCOCT with a maximum number of clusters of about n/2 (no judging! I told you it was a heuristic!).

Well, how do you even know how many bacterial genomes you should expect to find in a metagenome?

Thanks for the great question. Although this may sound like a challenging problem to some, we have a very simple way to resolve it (which I described in this blog post). If you still have access to the IGD, you can run this simple command:

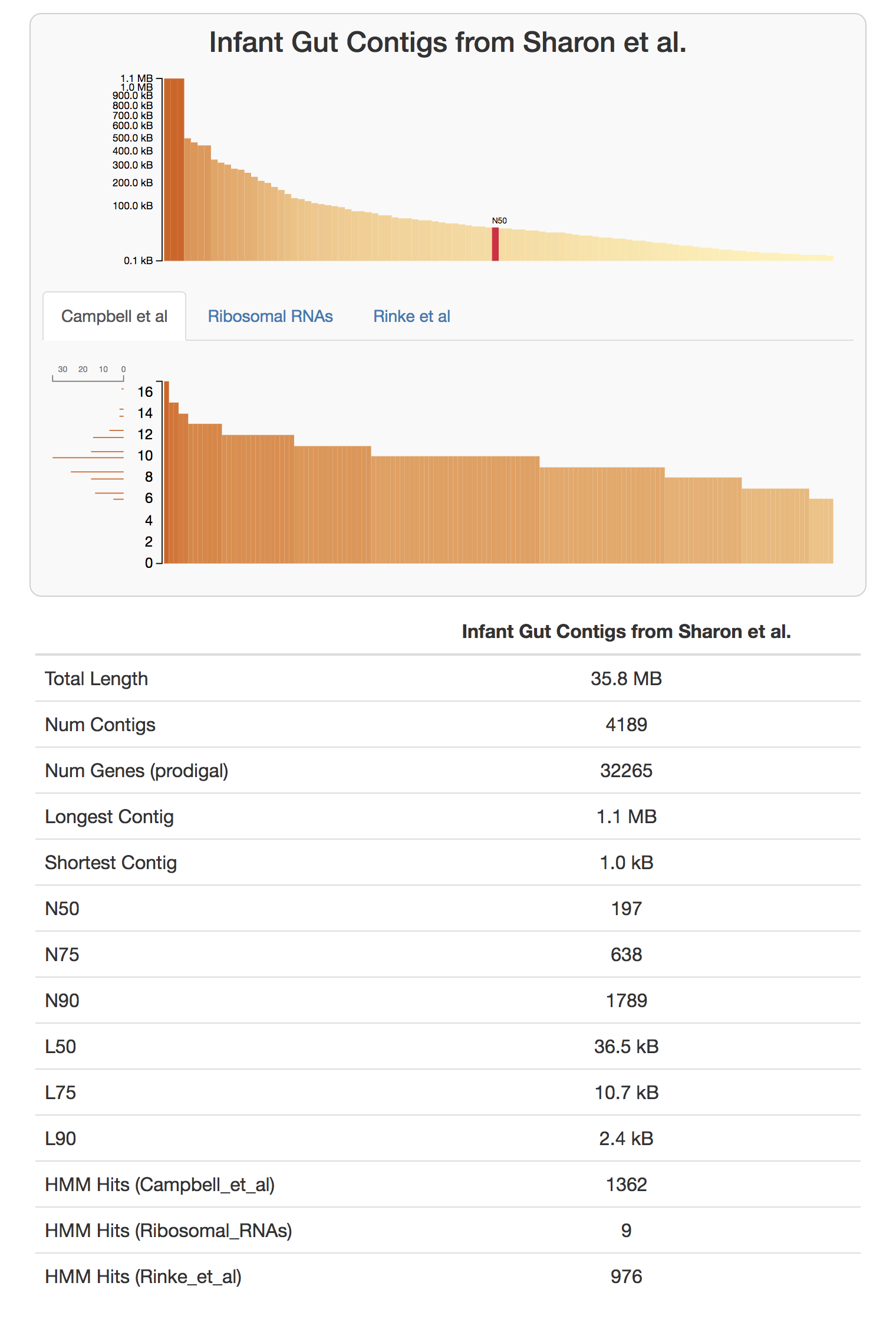

anvi-display-contigs-stats CONTIGS.db

If you take a look at the resulting interactive graph, you can see that one should expect to find about 10 near-complete genomes in this dataset:

We have a citable version, and a more formal description of this workflow in our recent paper “Identifying contamination with advanced visualization and analysis practices: metagenomic approaches for eukaryotic genome assemblies” (see the supplementary material).

Fine. Using anvi-cluster-with-concoct program, we ask CONCOCT to naively identify 5 clusters in this dataset, and store the results in the profile database as a collection:

anvi-cluster-with-concoct -p PROFILE.db \

-c CONTIGS.db \

--num-clusters 5 \

-C CONCOCT_C5

anvi-cluster-with-concoct has been superseded with anvi-cluster-contigs

Now you can run the interface again,

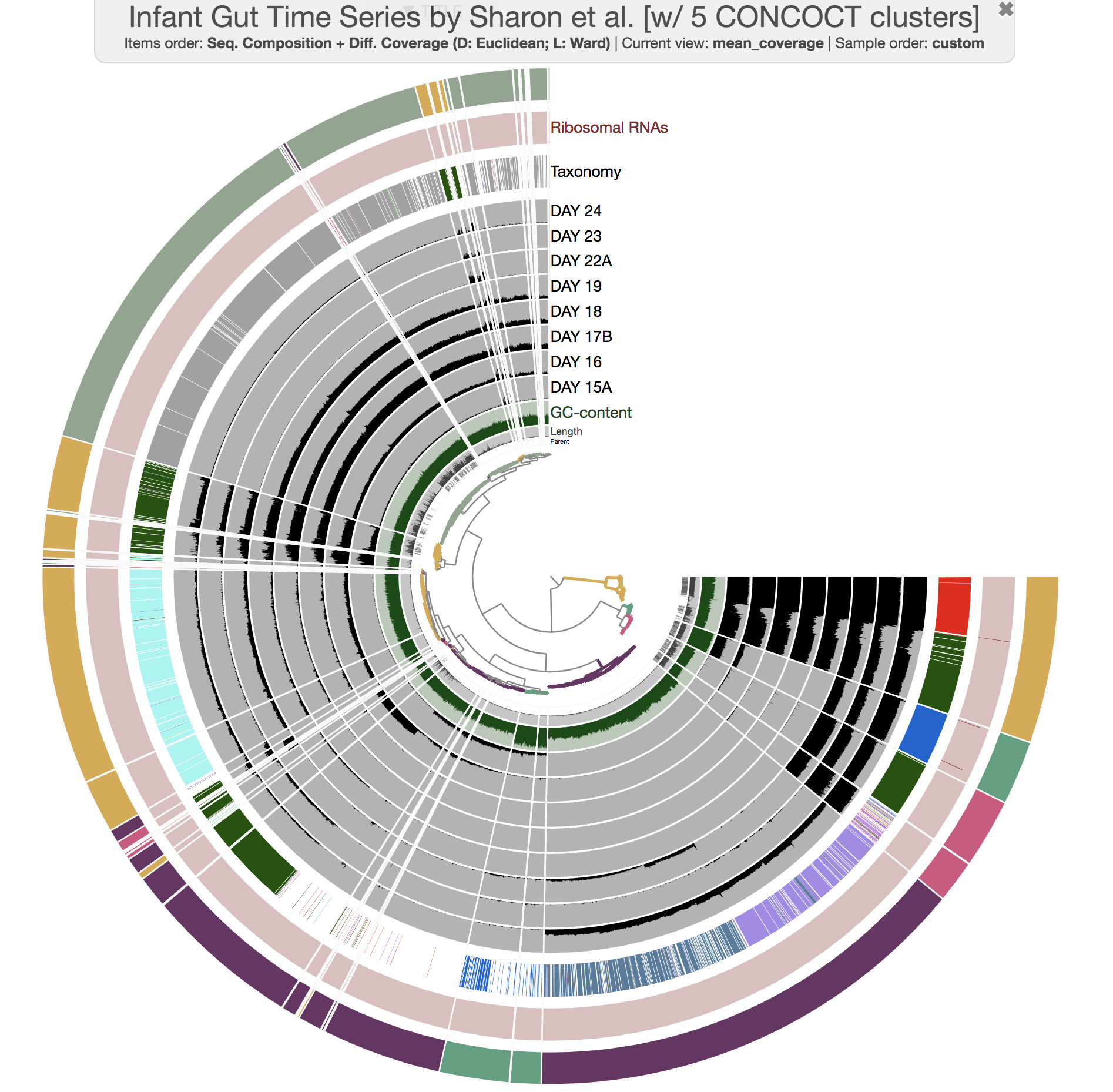

anvi-interactive -p PROFILE.db \

-c CONTIGS.db \

--title 'Infant Gut Time Series by Sharon et al. [w/ 5 CONCOCT clusters]' \

--collection-autoload CONCOCT_C5

and you would see this:

Well, there aren’t any fragmentation errors anymore, and in fact CONCOCT did an amazing job to identify general patterns in the dataset. Now refining these bins to fix all the conflation errors would be much more easier. If you would like to try, here is an example:

anvi-refine -p PROFILE.db \

-c CONTIGS.db \

-C CONCOCT_C5 \

-b Bin_1

There are more ways to improve bins and binning results. But although we have seen major improvements in our research by exploring these directions, there are also many other cases where nothing is quite enough.

Then it is time to increase the depth of sequencing, implement a different assembly strategy, rethink the sampling strategy, or change the experimental approach to do what seems to be undoable. Here is an example from Tom Delmont et al. to that last point with soil metagenomics: doi:10.3389/fmicb.2015.00358.

We all just have to continue working, and enjoy this revolution.

Chapter III: Phylogenomics

This is more of a practical tutorial to do phylogenomic analyses on metagenome-assembled genomes described in anvi’o collections. For a more abstract tutorial on phylogenomics, please consider first reading ‘An anvi’o workflow for phylogenomics’.

To see a practical application of phylogenomics see this workflow.

If you haven’t followed the previous sections of the tutorial, you will need the anvi’o merged profile database and the anvi’o contigs database for the IGD available to you. Before you continue, please click here, do everything mentioned there, and come back right here to continue following the tutorial from the next line when you read the directive go back.

Please run the following command in the IGD dir, so you have everything you need. We will simply import our previously generated collection of bins in the IGD dataset as the default collection:

anvi-import-collection additional-files/collections/merens.txt \

--bins-info additional-files/collections/merens-info.txt \

-p PROFILE.db \

-c CONTIGS.db \

-C default

At this point, you have in your anvi’o profile database a collection with multiple bins:

anvi-show-collections-and-bins -p PROFILE.db

Collection: "default"

===============================================

Collection ID ................................: default

Number of bins ...............................: 13

Number of splits described ...................: 4,451

Bin names ....................................: Aneorococcus_sp, C_albicans, E_facealis, F_magna, L_citreum, P_acnes, P_avidum, P_rhinitidis, S_aureus, S_epidermidis, S_hominis, S_lugdunensis, Streptococcus

Putting genomes in a phylogenomic context is one of the common ways to compare them to each other. The common practice is to concatenate aligned sequences of single-copy core genes for each genome of interest, and generate a phylogenomic tree by analyzing the resulting alignment.

Let’s assume we want to run a phylogenomic analysis on all genome bins we have in the collection merens in the IGD (you may have your own collections somewhere, that is fine too).

In order to do the phylogenomic analysis, we will need a FASTA file of concatenated genes. And to get that FASTA file out of our anvi’o databases, we will primarily use the program anvi-get-sequences-for-hmm-hits.

Selecting genes from an HMM Profile

We first need to identify an HMM profile, and then select some gene names from this profile to play with.

Going back to the IGD, let’s start by looking at what HMM profiles are available to us:

anvi-get-sequences-for-hmm-hits -c CONTIGS.db \

-p PROFILE.db \

-o seqs-for-phylogenomics.fa \

--list-hmm-sources

* Bacteria_71 [type: singlecopy] [num genes: 71]

* Archaea_76 [type: singlecopy] [num genes: 76]

* Protista_83 [type: singlecopy] [num genes: 83]

* Ribosomal_RNAs [type: Ribosomal_RNAs] [num genes: 12]

As you know, you can use anvi-run-hmms program with custom made HMM profiles to add your own HMMs into the contigs database.

Alright. We have two. Let’s see what genes do we have in Bacteria_71:

anvi-get-sequences-for-hmm-hits -c CONTIGS.db \

-p PROFILE.db \

-o seqs-for-phylogenomics.fa \

--hmm-source Bacteria_71 \

--list-available-gene-names

* Bacteria_71 [type: singlecopy]: ADK, AICARFT_IMPCHas, ATP-synt, ATP-synt_A,

Chorismate_synt, EF_TS, Exonuc_VII_L, GrpE, Ham1p_like, IPPT, OSCP, PGK,

Pept_tRNA_hydro, RBFA, RNA_pol_L, RNA_pol_Rpb6, RRF, RecO_C, Ribonuclease_P,

Ribosom_S12_S23, Ribosomal_L1, Ribosomal_L13, Ribosomal_L14, Ribosomal_L16,

Ribosomal_L17, Ribosomal_L18p, Ribosomal_L19, Ribosomal_L2, Ribosomal_L20,

Ribosomal_L21p, Ribosomal_L22, Ribosomal_L23, Ribosomal_L27, Ribosomal_L27A,

Ribosomal_L28, Ribosomal_L29, Ribosomal_L3, Ribosomal_L32p, Ribosomal_L35p,

Ribosomal_L4, Ribosomal_L5, Ribosomal_L6, Ribosomal_L9_C, Ribosomal_S10,

Ribosomal_S11, Ribosomal_S13, Ribosomal_S15, Ribosomal_S16, Ribosomal_S17,

Ribosomal_S19, Ribosomal_S2, Ribosomal_S20p, Ribosomal_S3_C, Ribosomal_S6,

Ribosomal_S7, Ribosomal_S8, Ribosomal_S9, RsfS, RuvX, SecE, SecG, SecY, SmpB,

TsaE, UPF0054, YajC, eIF-1a, ribosomal_L24, tRNA-synt_1d, tRNA_m1G_MT,

Adenylsucc_synt

OK. A lot. Good for you, Bacteria_71.

Bacteria_71 is a collection that anvi’o developers curated by taking Mike Lee’s bacterial single-copy core gene collection first released in GToTree, which is an easy-to-use phylogenomics workflow.

For the sake of this simple example, let’s assume we want to use a bunch of ribosomal genes for our phylogenomic analysis: Ribosomal_L1, Ribosomal_L2, Ribosomal_L3, Ribosomal_L4, Ribosomal_L5, Ribosomal_L6.

OK. The following command will give us all these genes from all bins described in the collection default:

anvi-get-sequences-for-hmm-hits -c CONTIGS.db \

-p PROFILE.db \

-o seqs-for-phylogenomics.fa \

--hmm-source Bacteria_71 \

-C default \

--gene-names Ribosomal_L1,Ribosomal_L2,Ribosomal_L3,Ribosomal_L4,Ribosomal_L5,Ribosomal_L6

Init .........................................: 4451 splits in 13 bin(s)

Hits .........................................: 668 hits for 1 source(s)

Filtered hits ................................: 64 hits remain after filtering for 6 gene(s)

Mode .........................................: DNA sequences

Genes are concatenated .......................: False

Output .......................................: seqs-for-phylogenomics.fa

If you look at the resulting FASTA file, you will realize that it doesn’t look like an alignment (I trimmed the output, you will see the full output when you look at the file yourself):

less seqs-for-phylogenomics.fa

>Ribosomal_L1___Bacteria_71___9a3cc bin_id:E_facealis|source:Bacteria_71|e_value:8.4e-59|contig:Day17a_QCcontig1|gene_callers_id:116|start:106378|stop:107068|length:690

ATGGCTAAAAAGAGCAAAAAAATGCAAGAAGCATTGAAAAAAGTTGATGCTACAAAAGCTTACTCAGTTGAAGAAGCAGTAGCTTTAGCAAAAGATACAAACATCGCGAAATTTGACGCA

(...)

>Ribosomal_L6___Bacteria_71___05162 bin_id:S_epidermidis|source:Bacteria_71|e_value:1.1e-43|contig:Day17a_QCcontig7|gene_callers_id:2325|start:13243|stop:13780|length:537

ATGAGTCGTGTTGGTAAGAAAATTATTGACATTCCTAGTGACGTAACAGTAACTTTTGACGGAAGTCATGTCACTGTAAAAGGTCCAAAAGGTGAATTAGAAAGAACTTTAAATGAAAGA

(...)

>Ribosomal_L5___Bacteria_71___ec17f bin_id:S_epidermidis|source:Bacteria_71|e_value:1.8e-31|contig:Day17a_QCcontig7|gene_callers_id:2328|start:14441|stop:14981|length:540

TTGAACCGTTTAAAAGAAAAATTTAATACAGAAGTTACTGAAAACTTAGTGAAAAAATTCAATTATAGTTCAGTGATGGAAGTACCAAAAATTGAGAAAATCGTTGTGAATATGGGTGTA

(...)

To exit less mode, press q.

Every sequence for every HMM hit is for itself :/ Hmm.

Concatenated we stand, divided we fall.

Concatenating genes

Although you can see how and why the previous output could be very useful for many other purposes, it is kinda useless for a phylogenomic analysis since we need a single concatenated alignment of gene sequences per genome.

If you look at the help menu of anvi-get-sequences-for-hmm-hits, you will see that there is a flag, --concatenate-genes, to get your genes of interest to be concatenated. Let’s do that:

anvi-get-sequences-for-hmm-hits -c CONTIGS.db \

-p PROFILE.db \

-o seqs-for-phylogenomics.fa \

--hmm-source Bacteria_71 \

-C default \

--gene-names Ribosomal_L1,Ribosomal_L2,Ribosomal_L3,Ribosomal_L4,Ribosomal_L5,Ribosomal_L6 \

--concatenate-genes

Config Error: If you want your genes to be concatenated into a multi-alignment file, you must

also ask for the best hit (using the `--return-best-hit`) flag to avoid issues

if there are more than one hit for a gene in a given genome. Anvi'o could have

set this flag on your behalf, but it just is not that kind of a platform :/

Well. That didn’t go well.

The reason why it didn’t go so well is because even in the most complete genomes, there may be multiple HMM hits for a given ‘single-copy gene’. Here is an evidence for that coming from gold standard genomes for skeptics. As a solution to this problem, anvi’o asks you to use the --return-best-hit flag, which will return the most significant HMM hit if there are more than one gene that matches to the HMM of a given gene in a given genome. Fine. Let’s do that, then:

anvi-get-sequences-for-hmm-hits -c CONTIGS.db \

-p PROFILE.db \

-o seqs-for-phylogenomics.fa \

--hmm-source Bacteria_71 \

-C default \

--gene-names Ribosomal_L1,Ribosomal_L2,Ribosomal_L3,Ribosomal_L4,Ribosomal_L5,Ribosomal_L6 \

--concatenate-genes \

--return-best-hit

If you take a look at the output file, you can see that we are getting somewhere.

But the output is in DNA alphabet, which may not be the best option for phylogenomic analyses, especially if the genomes you have are coming from distant clades (which happens to be the case for IGD). Fortunately, you can easily switch to AA alphabet with an additional flag --get-aa-sequences (and no, there is no end to anvi’o flags, and the earlier you start getting used to the idea of reading those help menus, the sooner you will master your anvi’o game).

anvi-get-sequences-for-hmm-hits -c CONTIGS.db \

-p PROFILE.db \

-o seqs-for-phylogenomics.fa \

--hmm-source Bacteria_71 \

-C default \

--gene-names Ribosomal_L1,Ribosomal_L2,Ribosomal_L3,Ribosomal_L4,Ribosomal_L5,Ribosomal_L6 \

--concatenate-genes \

--return-best-hit \

--get-aa-sequences

If you look at the resulting file again, you will see how everything looks just so lovely. Congratulations. You did it.

In v8, anvi’o forgets to tell you that two bins in the default collection, P_acnes and S_lugdunensis, have none of the 6 requested genes, and instead silently removes them from the analysis (bad anvi’o). This is fixed in the development version and will hopefully be updated in this tutorial at the time of the next stable release. But for now, just keep it in mind since the loss of those two bins will become relevant in the next section.

Computing the phylogenomic tree

Once you have your concatenated genes, which you now have them in seqs-for-phylogenomics.fa if you followed the previous section, it is time to perform the phylogenomic analysis.

There are multiple ways to do this. Here we will use the program anvi-gen-phylogenomic-tree, which accepts a FASTA file and uses one of the programs it knows about to compute the tree. Currently the only option is FastTree, which infers approximately-maximum-likelihood phylogenetic trees from FASTA files that look like yours. Send us your favorite, and we will happily consider expanding the collection of available tools for this analysis.

Computing a phylogenomic tree from our FASTA file is as simple as this:

anvi-gen-phylogenomic-tree -f seqs-for-phylogenomics.fa \

-o phylogenomic-tree.txt

If anvi’o complains that you don’t have FastTree, you need to do two things. First, feel ashamed. Second, get FastTree: click me. (If you are using a mac and have brew, quietly type brew install fasttree and pretend it never happened).

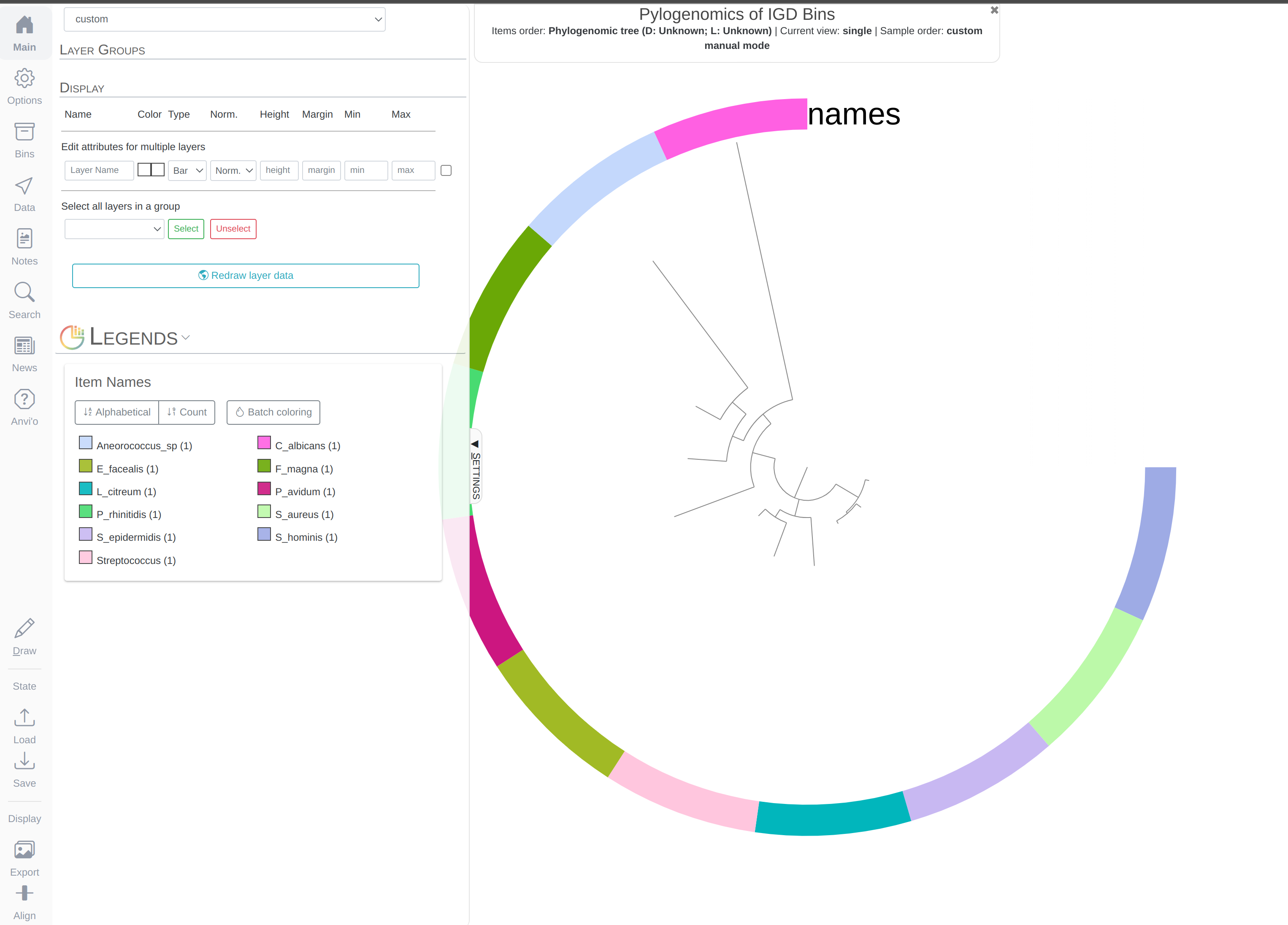

The resulting file phylogenomic-tree.txt is a proper newick tree. If you want, you can visualize it immediately with anvi-interactive in manual mode:

anvi-interactive --tree phylogenomic-tree.txt \

-p temp-profile.db \

--title "Pylogenomics of IGD Bins" \

--manual

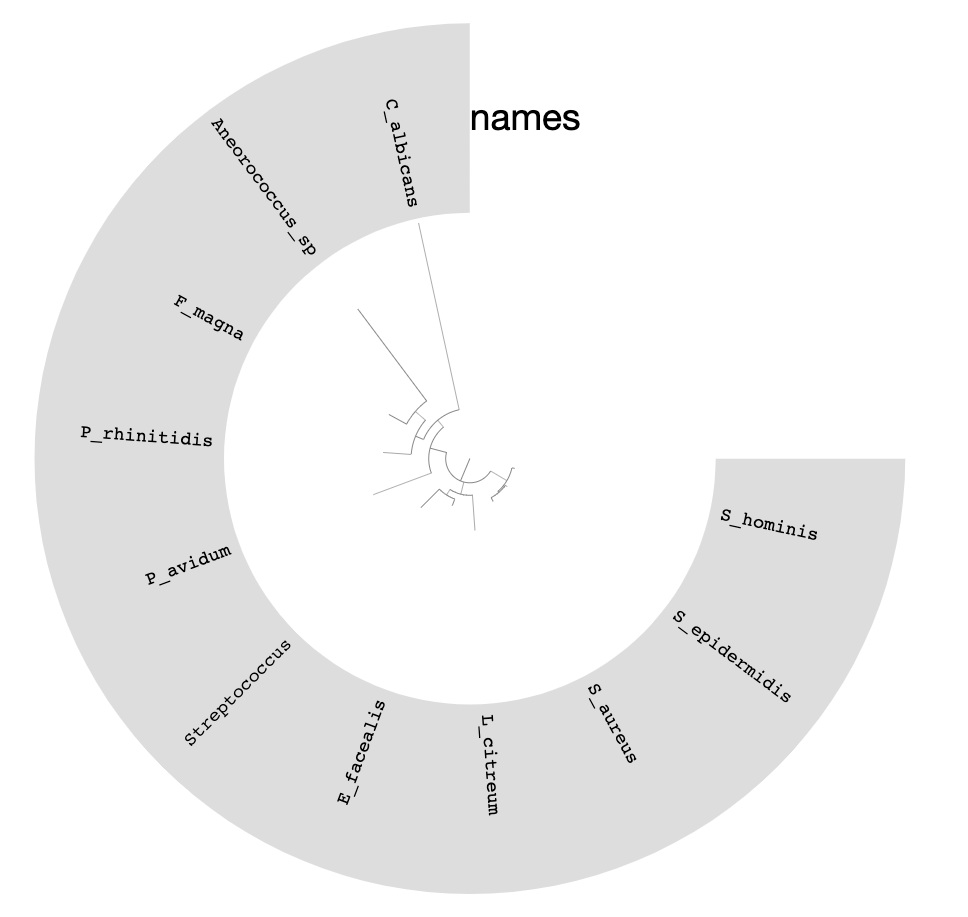

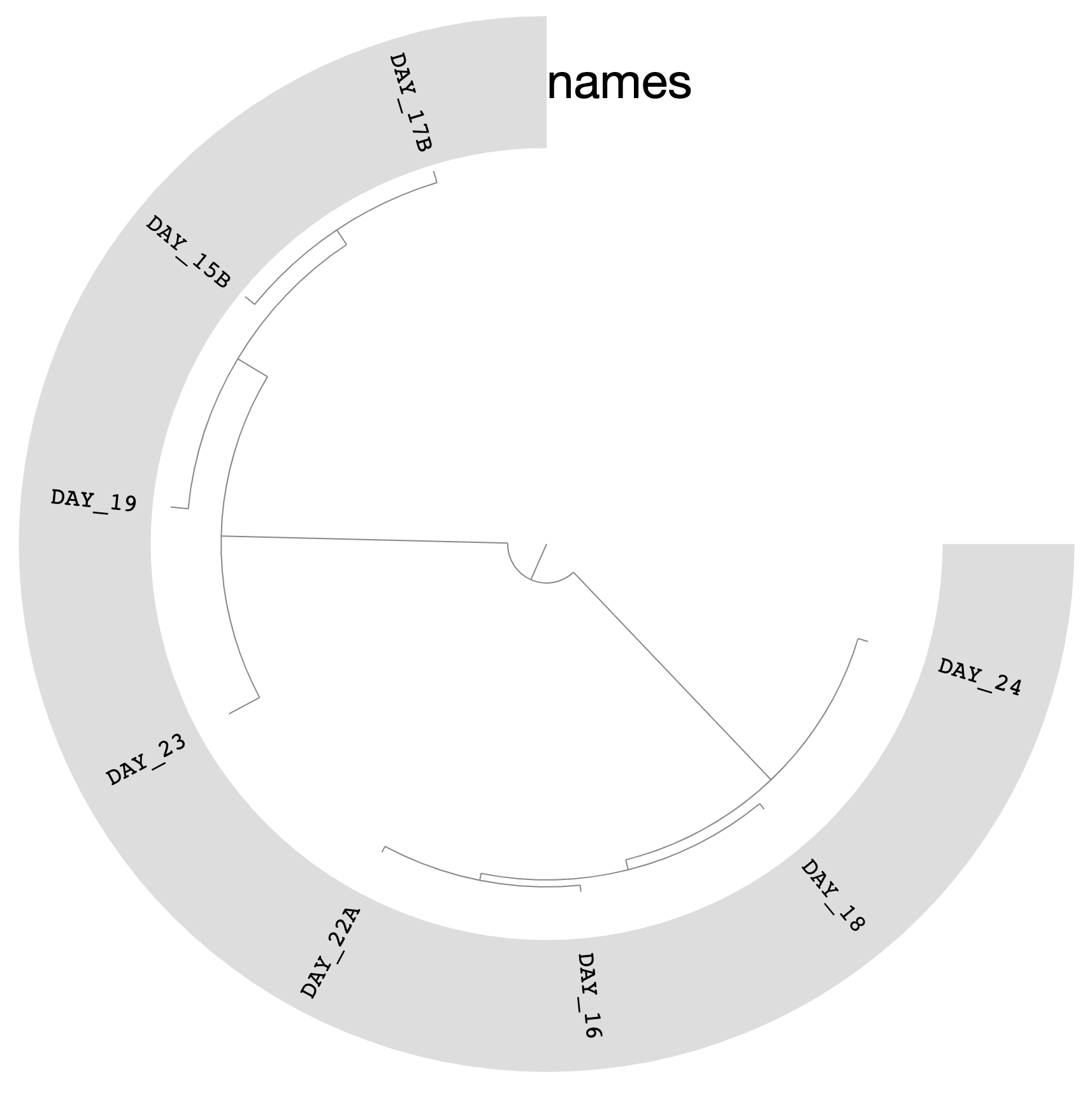

Which should give you this (after clicking Draw):

You can replace the colors with the bin names by selecting Text from Main > Legends > Item Names > bin_name and re-clicking Draw:

We can do much more with this phylogenomic tree of our bins than visualizing it in manual mode.

For instance, we could use it immediately to organize our bins in our collection while showing their distribution across samples.

anvi-interactive -p PROFILE.db \

-c CONTIGS.db \

-C default \

--tree phylogenomic-tree.txt

You will likely get an error from this command due to the loss of 2 bins that are missing the ribosomal proteins we asked for (see warning box at the end of the previous section). Those 2 bins never made it into the seqs-for-phylogenomics.fa, and therefore weren’t included in the tree. We’ll hopefully fix this section of the tutorial soon, but in the meantime please keep reading for the solution.

If you got an error from the previous command saying something like “the 11 items in your tree file do not match to the 13 items the ‘default’ collection describes”, then you can fix the mismatch between the two by making a new collection containing only the 11 genomes that made it into the phylogenomic tree. Here is how you can do that by exporting the original ‘default’ collection, removing the 2 bins missing from the tree (here done using the inverse grep command, but other strategies are possible), and importing the reduced collection back into the profile database:

anvi-export-collection -p PROFILE.db -C default

grep -v P_acnes collection-default.txt | grep -v S_lugdunensis > collection-default-reduced.txt

anvi-import-collection -c CONTIGS.db -p PROFILE.db -C default_reduced collection-default-reduced.txt

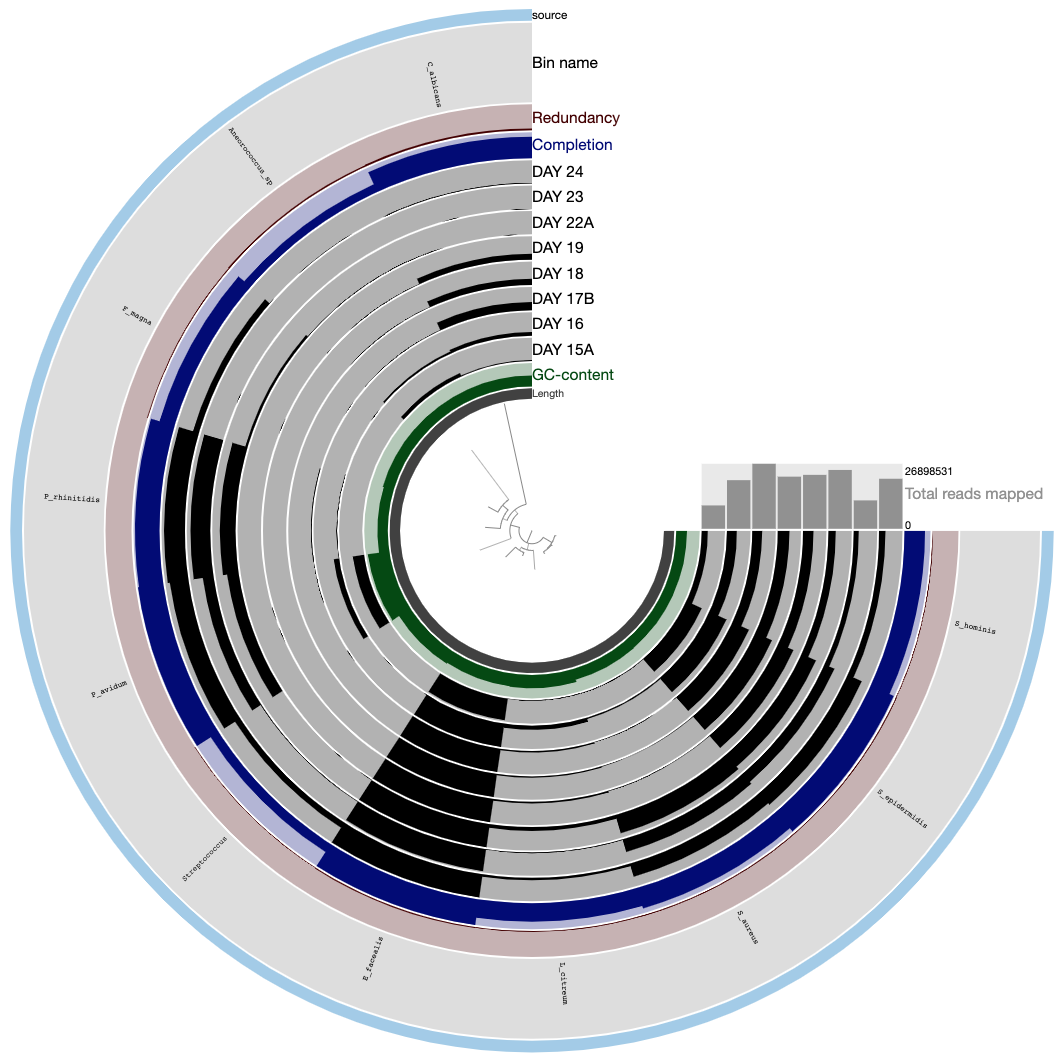

After this, you can visualize the tree like so:

anvi-interactive -p PROFILE.db \

-c CONTIGS.db \

-C default_reduced \

--tree phylogenomic-tree.txt

Which would give you the following display after selecting the ‘Phylogenomic tree’ from the ‘orders’ combo box in the ‘Settings’ tab (note that the name of your collection might not match to the one in the screenshot).

The tree in the middle shows the phylogenomic organization of bins we identified in the IGD.

Now you know how to organize distantly related genomes using universally conserved genes.

Chapter IV: Pangenomics

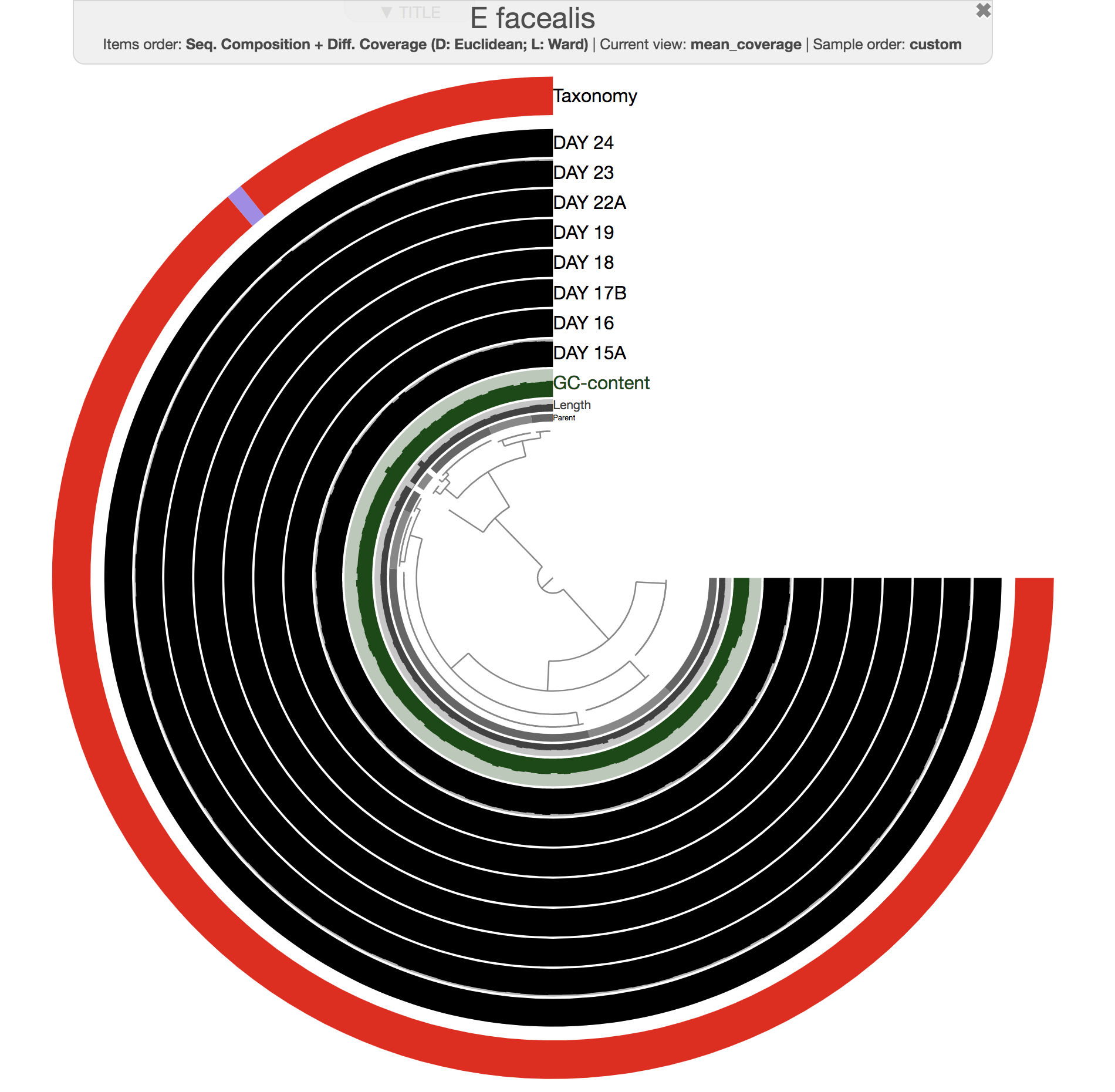

Both phylogenomics and pangenomics are strategies under the umbrella of comparative genomics, and they are inherently very similar despite their key differences. In this chapter we will discuss pangenomics and use anvi’o to have a small pangenomic analysis using our famous E. faecalis bin we recovered from the infant gut dataset and a bunch of others from the interwebs.

You can find a comprehensive tutorial on the anvi’o pangenomic workflow here.

If you haven’t followed the previous sections of the tutorial, you will need the anvi’o merged profile database and the anvi’o contigs database for the IGD available to you. Before you continue, please click here, do everything mentioned there, and come back right here to continue following the tutorial from the next line when you read the directive go back.

Please run following command in the IGD dir. They will set the stage for us to take a look at the E. faecalis bin:

anvi-import-collection additional-files/collections/e-faecalis.txt \

--bins-info additional-files/collections/e-faecalis-info.txt \

-p PROFILE.db \

-c CONTIGS.db \

-C E_faecalis

Generating a genome storage

For this example I downloaded 6 E. faecalis, and 5 E. faecium genomes to analyze them together with our E. faecalis bin. For each of these 11 external genomes, I generated anvi’o contigs databases. You can find all of them in the additional files directory:

ls additional-files/pangenomics/external-genomes/*db

additional-files/pangenomics/external-genomes/Enterococcus_faecalis_6240.db

additional-files/pangenomics/external-genomes/Enterococcus_faecalis_6250.db

additional-files/pangenomics/external-genomes/Enterococcus_faecalis_6255.db

additional-files/pangenomics/external-genomes/Enterococcus_faecalis_6512.db

additional-files/pangenomics/external-genomes/Enterococcus_faecalis_6557.db

additional-files/pangenomics/external-genomes/Enterococcus_faecalis_6563.db

additional-files/pangenomics/external-genomes/Enterococcus_faecium_6589.db

additional-files/pangenomics/external-genomes/Enterococcus_faecium_6590.db

additional-files/pangenomics/external-genomes/Enterococcus_faecium_6601.db

additional-files/pangenomics/external-genomes/Enterococcus_faecium_6778.db

additional-files/pangenomics/external-genomes/Enterococcus_faecium_6798.db

The post Accessing and including NCBI genomes in ‘omics analyses in anvi’o explains how to download sets of genomes you are interested in from the NCBI and turn them into anvi’o contigs databases.

There also are two files in the additional-files/pangenomics directory to describe how to access to the external-genomes:

| name | contigs_db_path |

|---|---|

| E_faecalis_6240 | external-genomes/Enterococcus_faecalis_6240.db |

| E_faecalis_6250 | external-genomes/Enterococcus_faecalis_6250.db |

| E_faecalis_6255 | external-genomes/Enterococcus_faecalis_6255.db |

| E_faecalis_6512 | external-genomes/Enterococcus_faecalis_6512.db |

| E_faecalis_6557 | external-genomes/Enterococcus_faecalis_6557.db |

| E_faecalis_6563 | external-genomes/Enterococcus_faecalis_6563.db |

| E_faecium_6589 | external-genomes/Enterococcus_faecium_6589.db |

| E_faecium_6590 | external-genomes/Enterococcus_faecium_6590.db |

| E_faecium_6601 | external-genomes/Enterococcus_faecium_6601.db |

| E_faecium_6778 | external-genomes/Enterococcus_faecium_6778.db |

| E_faecium_6798 | external-genomes/Enterococcus_faecium_6798.db |

and the internal one:

| name | bin_id | collection_id | profile_db_path | contigs_db_path |

|---|---|---|---|---|

| E_faecalis_SHARON | E_faecalis | E_faecalis | ../../PROFILE.db | ../../CONTIGS.db |

It is this simple to combine MAGs and isolates.

So everything is ready for an analysis, and the first step in the pangenomic workflow is to generate an anvi’o genomes storage.

anvi-gen-genomes-storage -i additional-files/pangenomics/internal-genomes.txt \

-e additional-files/pangenomics/external-genomes.txt \

-o Enterococcus-GENOMES.db

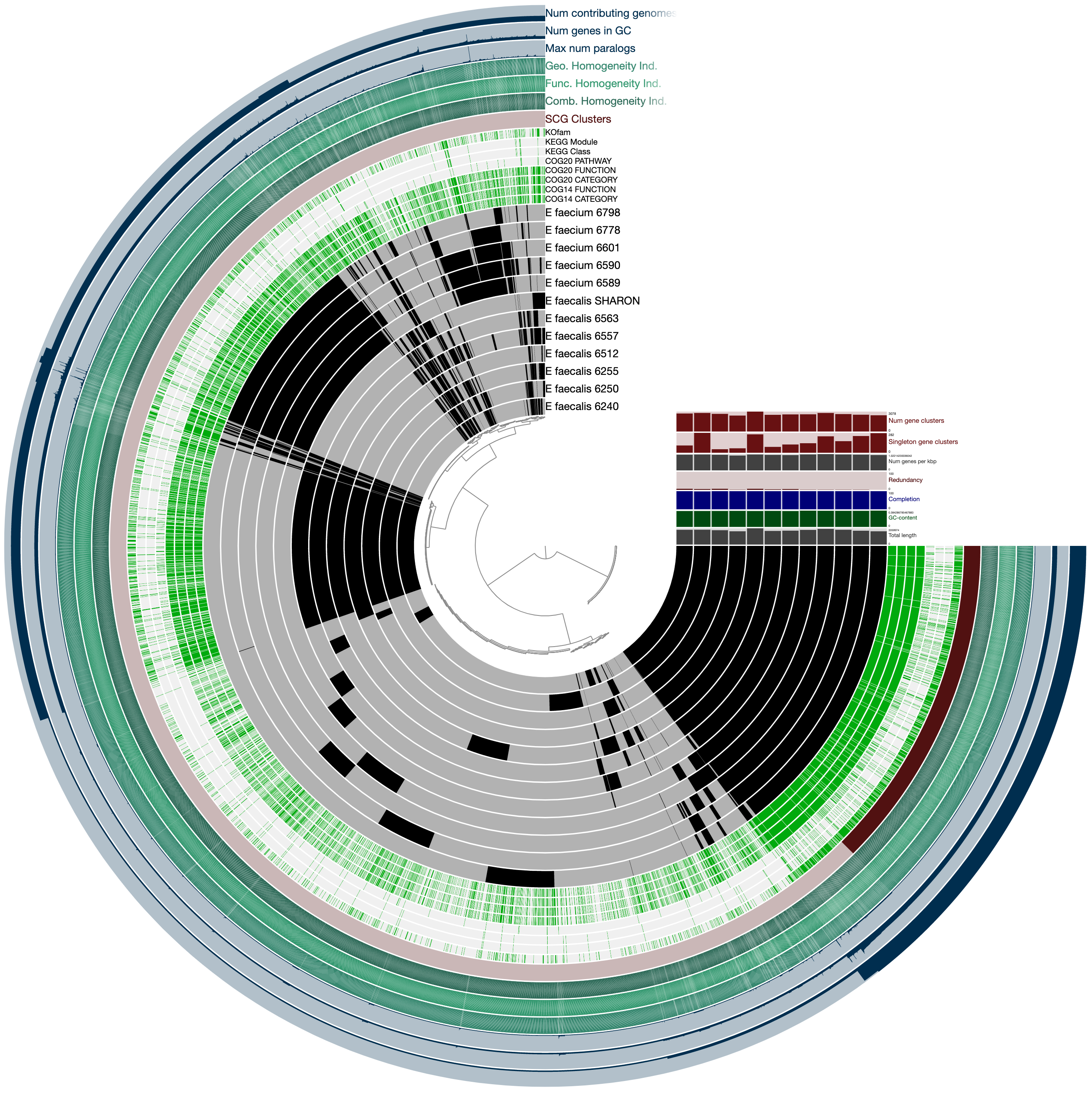

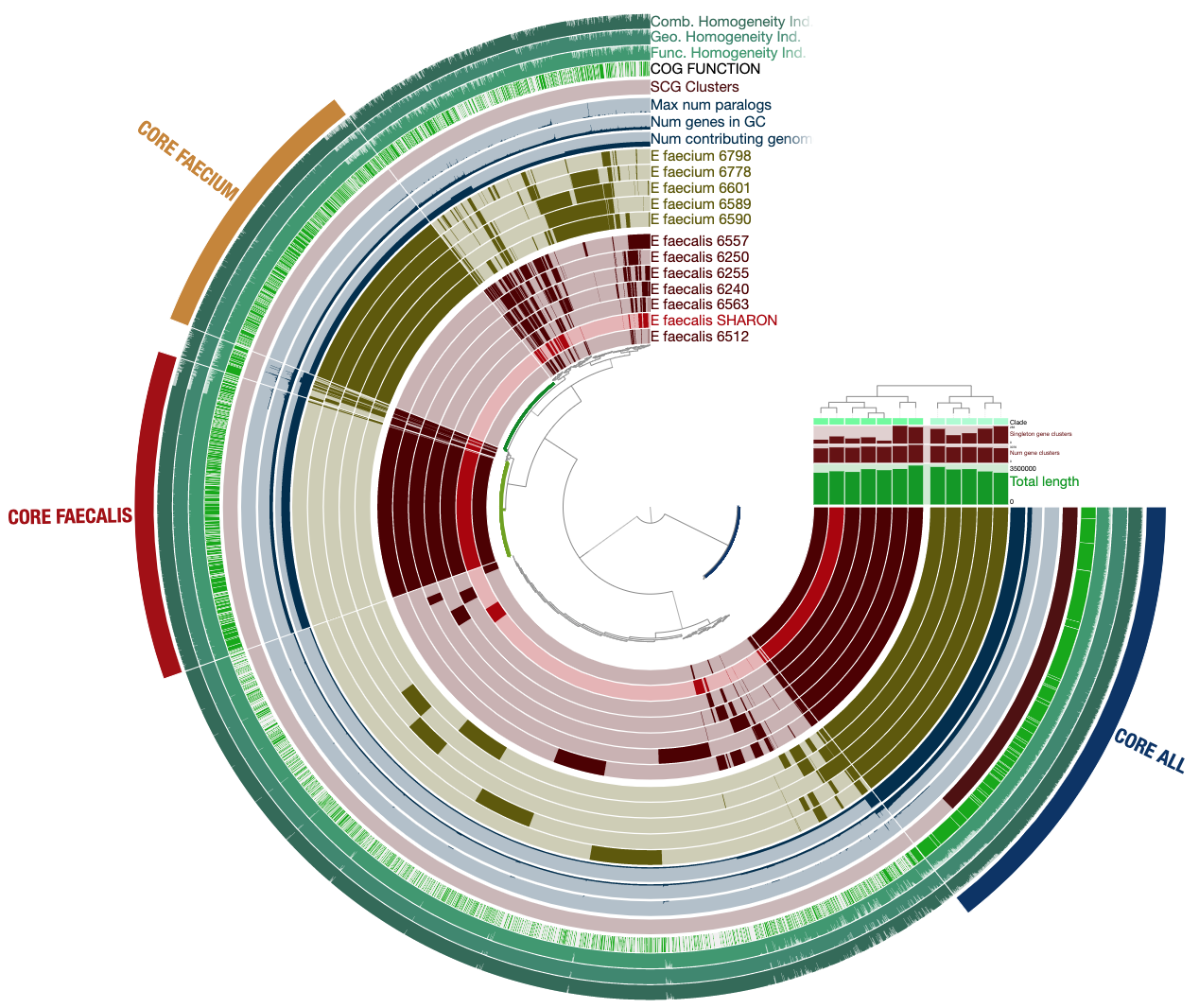

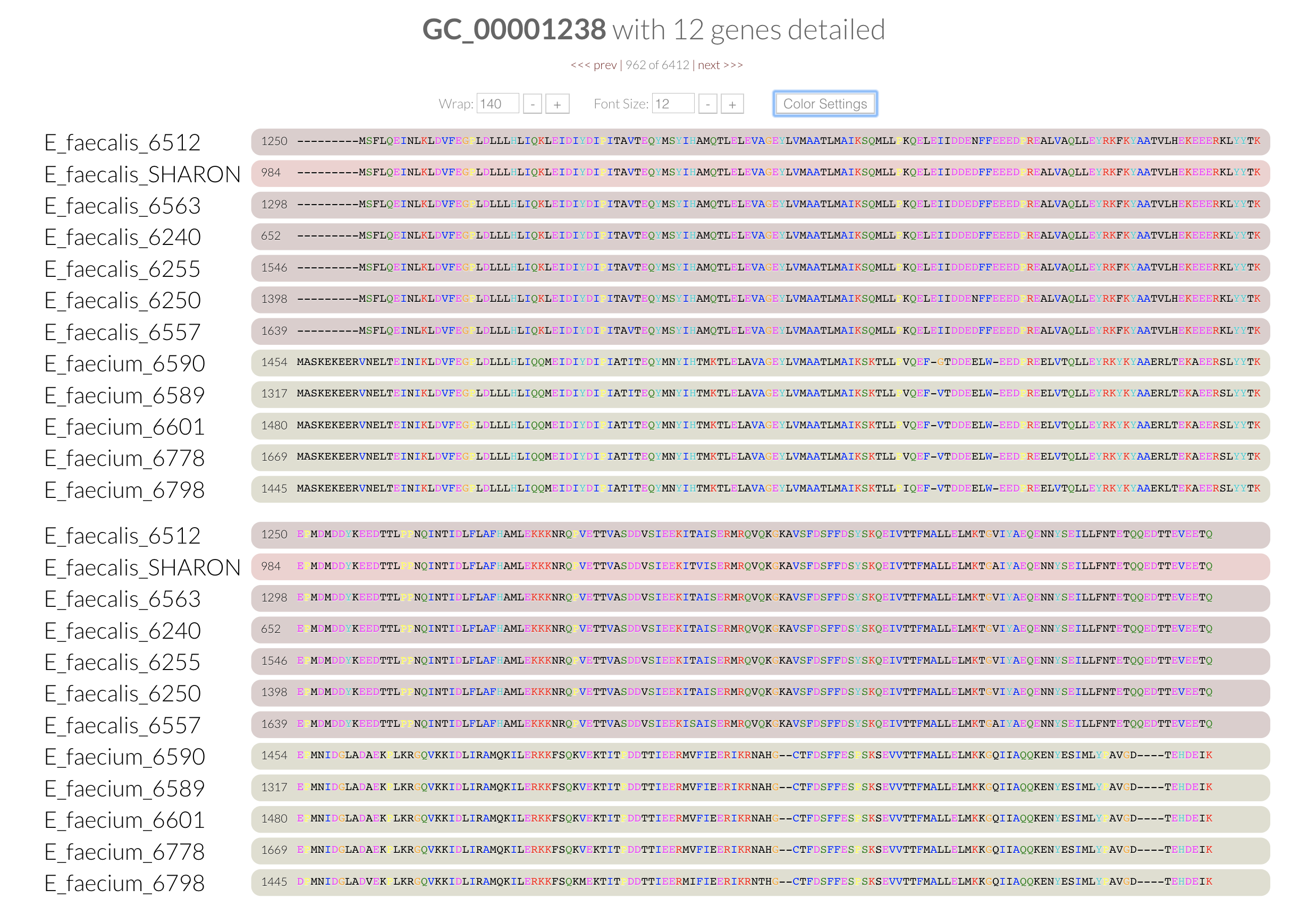

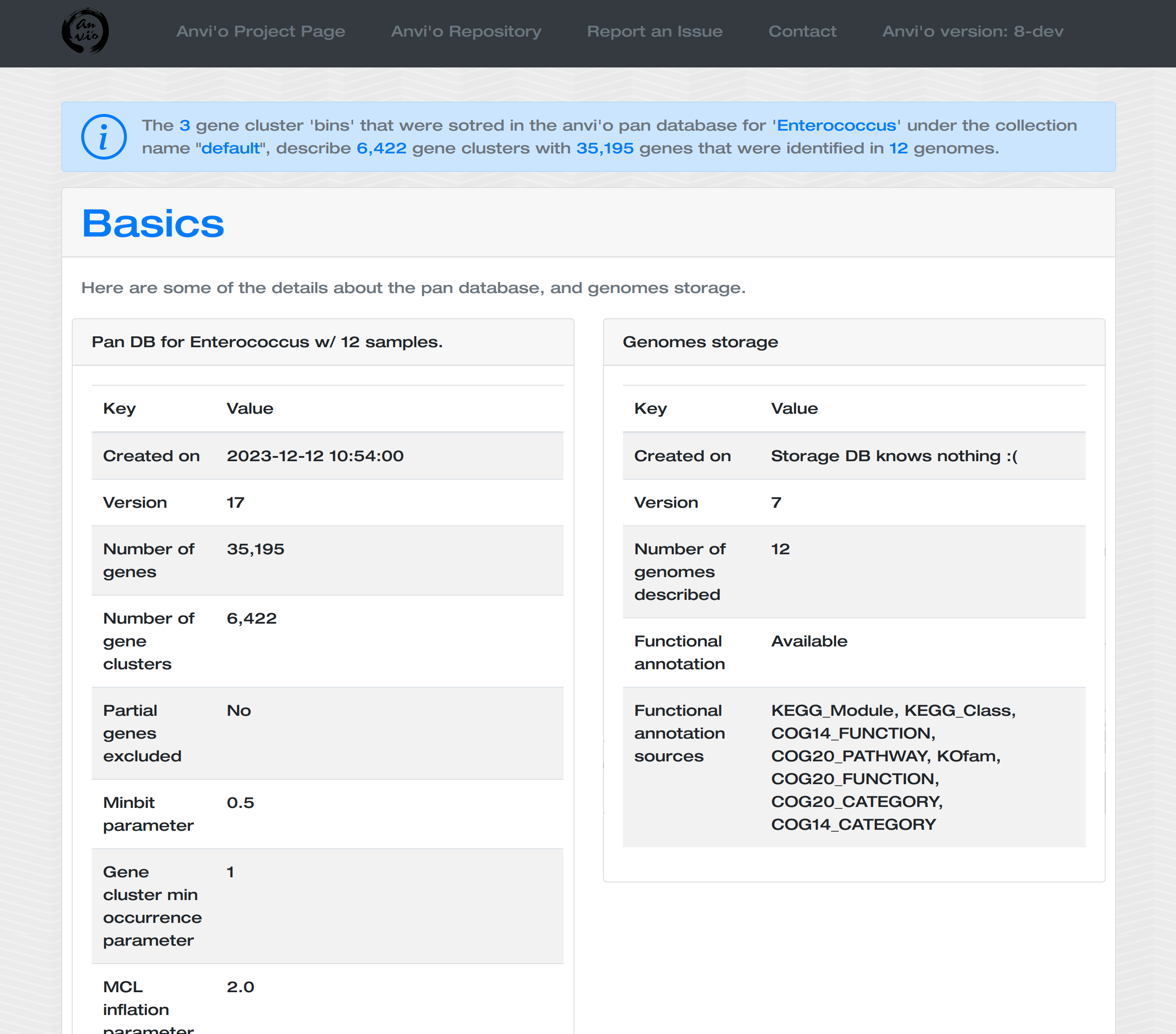

Computing and visualizing the pangenome

Now we have the genomes storage, we can characterize the pangenome:

anvi-pan-genome -g Enterococcus-GENOMES.db \

-n Enterococcus \

-o PAN \

--num-threads 10

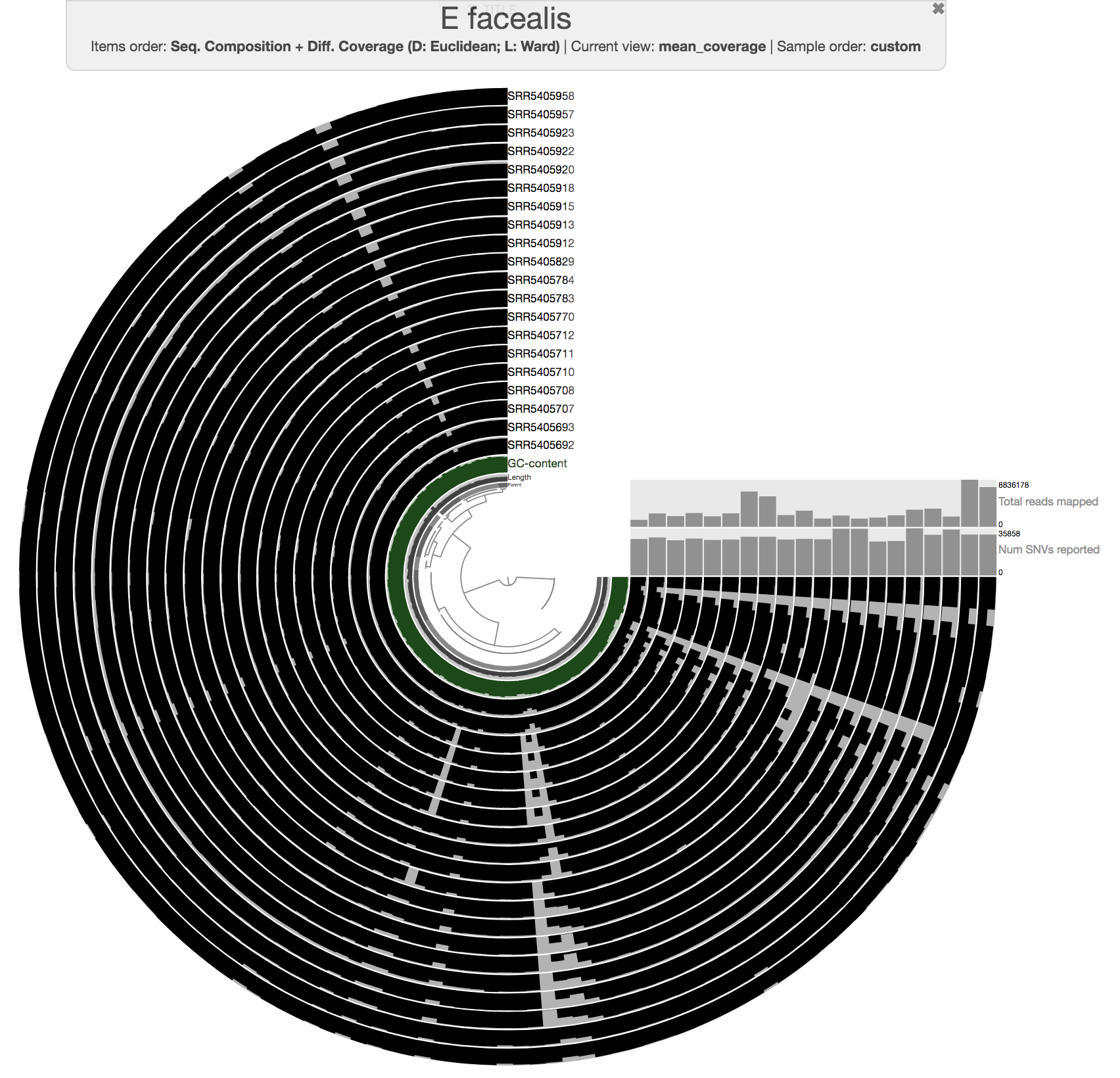

Now the pangenome is ready to display. This is how you can run it:

anvi-display-pan -g Enterococcus-GENOMES.db \

-p PAN/Enterococcus-PAN.db \

--title "Enterococccus Pan"

To get this ugly looking display:

Never settle for bad-looking figures

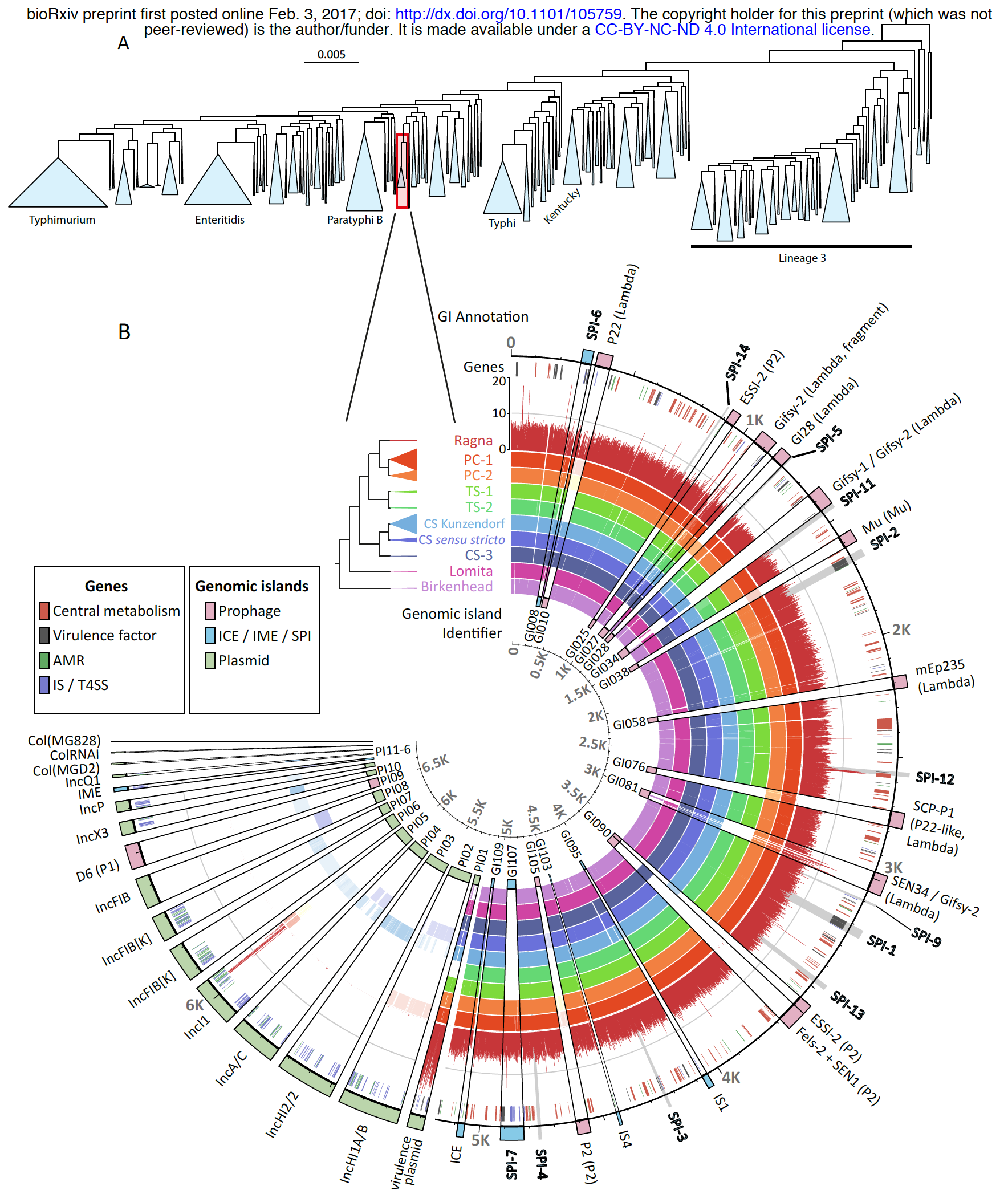

Well, you can do whatever you want, but just take a look at this anvi’o figure:

This is a pangenomic analysis visualized with anvi’o by Zhemin Zhou and his colleagues. Here is more information about this excellent study: An 800-years-old Salmonella genome, and a lovely anvi’o figure.

I am not arguing that every figure should look like that one, but I would like you to consider the fact that if you would like to do better, there are ways. Here is a post about working with SVG files anvi’o generate. Finally, please visit this reproducible workflow to see a step-by-step for a more humanly-possible prettification of a pangenome:

This GIF shows the evolution of an anvi'o figure from the raw anvi'o display to its published form :) We know the anvi'o interactive interface can be scary, but it IS powerful :)https://t.co/IfvDndFgBD describes every step in the interface to go from the 1st to the 2nd image. pic.twitter.com/sb4dzLKAg3

— A. Murat Eren (Meren) (@merenbey) August 27, 2019

OK. I made the previous display a bit prettier for you. If you kill the server, and import the state file the following way, and re-run the server,

anvi-import-state -p PAN/Enterococcus-PAN.db \

--state additional-files/state-files/state-pan.json \

--name default

anvi-display-pan -g Enterococcus-GENOMES.db \

-p PAN/Enterococcus-PAN.db \

--title "Enterococccus Pan"

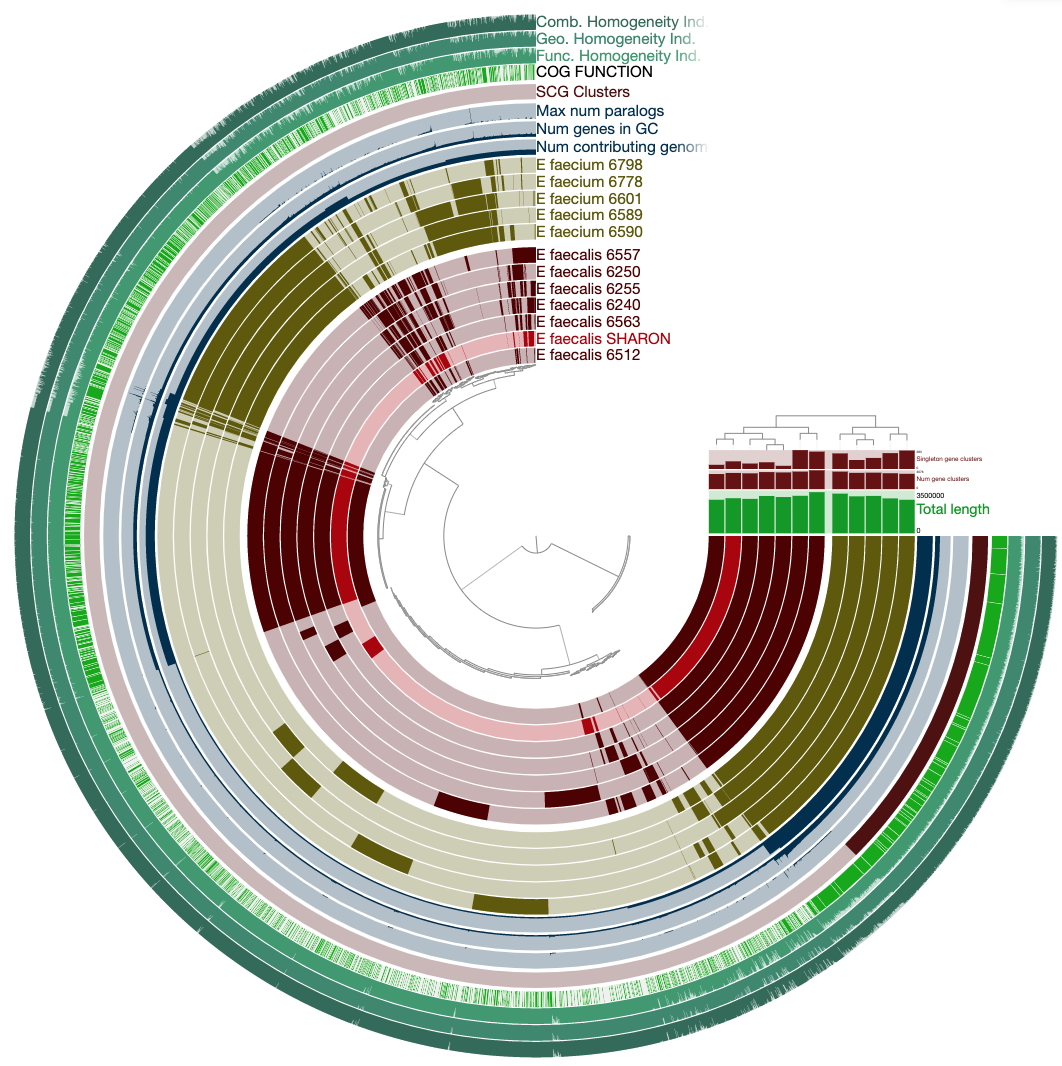

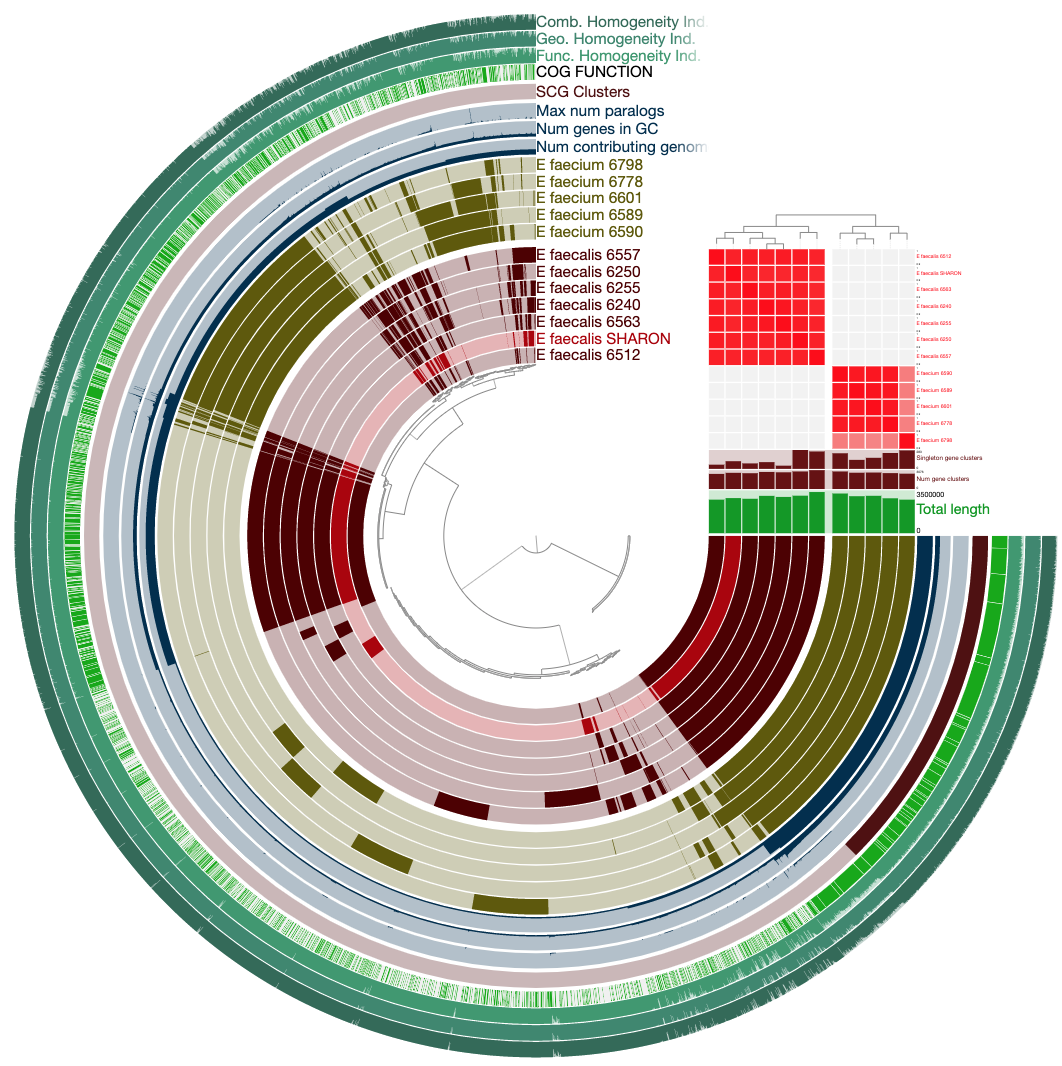

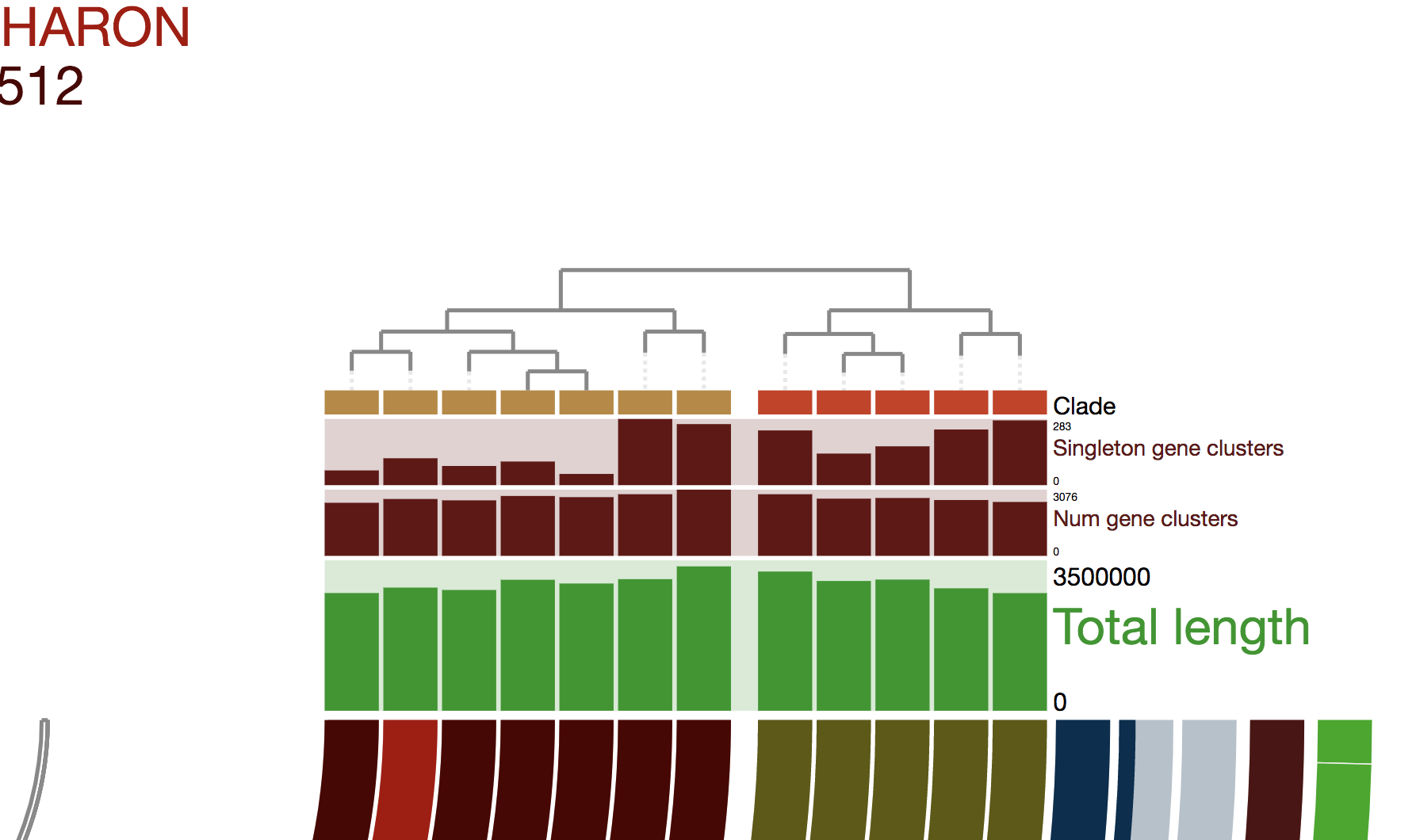

It will look much more reasonable:

Now not only can we see how our E. faecalis genome looks like compared to available genomes, we can also see that it is not missing or carrying a great number of proteins compared to other genomes. The clustering of genomes based on gene clusters indicate that it is most similar to the genome Enterococcus faecalis 6512, which, according to the 00_INFO_ABOUT_EXTERNAL_GENOMES.txt under additional-files/pangenomics/external-genomes directory, corresponds to the assembly ID ASM17257v2 if you were to be interested in exploring further.

Adding average nucleotide identity

The pangenome tells us about the similarities and dissimilarities between those genomes given the amino acid sequences of open reading frames we identified within each one of them. We could also compare them to each other by computing the average nucleotide identity between them. Anvi’o comes with a program to do that, which uses PyANI by Pritchard et al. to pairwise align DNA sequences between genomes to estimate similarities between them. See this tutorial for details.

We can compute average nucleotide identity among our genomes, and add them to the pan database the following way:

anvi-compute-genome-similarity -e additional-files/pangenomics/external-genomes.txt \

-i additional-files/pangenomics/internal-genomes.txt \

--program pyANI \

-o ANI \

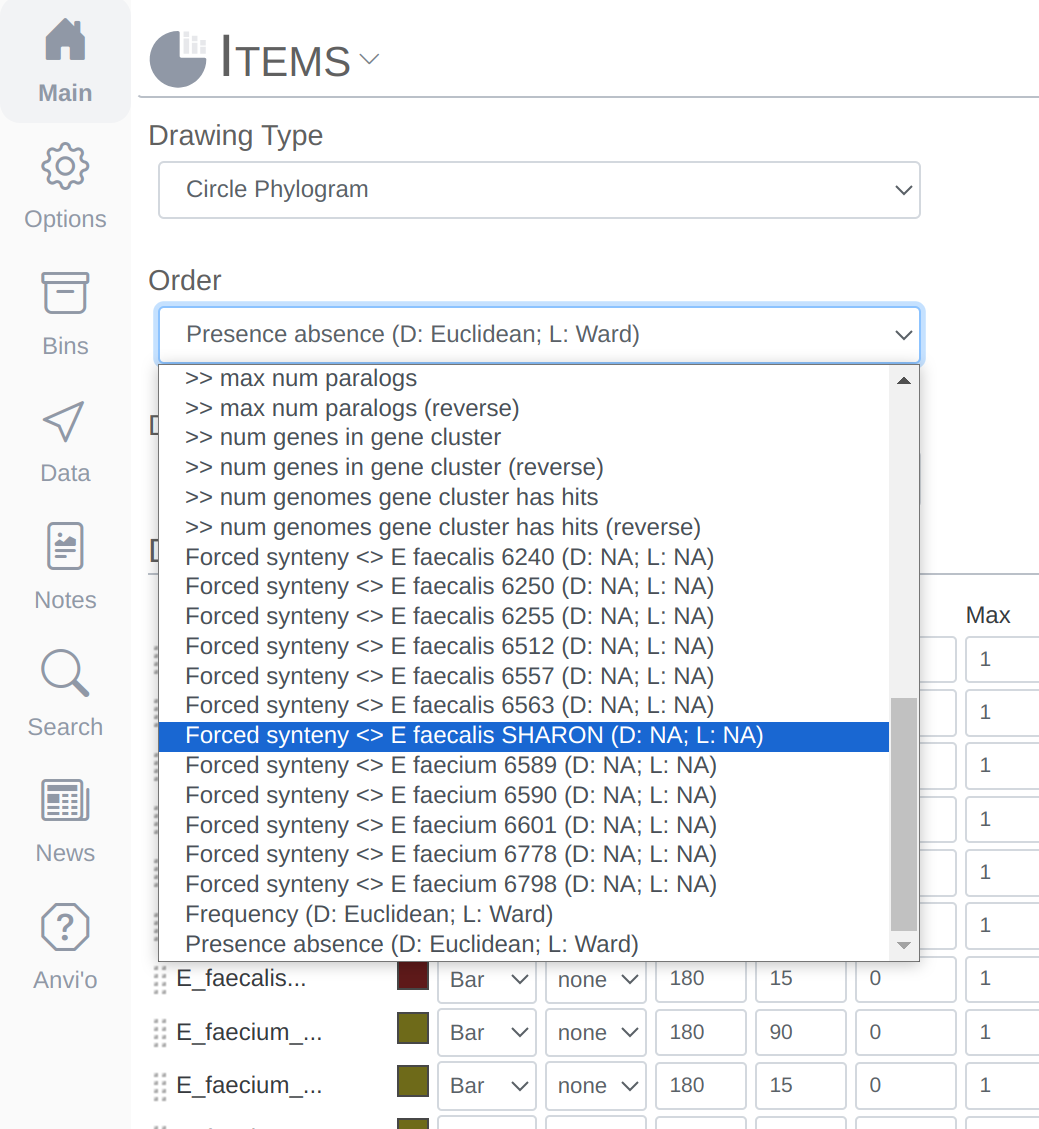

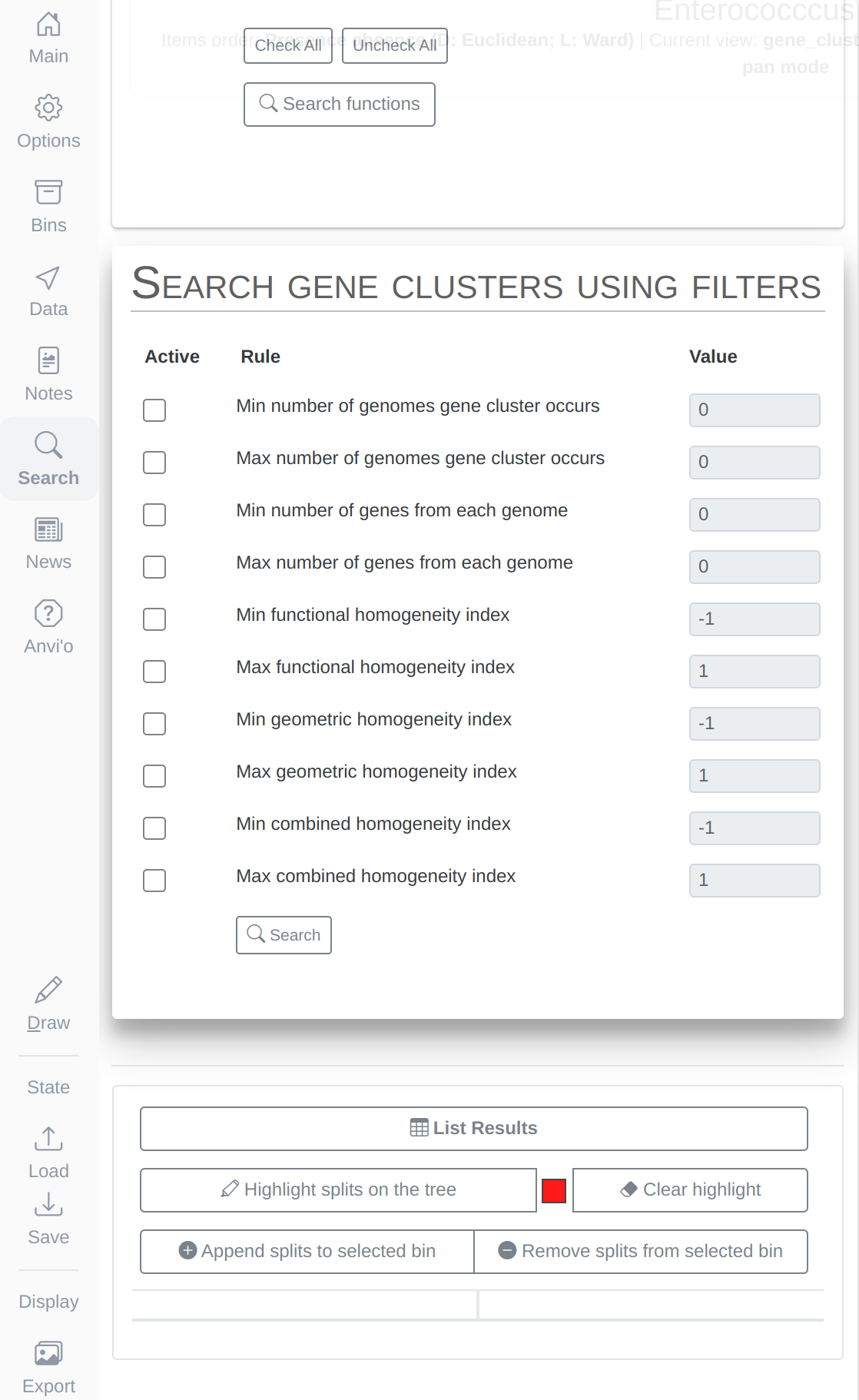

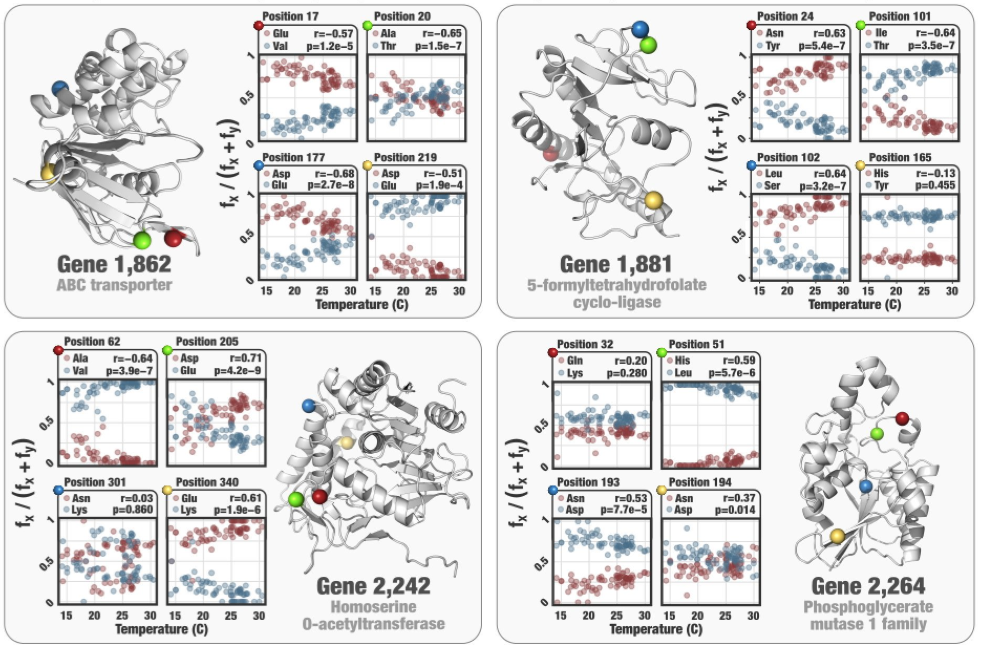

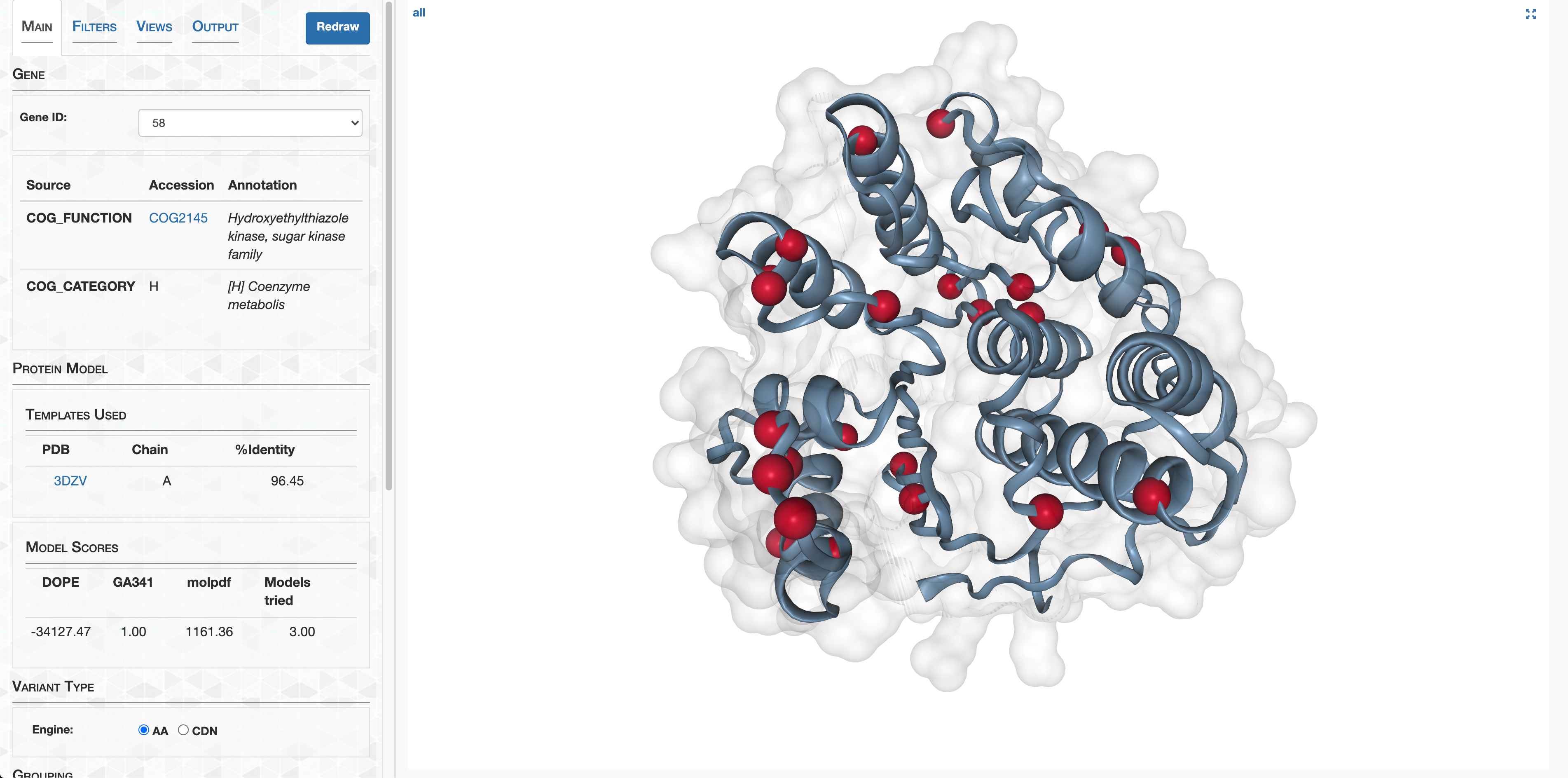

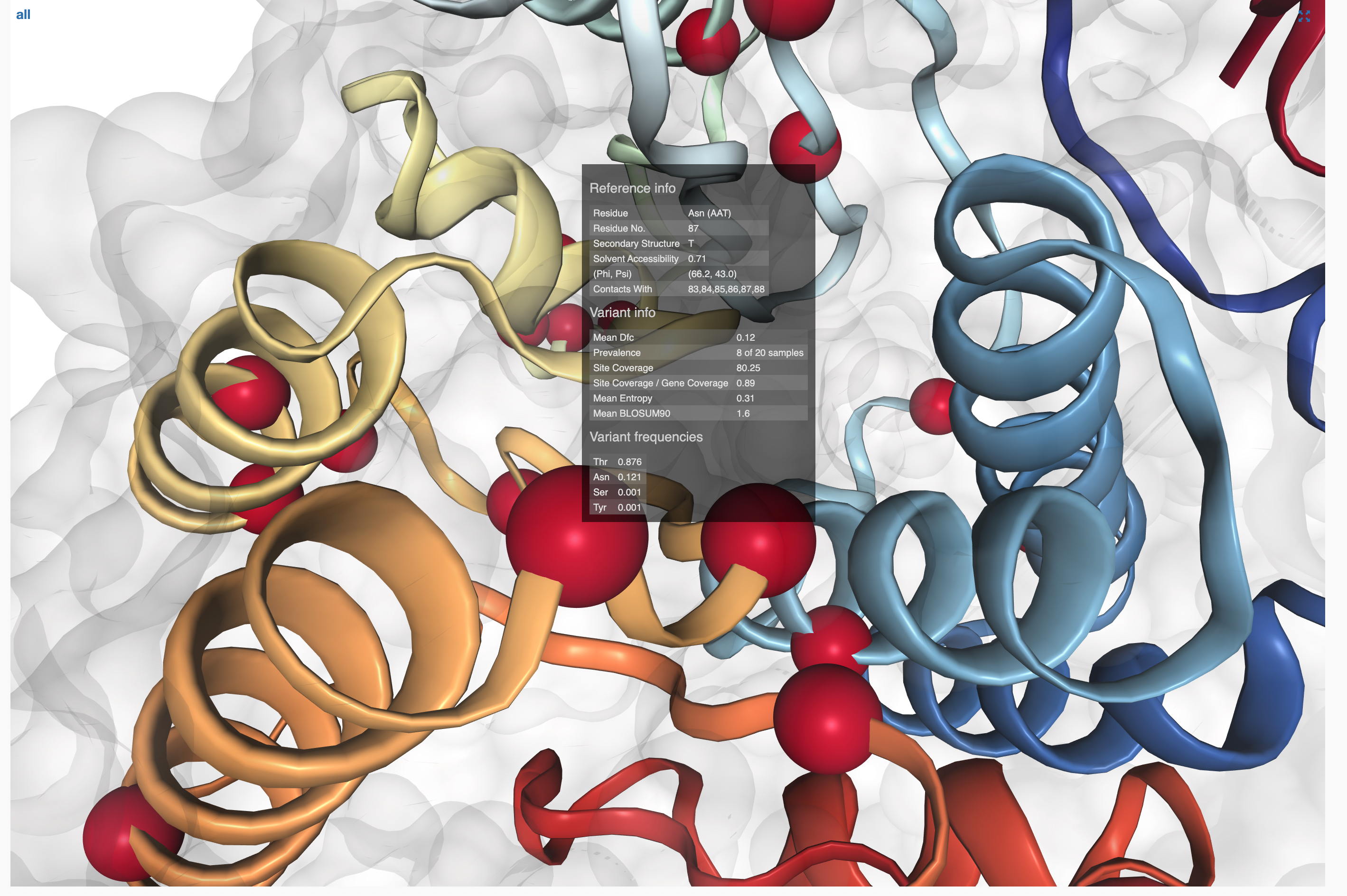

-T 6 \